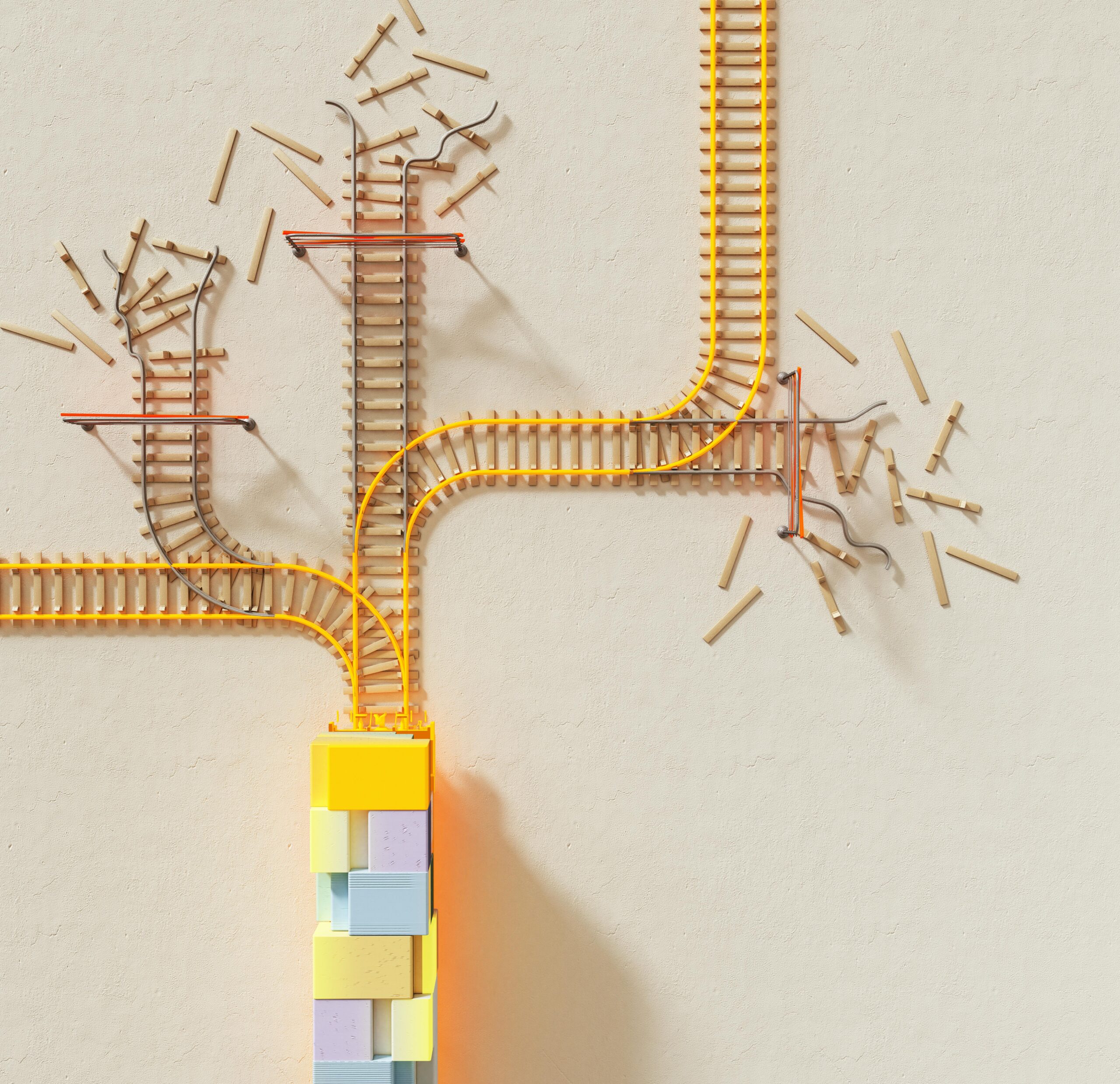

Manual override mishaps occur when human intervention in automated systems inadvertently introduces errors, undermines efficiency, and creates cascading failures across operational workflows.

🚨 The Paradox of Human Control in Automated Systems

In an era where automation dominates industrial processes, healthcare systems, aviation, and manufacturing, the ability to manually override automated systems remains a critical safety feature. Yet this very capability often becomes a vulnerability. When operators intervene in automated processes, they bring with them cognitive biases, incomplete information, stress-induced decision-making, and the potential for human error that automation was designed to eliminate.

The relationship between human operators and automated systems exists in a delicate balance. While automation excels at consistency, speed, and processing vast amounts of data, humans provide judgment, creativity, and adaptability in unprecedented situations. However, when these two elements clash during manual override scenarios, the results can range from minor inefficiencies to catastrophic failures.

📊 Understanding the Mechanisms Behind Override Errors

Manual override errors typically emerge from several interconnected factors that compromise the decision-making process. The complexity of modern automated systems means that operators may not fully understand the downstream consequences of their interventions. This knowledge gap creates fertile ground for unintended errors.

Cognitive Load and Decision Fatigue

Operators facing system anomalies experience elevated cognitive load, particularly when time pressure demands immediate action. Decision fatigue accumulates throughout a shift, degrading the quality of override decisions. Human factors research has repeatedly linked long shifts and fatigue to higher error rates, so override decisions made in the final hours of an extended shift deserve particular scrutiny.

The mental model operators hold of system functionality may diverge significantly from actual system behavior, especially in complex integrated environments. This model mismatch leads operators to make interventions based on incomplete or incorrect assumptions about system state and behavior.

Mode Confusion and Automation Complacency

Mode confusion occurs when operators misunderstand which automated mode the system is operating in, leading to inappropriate interventions. This has proved particularly dangerous in aviation, where pilots have intervened believing the aircraft was in one mode when it was actually operating in another.

Automation complacency represents the opposite problem—operators become so reliant on automated systems that their monitoring skills atrophy. When abnormal situations require intervention, they lack the situational awareness necessary for effective manual override.

⚙️ Real-World Consequences Across Industries

Manual override mishaps manifest differently across sectors, but share common patterns of human-automation interaction failure. Examining these industry-specific challenges reveals universal lessons about intervention risks.

Aviation Industry Vulnerabilities

Commercial aviation provides numerous case studies in override mishaps. In the Air France Flight 447 disaster, the autopilot disconnected after ice-blocked pitot tubes produced inconsistent airspeed readings. The crew, now flying manually and confused about the aircraft's state and its instrument readings, made control inputs that put the aircraft into an aerodynamic stall from which it did not recover.

Modern aircraft incorporate multiple layers of automation that pilots can override at various levels. However, these override capabilities create complexity that demands extensive training and current proficiency—requirements that prove challenging to maintain in an industry where automation handles most routine operations.

Manufacturing and Process Control

In manufacturing environments, manual overrides of automated processes frequently introduce quality defects, equipment damage, and safety hazards. When operators bypass safety interlocks or automated quality controls, they usually intend to improve efficiency or meet production targets, but the result is often a far more costly problem.

Chemical processing facilities face particularly acute risks from manual intervention. Automated systems manage complex thermodynamic processes where variables interact in non-linear ways. Manual adjustments based on single variable observations can trigger cascading failures across the entire process system.

Healthcare Technology Challenges

Medical device alarms and automated clinical decision support systems face frequent manual overrides. Alarm fatigue leads healthcare providers to disable or ignore automated alerts, occasionally missing critical warnings. Conversely, overriding an appropriate automated recommendation on the basis of incomplete clinical reasoning can introduce medication errors and treatment complications.

Infusion pumps, ventilators, and monitoring systems all incorporate manual override capabilities essential for individualized patient care. However, these same capabilities enable errors when clinicians misunderstand device programming or fail to account for automated safety features they’ve bypassed.

🔍 The Efficiency Impact of Manual Interventions

Beyond safety concerns, manual overrides significantly impact operational efficiency, often in ways that extend far beyond the immediate intervention. Understanding these efficiency implications helps organizations make informed decisions about when manual intervention truly adds value versus when it undermines system performance.

Workflow Disruption and Recovery Time

Automated systems optimize workflows based on comprehensive data analysis and predictive algorithms. Manual interventions disrupt these optimized sequences, requiring system recalibration and rebalancing that consumes time and resources. The recovery period following manual override often proves longer and more costly than the original problem the intervention aimed to solve.

In logistics and supply chain management, manual route changes or inventory adjustments ripple through interconnected systems, forcing recalculation of dependent variables and creating inefficiencies that compound over time. A single manual intervention in a complex supply network can force a large number of small adjustments elsewhere in the system.
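The ripple effect described above can be made concrete with a small sketch. It assumes the planning system can be modeled as a dependency map, which is a simplification; the node names in `DEPENDENTS` are hypothetical and chosen only for illustration.

```python
from collections import deque

# Hypothetical dependency map: each key feeds the nodes listed in its value.
DEPENDENTS = {
    "route_plan": ["truck_schedule", "dock_assignment"],
    "truck_schedule": ["driver_roster", "delivery_eta"],
    "dock_assignment": ["warehouse_picking"],
    "warehouse_picking": ["inventory_levels"],
    "delivery_eta": ["customer_notifications"],
}


def downstream_of(node, dependents):
    """Return every node that needs recalculation if `node` is changed by hand."""
    seen = set()
    queue = deque([node])
    while queue:
        current = queue.popleft()
        for child in dependents.get(current, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


affected = downstream_of("route_plan", DEPENDENTS)
print(len(affected), sorted(affected))
```

Showing an operator this list before a manual change is one way to make the hidden cost of an intervention visible.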

Data Integrity and Analytics Degradation

Manual overrides introduce data anomalies that compromise the machine learning algorithms and predictive analytics that modern systems rely upon. When operators bypass automated processes without proper documentation, they create data gaps and inconsistencies that reduce the accuracy of future automated decision-making.

This degradation becomes particularly problematic in systems that use historical data for continuous improvement. Manual interventions that aren’t properly logged appear as unexplained variations in system performance, corrupting the data foundation that automated optimization depends upon.
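A minimal sketch of why logging matters for analytics, assuming each performance sample carries an `override` flag. The record layout and the `cycle_time_s` field are illustrative assumptions, not taken from any particular system.

```python
from statistics import mean

# Hypothetical cycle-time samples; "override" marks samples taken under manual control.
records = [
    {"cycle_time_s": 42.0, "override": False},
    {"cycle_time_s": 41.5, "override": False},
    {"cycle_time_s": 58.0, "override": True},
    {"cycle_time_s": 42.5, "override": False},
]


def baseline(records, include_overrides=False):
    """Average cycle time, optionally leaving out samples recorded during overrides."""
    values = [
        r["cycle_time_s"]
        for r in records
        if include_overrides or not r["override"]
    ]
    return mean(values)


print(baseline(records))                          # automated operation only
print(baseline(records, include_overrides=True))  # skewed by the manual sample
```

If the override had not been flagged, the slow sample would be indistinguishable from a real shift in automated performance.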

🛡️ Designing Systems That Minimize Override Risks

Reducing manual override mishaps requires thoughtful system design that acknowledges human limitations while preserving necessary intervention capabilities. The goal isn’t eliminating human involvement but rather creating interaction models that support effective human-automation collaboration.

Graduated Authority and Confirmation Requirements

Implementing graduated levels of override authority based on consequence severity helps prevent casual interventions in critical systems. Low-risk overrides might proceed with single-operator authority, while high-consequence interventions require multi-person confirmation, supervisor approval, or mandatory delay periods that allow for reflection.

Confirmation requirements should incorporate forcing functions that require operators to explicitly acknowledge the consequences of their intervention. Simple yes/no confirmations prove insufficient; effective designs require operators to demonstrate understanding of what their override will change and what systems will be affected.
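The two ideas above, graduated authority and a forcing function, can be sketched together. This is an illustration under assumed rules: the severity levels, the approver counts in `REQUIRED_APPROVERS`, and the requirement that operators name every affected system are all hypothetical policy choices.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Assumed policy: number of distinct approvers required per severity level.
REQUIRED_APPROVERS = {Severity.LOW: 1, Severity.MEDIUM: 2, Severity.HIGH: 3}


@dataclass
class OverrideRequest:
    target: str
    severity: Severity
    affected_systems: frozenset  # what the override actually touches
    acknowledged_systems: set = field(default_factory=set)  # what the operator named
    approvers: set = field(default_factory=set)


def may_proceed(request):
    """Return (allowed, reason) under the assumed policy."""
    # Forcing function: the operator must name every system the override affects.
    missing = request.affected_systems - request.acknowledged_systems
    if missing:
        return False, "unacknowledged systems: " + ", ".join(sorted(missing))
    # Graduated authority: higher severity needs more distinct approvers.
    needed = REQUIRED_APPROVERS[request.severity]
    if len(request.approvers) < needed:
        return False, f"needs {needed} approvers, has {len(request.approvers)}"
    return True, "ok"
```

A request is refused until the operator has acknowledged each affected system and enough people have signed off, which is a stronger check than a yes/no dialog.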

Enhanced Transparency and Mental Model Alignment

System interfaces must provide operators with comprehensive visibility into automated decision-making logic. When operators understand why automation is taking specific actions, they make better judgments about when intervention is truly necessary versus when apparent anomalies fall within normal automated operation.

Training programs should emphasize building accurate mental models of system behavior under various conditions. Simulation exercises that expose operators to rare scenarios help maintain the skills necessary for effective intervention when automated systems encounter truly unprecedented situations.

📱 Technology Solutions for Intervention Management

Modern technology offers tools for managing manual override risks while preserving operational flexibility. These solutions balance automation benefits with human judgment in ways that minimize error introduction.

Intelligent Decision Support Systems

Advanced decision support systems provide operators with comprehensive consequence analysis when they contemplate manual intervention. These systems model the downstream effects of proposed overrides, alerting operators to potential cascading failures or efficiency impacts they might not have considered.

Real-time risk assessment algorithms evaluate override requests against historical patterns, current system state, and predictive models to flag interventions that deviate significantly from successful past practices. This doesn’t prevent overrides but provides operators with information necessary for informed decision-making.
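One simple way to compare a proposed override against historical patterns is a z-score check. The sketch below assumes the override is a single numeric setpoint and that a list of past successful values is available; real risk assessment would also use system state and predictive models. The function name and the threshold of 3.0 are illustrative.

```python
from statistics import mean, stdev


def deviation_flag(proposed, history, threshold=3.0):
    """Flag a proposed value that sits far from past successful overrides."""
    if len(history) < 2:
        return True  # too little history to judge, so ask for a second look
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return proposed != mu
    return abs(proposed - mu) / sigma > threshold


# Hypothetical setpoints from earlier overrides that went well.
history = [70.0, 72.0, 71.0, 69.0, 73.0]
print(deviation_flag(72.0, history))  # close to past practice
print(deviation_flag(85.0, history))  # far outside past practice
```

The flag informs the operator rather than blocking the action, in keeping with the advisory role described above.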

Automated Documentation and Audit Trails

Comprehensive logging systems automatically capture every manual intervention, including operator identity, timestamp, system state before and after override, and stated justification. This documentation serves multiple purposes: accountability, learning from past interventions, and maintaining data integrity for analytical systems.
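A minimal sketch of such a record, using the fields listed above. The class and function names are hypothetical, and a plain list of JSON lines stands in for whatever durable, append-only store a real system would use.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    operator_id: str
    timestamp: str
    state_before: dict
    state_after: dict
    justification: str


def record_override(log, operator_id, state_before, state_after, justification):
    """Append one override entry to `log` as a JSON line and return the record."""
    if not justification.strip():
        raise ValueError("a justification is required for every override")
    entry = OverrideRecord(
        operator_id=operator_id,
        timestamp=datetime.now(timezone.utc).isoformat(),
        state_before=dict(state_before),
        state_after=dict(state_after),
        justification=justification.strip(),
    )
    log.append(json.dumps(asdict(entry), sort_keys=True))
    return entry
```

Rejecting an empty justification at write time keeps the audit trail usable for later analysis.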

Post-intervention analysis tools leverage this documentation to identify patterns in override behavior, revealing systematic issues that training or system redesign should address. Organizations that regularly review override patterns often discover that many interventions address the same recurring automated system limitations that targeted improvements could eliminate.

👥 Organizational Culture and Override Behavior

Technical solutions alone cannot eliminate manual override risks. Organizational culture profoundly influences when and how operators choose to intervene in automated processes. Cultivating a culture that supports appropriate intervention while discouraging unnecessary overrides requires deliberate effort.

Just Culture and Error Reporting

Organizations implementing just culture principles see more honest reporting of override mishaps, enabling systemic learning. When operators fear punishment for intervention errors, they conceal mistakes, preventing organizational learning and allowing the same errors to recur. Just culture distinguishes between honest mistakes, at-risk behavior, and reckless actions, responding appropriately to each.

Encouraging operators to report not just errors but near-misses and intervention decisions that fortunately didn’t result in problems provides early warning of systematic issues. This proactive reporting culture helps organizations address override risks before they manifest as actual failures.

Empowerment and Responsibility Balance

Operators need clear guidance on when manual intervention represents appropriate judgment versus inappropriate undermining of automated systems. Organizations should explicitly define scenarios where intervention is expected, scenarios where it’s prohibited, and gray areas where operator judgment should prevail after careful consideration.

Regular training scenarios that present ambiguous situations help operators develop the judgment necessary for these gray area decisions. Case study discussions of past override incidents—both successful interventions and mishaps—provide valuable learning without the consequences of real-world errors.

🔮 Future Directions in Human-Automation Interaction

Emerging technologies and evolving design philosophies promise to transform how manual override capabilities integrate into automated systems. Understanding these trends helps organizations prepare for changing interaction paradigms.

Adaptive Automation and Dynamic Function Allocation

Adaptive automation systems dynamically shift control between human operators and automated systems based on current conditions, operator state, and task demands. Rather than requiring operators to explicitly override automation, these systems proactively transfer control when human judgment becomes necessary, then resume automation when appropriate.

Physiological monitoring of operator state—measuring fatigue, stress, and cognitive load—enables systems to adjust their interaction models in real-time. When operators show signs of diminished capacity, systems can increase their level of automated support or require additional confirmations before processing override requests.
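As a rough illustration of the second idea, the sketch below raises the number of confirmation steps as operator state worsens. It assumes fatigue, stress, and cognitive load have already been reduced to scores between 0 and 1; that scale and the cut-offs of 0.5 and 0.8 are invented for the example.

```python
def confirmations_required(base, fatigue, stress, load):
    """Scale confirmation steps with operator state scores in [0, 1] (assumed scale)."""
    for name, value in (("fatigue", fatigue), ("stress", stress), ("load", load)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    worst = max(fatigue, stress, load)
    if worst >= 0.8:
        return base + 2  # severely diminished capacity
    if worst >= 0.5:
        return base + 1  # moderately diminished capacity
    return base


print(confirmations_required(1, fatigue=0.2, stress=0.1, load=0.3))
print(confirmations_required(1, fatigue=0.6, stress=0.1, load=0.3))
print(confirmations_required(1, fatigue=0.2, stress=0.9, load=0.3))
```

Using the worst of the three scores is a conservative design choice; a weighted combination would be another reasonable option.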

Explainable AI and Transparent Decision-Making

As artificial intelligence increasingly drives automated decision-making, explainability becomes crucial for effective human oversight. AI systems that can articulate their reasoning enable operators to make informed override decisions based on genuine understanding rather than intuition or incomplete information.

Transparent AI systems that expose confidence levels in their recommendations help operators identify situations where automated decisions may be suboptimal, warranting human intervention. This transparency transforms the override decision from adversarial human-versus-machine competition into collaborative decision-making.
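Exposed confidence levels can also drive routing. The sketch below assumes the automated system reports a confidence between 0 and 1 with each recommendation; the `review_below` cut-off of 0.75 and the returned field names are hypothetical.

```python
def route_decision(recommendation, confidence, review_below=0.75):
    """Send low-confidence recommendations to a human instead of acting on them."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    mode = "human_review" if confidence < review_below else "automatic"
    return {
        "recommendation": recommendation,
        "confidence": confidence,
        "mode": mode,
    }


print(route_decision("reduce feed rate", 0.93))
print(route_decision("reduce feed rate", 0.58))
```

Here the system itself invites intervention when it is unsure, so the operator is not left to guess when an override is warranted.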

💡 Practical Strategies for Reducing Override-Related Errors

Organizations can implement concrete strategies to minimize manual override mishaps while maintaining necessary intervention capabilities. These practical approaches address both technical and human factors dimensions of the challenge.

First, conduct regular audits of override frequency and outcomes to identify patterns suggesting systematic problems. High override rates in specific system functions often indicate automation design flaws that modifications could address, reducing the need for human intervention.

Second, implement structured override protocols that require operators to document their reasoning before executing interventions. This brief pause creates an opportunity for reflection and helps operators recognize when emotional reactions rather than rational analysis drive their decision.

Third, establish feedback loops that inform operators about the consequences of their interventions. When operators see how their overrides affected system performance—both positive and negative outcomes—they develop better calibration of when intervention adds value versus when it introduces problems.

Fourth, invest in simulation-based training that exposes operators to diverse scenarios requiring override decisions. Realistic simulation builds the pattern recognition and decision-making skills necessary for effective intervention while allowing operators to learn from mistakes without real-world consequences.

Fifth, design override interfaces that naturally slow down the intervention process for high-consequence decisions. Strategic friction—requiring manual entry of values rather than simple button clicks, for example—reduces impulsive overrides while still permitting deliberate, considered interventions.
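The first and third strategies both depend on summarizing override activity per system function. The sketch below shows one way to do that, assuming each logged event records the function involved, whether it was overridden, and whether the outcome was acceptable. The event layout and function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical event log: one entry per automated decision.
events = [
    {"function": "temperature_control", "overridden": True, "outcome_ok": False},
    {"function": "temperature_control", "overridden": True, "outcome_ok": True},
    {"function": "temperature_control", "overridden": True, "outcome_ok": True},
    {"function": "temperature_control", "overridden": False, "outcome_ok": True},
    {"function": "conveyor_speed", "overridden": True, "outcome_ok": True},
    {"function": "conveyor_speed", "overridden": False, "outcome_ok": True},
    {"function": "conveyor_speed", "overridden": False, "outcome_ok": True},
    {"function": "conveyor_speed", "overridden": False, "outcome_ok": True},
]


def override_summary(events):
    """Count events, overrides, and bad override outcomes per system function."""
    totals = defaultdict(lambda: {"events": 0, "overrides": 0, "bad_overrides": 0})
    for event in events:
        row = totals[event["function"]]
        row["events"] += 1
        if event["overridden"]:
            row["overrides"] += 1
            if not event["outcome_ok"]:
                row["bad_overrides"] += 1
    return {
        fn: {**row, "override_rate": row["overrides"] / row["events"]}
        for fn, row in totals.items()
    }


for fn, row in override_summary(events).items():
    print(fn, row)
```

A function with a high override rate is a candidate for redesign, while the count of bad outcomes gives operators direct feedback on how their interventions turned out.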

🎯 Finding the Optimal Balance

The fundamental challenge in managing manual override capabilities lies in balancing competing priorities. Organizations need human judgment for exceptional circumstances while minimizing opportunities for error introduction. This balance point shifts based on industry, specific applications, risk tolerance, and organizational maturity.

Mature organizations recognize that perfect elimination of override errors remains impossible as long as humans remain in the loop. The goal shifts from preventing all errors to building resilient systems that detect and recover from intervention mishaps quickly, minimizing their impact on safety and efficiency.

Continuous improvement processes should regularly reassess the human-automation function allocation, asking whether specific override capabilities remain necessary or whether automation advances have made certain interventions obsolete. As systems mature and automated capabilities expand, previously essential override functions may become unnecessary sources of risk.

The most effective approach treats human-automation interaction as a design problem requiring continuous attention rather than a fixed capability determined during initial system development. Regular evaluation of override patterns, emerging technologies, and operational experience enables organizations to evolve their approach as systems and understanding mature.

Ultimately, manual override mishaps represent not failures of humans or automation individually, but failures of the interaction design between them. By recognizing this fundamental truth, organizations can move beyond blame and toward systematic improvements that preserve human judgment while minimizing error introduction and efficiency degradation. The future belongs to systems that seamlessly integrate human and automated capabilities, leveraging the strengths of each while compensating for their respective limitations.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically-focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to the perceptual challenges of Cognitive Interpretation Limits, the strategic value of Decision Support Effectiveness, the cascading dangers of Error Amplification Risks, and the deliberate craft of Manual Chart Construction.

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.