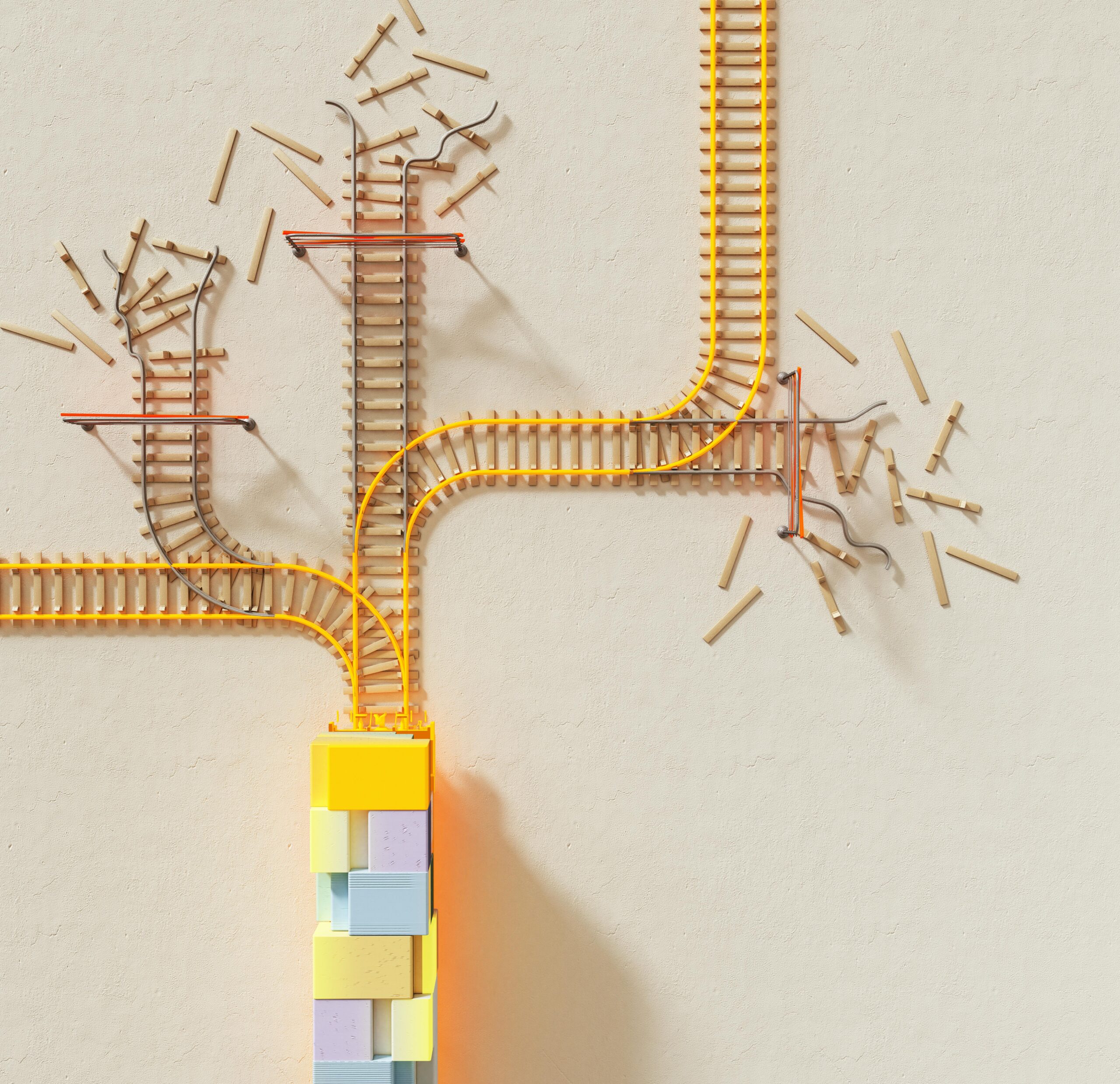

Software systems often hide errors beneath layers of redundancy and fallback mechanisms, creating invisible risks that compound silently until catastrophic failures emerge unexpectedly.

🔍 The Silent Threat Lurking in Modern Software Architecture

In the intricate world of software development, error masking represents one of the most insidious challenges facing engineering teams today. Unlike obvious bugs that crash systems immediately, masked errors operate in the shadows—quietly accumulating technical debt while systems appear to function normally. This phenomenon occurs when defensive programming practices, exception handling, and fault-tolerant designs inadvertently conceal underlying problems rather than addressing them at their root.

The concept of error masking isn’t new, but its consequences have grown as software has become more complex. Modern applications rely on distributed architectures, microservices, and multiple integration points, each introducing potential failure modes. When one component masks an error from another, the problem doesn’t disappear; it simply becomes harder to detect until it reaches a critical threshold where the entire system can no longer compensate.

Understanding the Mechanics of Error Concealment

Error masking typically manifests through several common programming patterns. Try-catch blocks that swallow exceptions without proper logging, default values that substitute for failed operations, and retry mechanisms that persist indefinitely all contribute to this phenomenon. While these techniques serve legitimate purposes in maintaining system availability, they can transform minor issues into major crises when misapplied.
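To make the pattern concrete, here is a minimal Python sketch contrasting a handler that swallows an exception with one that keeps the same fallback but records it. The function names, the `pricing` logger, and the `fallback_events` counter are hypothetical and chosen only for illustration; a real system would export the counter as a metric.

```python
import logging
from collections import Counter

logger = logging.getLogger("pricing")

# Hypothetical in-process counter standing in for an exported metric.
fallback_events = Counter()


def parse_price_masked(raw: str) -> float:
    """Masks the failure: the caller cannot tell a real 0.0 from a parse error."""
    try:
        return float(raw)
    except ValueError:
        return 0.0  # silent default: no log entry, no metric


def parse_price_visible(raw: str) -> float:
    """Same fallback value, but every activation is logged and counted."""
    try:
        return float(raw)
    except ValueError:
        fallback_events["price_parse"] += 1
        logger.warning("price parse failed, using default: raw=%r", raw)
        return 0.0
```

Both functions return the same value for bad input. The difference is that the second leaves a trail that monitoring can aggregate and alert on.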

Consider a typical e-commerce platform where payment processing occasionally fails. If the system automatically retries failed transactions without alerting monitoring systems, a gradual degradation in payment gateway performance might go unnoticed. The application continues functioning, customers receive appropriate responses, but behind the scenes, retry attempts accumulate, consuming resources and masking a deteriorating integration.
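One way to keep retries from hiding a deteriorating integration is to bound them and count every failed attempt. The sketch below assumes a hypothetical helper, `call_with_visible_retries`, and a plain `Counter` in place of a real metrics client; backoff between attempts is left out for brevity.

```python
import logging
from collections import Counter
from typing import Callable, Optional, TypeVar

T = TypeVar("T")
logger = logging.getLogger("payments")


class RetriesExhausted(Exception):
    """Raised when every attempt fails, so the failure reaches the caller."""


def call_with_visible_retries(
    operation: Callable[[], T], stats: Counter, max_attempts: int = 3
) -> T:
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as exc:
            # Each failed attempt is counted and logged, even if a later one succeeds.
            stats["failed_attempts"] += 1
            logger.warning("attempt %d/%d failed: %s", attempt, max_attempts, exc)
            last_error = exc
    stats["exhausted"] += 1
    raise RetriesExhausted(f"gave up after {max_attempts} attempts") from last_error
```

A rising `failed_attempts` count alongside a flat customer-facing error rate is the signature of the masked degradation described above.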

The Psychology Behind Accepting Hidden Failures

Development teams often embrace error masking unconsciously, driven by competing priorities. Meeting deadlines, maintaining uptime metrics, and avoiding false alarms create incentives to implement “fire and forget” error handling. When systems appear stable on the surface, there’s little pressure to investigate occasional anomalies that don’t trigger immediate customer complaints.

This psychological aspect extends to organizational culture. Teams celebrated for maintaining high availability may inadvertently encourage practices that prioritize apparent stability over genuine system health. The result is a technical landscape where everyone believes operations are running smoothly, even as underlying fragility increases.

⚡ Critical Thresholds: When Masked Errors Explode

Every system has breaking points—resource limits, timeout thresholds, and capacity constraints that, when exceeded, cause cascading failures. Masked errors contribute to reaching these thresholds in unpredictable ways because their cumulative impact remains invisible until the moment of collapse.

Database connection pools provide a clear example. If application code masks connection failures by silently retrying, and the failed attempt never returns its connection to the pool, connections leak gradually. The application continues serving requests until the pool is exhausted, at which point the system fails suddenly and completely. What appeared to be reliable operation turns instantly into complete unavailability, with no warning signs visible to monitoring systems focused on high-level metrics.
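The leak can be reproduced with a toy pool. In the sketch below, `TinyPool`, `failing_query`, `leaky_call`, and `safe_call` are all hypothetical stand-ins rather than any real driver's API. The point is only the contrast between a swallowed error that skips the release and a context manager that always returns the connection.

```python
from contextlib import contextmanager


class TinyPool:
    """Toy fixed-size pool; stands in for a real database connection pool."""

    def __init__(self, size: int) -> None:
        self.size = size
        self.in_use = 0

    def acquire(self) -> object:
        if self.in_use >= self.size:
            raise RuntimeError("pool exhausted")
        self.in_use += 1
        return object()

    def release(self, conn: object) -> None:
        self.in_use -= 1

    @contextmanager
    def connection(self):
        conn = self.acquire()
        try:
            yield conn
        finally:
            self.release(conn)  # runs even when the query raises


def failing_query(conn: object) -> None:
    raise TimeoutError("query timed out")


def leaky_call(pool: TinyPool) -> None:
    conn = pool.acquire()
    try:
        failing_query(conn)
    except TimeoutError:
        return  # error swallowed, and the connection is never released
    pool.release(conn)


def safe_call(pool: TinyPool) -> None:
    with pool.connection() as conn:
        failing_query(conn)  # the error propagates; the connection is returned
```

With a pool of three, three calls to `leaky_call` are enough to make the next `acquire()` fail, while `safe_call` can fail any number of times without shrinking the pool.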

Cascade Effects in Distributed Systems

Modern microservices architectures amplify the dangers of error masking through interconnected dependencies. When Service A masks errors from Service B, and Service B masks errors from Service C, the compounding effect creates complex failure modes that defy traditional debugging approaches. Each service appears healthy in isolation, but the system as a whole operates in an increasingly fragile state.

These cascade effects become particularly problematic during peak load periods. Masked errors that remain dormant during normal operation suddenly manifest when traffic increases, resource contention rises, or external dependencies slow down. The system crosses multiple critical thresholds simultaneously, producing failures that seem to emerge from nowhere.

Real-World Consequences of Undetected Glitches

The software industry has witnessed numerous high-profile incidents where error masking contributed to catastrophic failures. Financial trading platforms have experienced multi-million dollar losses when accumulated rounding errors reached critical thresholds. Healthcare systems have endangered patient safety when masked data synchronization issues culminated in incorrect medical records. Cloud infrastructure providers have suffered prolonged outages when monitoring systems failed to detect gradually degrading components.

These incidents share common characteristics: extended periods of apparent stability, sudden catastrophic failure, and post-mortem analyses revealing warning signs that went unnoticed. In retrospect, the errors were always present, but masking mechanisms prevented early detection and intervention.

The Financial Impact of Delayed Detection

Beyond operational disruptions, error masking carries significant financial consequences. Organizations spend considerably more resources responding to major incidents than they would preventing them through better error visibility. Emergency troubleshooting requires expensive specialist time, often during inconvenient hours. Customer compensation, reputation damage, and lost revenue compound these direct costs.

Additionally, technical debt accumulates faster when errors remain masked. Code bases become increasingly complex as developers add workarounds for symptoms rather than fixing root causes. This complexity creates a vicious cycle where new features introduce more potential failure points, further increasing the risk of masked errors reaching critical thresholds.

🛠️ Detection Strategies for Hidden Software Anomalies

Combating error masking requires deliberate architectural decisions and monitoring practices. Comprehensive logging represents the first line of defense—every exception, retry attempt, and fallback activation should generate observable events. However, logging alone isn’t sufficient; teams need structured approaches to analyzing these events and identifying patterns indicating masked problems.

Distributed tracing technologies have emerged as powerful tools for revealing error masking in microservices environments. By tracking requests across service boundaries and correlating timing information, these systems expose hidden failures and performance degradations that traditional monitoring misses. When combined with anomaly detection algorithms, distributed tracing can alert teams to increasing error rates before critical thresholds are reached.

Implementing Effective Circuit Breakers

Circuit breaker patterns offer a sophisticated approach to managing failures without masking them completely. Rather than endlessly retrying failed operations, circuit breakers detect persistent problems and fail fast, exposing issues to monitoring systems while preventing resource exhaustion. Properly configured circuit breakers include telemetry that tracks state transitions, providing visibility into when a dependency moves between healthy (closed), recovering (half-open), and failed (open) states.

The key to effective circuit breakers lies in tuning thresholds appropriately. Too sensitive, and they trigger false alarms that erode confidence in monitoring systems. Too lenient, and they fail to prevent masked errors from accumulating. Organizations must invest time in understanding their specific failure modes and adjusting circuit breaker parameters based on empirical data.
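As a rough illustration, a circuit breaker with transition telemetry might look like the sketch below. This is a simplified, single-threaded version under stated assumptions: the class and parameter names are hypothetical, the thresholds are placeholders to be tuned against real failure data, and production libraries handle concurrency and richer policies.

```python
import time
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling the dependency while the circuit is open."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.state = "closed"
        self.failures = 0  # consecutive failures since the last success
        self.opened_at = 0.0
        self.transitions: List[Tuple[str, str]] = []  # telemetry: (old, new)

    def _move_to(self, new_state: str) -> None:
        self.transitions.append((self.state, new_state))
        self.state = new_state

    def call(self, operation: Callable[[], T]) -> T:
        if self.state == "open":
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("failing fast; dependency marked unhealthy")
            self._move_to("half_open")  # timeout elapsed: allow one trial call
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.state == "half_open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
                self._move_to("open")
            raise  # the original error still reaches the caller
        if self.state == "half_open":
            self._move_to("closed")
        self.failures = 0
        return result
```

The `transitions` list is the part that matters for visibility: exporting it, or emitting an event from `_move_to`, gives monitoring a direct signal each time a dependency changes state.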

Building Resilient Systems Without Masking Reality

True resilience doesn’t mean hiding problems—it means acknowledging failures while maintaining service continuity. This requires architectural patterns that embrace failure visibility. Chaos engineering practices, where teams deliberately introduce failures in production environments, help expose masked errors before they reach critical thresholds naturally.

Observability platforms that aggregate metrics, logs, and traces provide holistic views of system health. These platforms should surface not just binary success/failure states but gradual degradations, increasing latencies, and rising error rates. Dashboards designed around business outcomes rather than technical metrics help non-technical stakeholders understand when systems operate within acceptable parameters versus when hidden problems accumulate.

Cultural Shifts Toward Failure Transparency

Technology solutions alone cannot eliminate error masking—organizational culture must evolve to value transparency over superficial stability. Blameless post-mortems that analyze masked errors without punishing individuals create environments where teams proactively surface problems. Celebrating early detection of potential issues, even when they don’t cause customer impact, reinforces behaviors that prevent catastrophic failures.

Engineering leaders should establish metrics that track error visibility and resolution times for different severity levels. If teams consistently detect problems only after they reach critical thresholds, it indicates systemic issues with monitoring and error handling practices. Conversely, organizations that identify and address most errors while they remain minor demonstrate mature operational capabilities.

📊 Measuring and Monitoring System Health Accurately

Effective measurement requires looking beyond surface-level availability metrics. Service Level Indicators (SLIs) should capture not just whether requests succeed but also their quality—response times, data freshness, and operational costs. Error budgets that account for degraded performance, not just complete failures, provide more realistic assessments of system health.
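A quality-aware SLI can be sketched in a few lines. The example below assumes a hypothetical `Request` record, a 500 ms latency target, and the choice to count any retried request as "not good"; all three are illustrative assumptions, not a standard definition.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Request:
    ok: bool
    latency_ms: float
    retries: int = 0


def good_fraction(requests: Iterable[Request], latency_slo_ms: float = 500.0) -> float:
    """Fraction of requests that succeeded, met the latency target, and needed no retry."""
    items = list(requests)
    if not items:
        return 1.0
    good = sum(
        1 for r in items if r.ok and r.latency_ms <= latency_slo_ms and r.retries == 0
    )
    return good / len(items)


def budget_remaining(sli: float, slo_target: float) -> float:
    """Share of the error budget left: 1.0 is untouched, 0.0 or below is spent.

    Assumes slo_target < 1.0, since a 100% target leaves no budget to divide.
    """
    allowed_bad = 1.0 - slo_target
    actual_bad = 1.0 - sli
    return 1.0 - actual_bad / allowed_bad
```

For a window of 100 requests where all succeed but one is slow and one needed two retries, binary availability reads 100% while `good_fraction` reads 0.98. Against a 95% target, that already consumes about 40% of the budget.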

| Metric Type | Traditional Approach | Error-Aware Approach |
|---|---|---|
| Availability | Binary up/down status | Gradual degradation levels |
| Error Rate | Visible failures only | Including masked retries |
| Performance | Average response time | P95, P99 with trend analysis |
| Resource Usage | Current utilization | Historical patterns and anomalies |

This expanded metric framework gives early warning of masked errors accumulating toward critical thresholds. When P99 latencies increase while averages remain stable, it often indicates a growing problem that affects a subset of requests, which is precisely the scenario where error masking hides issues until the impact becomes widespread.
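The gap between averages and tail percentiles is easy to demonstrate with made-up numbers. The sketch below uses a nearest-rank percentile and two hypothetical latency windows of 1,000 requests each; the figures are invented purely to show the effect.

```python
import math
from statistics import mean
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds for two time windows.
last_week = [100.0] * 1000
this_week = [100.0] * 985 + [600.0] * 15  # 1.5% of requests now hit a slow path

for label, window in (("last week", last_week), ("this week", this_week)):
    print(
        label,
        "mean:", mean(window),
        "p95:", percentile(window, 95),
        "p99:", percentile(window, 99),
    )
```

In this invented data the mean moves from 100 ms to 107.5 ms and P95 does not move at all, while P99 jumps from 100 ms to 600 ms. An alert keyed only on the average would likely stay quiet.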

🚀 Proactive Prevention Through Design Principles

Preventing error masking begins during system design, not as an afterthought during operations. Fail-fast principles encourage explicit error propagation rather than silent handling. APIs should return meaningful error responses that downstream services can interpret and act upon, rather than generic success indicators that hide upstream problems.
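One possible shape for a response that keeps the fallback but refuses to hide it is sketched below. The `Recommendations` type, its `degraded` and `upstream_error` fields, and the simulated `fetch_personalized` failure are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Recommendations:
    items: List[str]
    degraded: bool = False  # True when a fallback produced the items
    upstream_error: Optional[str] = None  # what went wrong upstream, if anything


def fetch_personalized(user_id: str) -> List[str]:
    # Hypothetical upstream call; always fails here to simulate an outage.
    raise TimeoutError("ranking service timed out")


def get_recommendations(user_id: str) -> Recommendations:
    try:
        return Recommendations(items=fetch_personalized(user_id))
    except TimeoutError as exc:
        # Serve a generic list, but say so explicitly in the response.
        return Recommendations(
            items=["bestseller-1", "bestseller-2"],
            degraded=True,
            upstream_error=str(exc),
        )
```

Downstream callers can still render something useful, and they can also count degraded responses, surface them on dashboards, or decide to fail outright when personalization is essential.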

Immutable infrastructure and stateless services reduce opportunities for errors to accumulate undetected. When every deployment creates fresh instances without inherited state, many classes of masked errors—memory leaks, corrupted caches, gradually degrading processes—get automatically resolved through routine operations rather than accumulating toward failure.

Testing Strategies That Expose Hidden Failures

Comprehensive testing must include scenarios specifically designed to reveal error masking. Integration tests should inject faults at various system layers and verify that errors surface appropriately rather than disappearing into retry logic. Load testing should gradually increase traffic while monitoring for degradation patterns that indicate masked problems approaching thresholds.
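A fault-injection test along these lines can be written with nothing more than the standard `unittest` module. In the sketch below, `FlakyInventoryClient`, `check_stock`, and `InventoryUnavailable` are hypothetical names; the test double fails every call, and the test asserts that the failure surfaces after a bounded number of attempts instead of vanishing into retry logic.

```python
import unittest


class InventoryUnavailable(Exception):
    """Raised when the inventory dependency cannot be reached after retries."""


class FlakyInventoryClient:
    """Test double that fails every call, simulating an injected fault."""

    def __init__(self) -> None:
        self.calls = 0

    def stock_level(self, sku: str) -> int:
        self.calls += 1
        raise ConnectionError("injected fault")


def check_stock(client, sku: str, max_attempts: int = 3) -> int:
    for _ in range(max_attempts):
        try:
            return client.stock_level(sku)
        except ConnectionError:
            continue  # retry, but only up to max_attempts
    raise InventoryUnavailable(sku)


class FaultInjectionTest(unittest.TestCase):
    def test_persistent_fault_surfaces(self) -> None:
        client = FlakyInventoryClient()
        with self.assertRaises(InventoryUnavailable):
            check_stock(client, "sku-42")
        # The retry budget was used in full and then the error was raised.
        self.assertEqual(client.calls, 3)
```

Saved to a file, this can be run with `python -m unittest`. A version of `check_stock` that returned a default such as `0` after the last attempt would fail the test, which is exactly the masking behavior it is meant to catch.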

Property-based testing and fuzzing techniques generate unexpected inputs that exercise error handling paths developers might not anticipate. These approaches often reveal cases where error handling code itself contains bugs, creating double-masking scenarios where failures in error detection logic hide the original problems.

The Path Forward: Embracing Transparent Failure Management

As software systems continue growing in complexity, the challenge of error masking will intensify without conscious effort to combat it. Organizations must recognize that apparent stability achieved through aggressive error suppression represents a liability, not an asset. The goal isn’t eliminating errors—which remains impossible in complex systems—but making them visible, understandable, and manageable before they reach critical thresholds.

Investment in observability infrastructure, chaos engineering practices, and engineering culture pays dividends by transforming how teams relate to failures. Rather than viewing errors as shameful events to hide, mature organizations treat them as valuable signals indicating opportunities for improvement. This mindset shift enables proactive problem resolution rather than reactive crisis management.

💡 Transforming Hidden Risks Into Visible Opportunities

The journey from error masking to error visibility requires sustained commitment across technical and organizational dimensions. Engineering teams must balance legitimate needs for fault tolerance with equally important requirements for problem detection. Monitoring systems should evolve beyond simple availability checks toward nuanced understanding of system behavior patterns. Most critically, cultures must reward transparency and early problem identification rather than punishing visible failures.

Organizations that successfully navigate this transformation discover competitive advantages in reliability, operational efficiency, and customer trust. Systems that explicitly acknowledge and manage errors prove more resilient than those that mask problems until catastrophic failures emerge. The hidden glitches that once lurked beneath layers of defensive programming become visible challenges that teams address systematically, preventing accumulation toward critical thresholds.

By unveiling hidden glitches before they escalate, software organizations transform potential disasters into manageable incidents, replacing reactive firefighting with proactive system stewardship. This shift doesn’t eliminate failures—it makes them opportunities for continuous improvement rather than existential threats.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically-focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge.

As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits
- The strategic value of Decision Support Effectiveness
- The cascading dangers of Error Amplification Risks
- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.