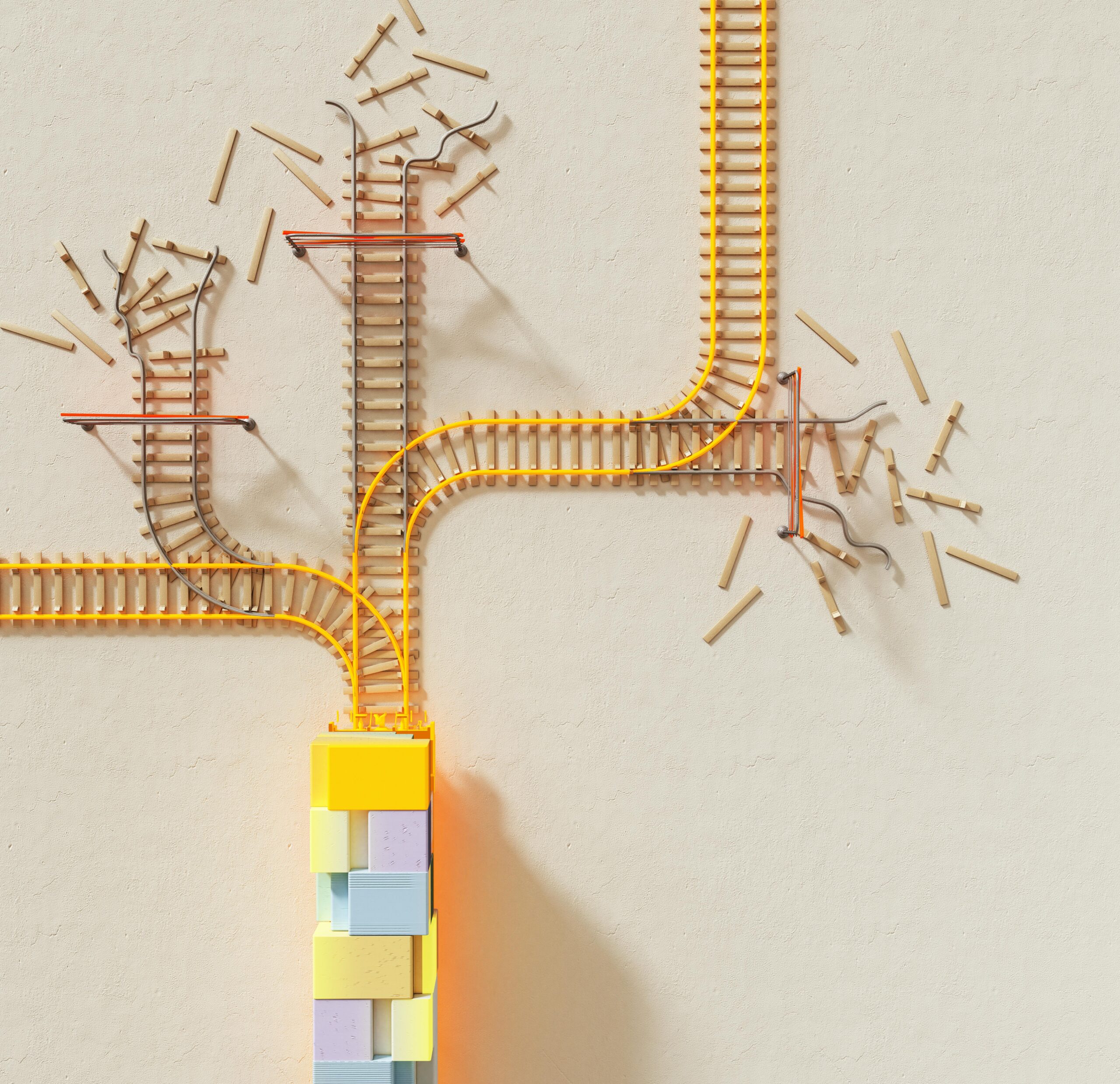

Precision errors tied to scale can silently undermine business performance, causing costly mistakes and missed opportunities that accumulate over time.

In today’s data-driven landscape, organizations face a persistent challenge: maintaining accuracy as operations expand. Scale-related errors represent one of the most overlooked yet critical threats to business success, affecting everything from financial reporting to customer satisfaction. These errors don’t just add up; left uncorrected, they feed into downstream calculations and create cascading effects that can destabilize entire systems.

Understanding how scale impacts precision isn’t merely an academic exercise. It’s a fundamental requirement for any organization aspiring to sustainable growth. Whether you’re managing inventory across multiple warehouses, processing millions of transactions, or coordinating global supply chains, the relationship between scale and accuracy demands your immediate attention.

🎯 The Hidden Cost of Scale-Related Inaccuracies

When businesses grow, their systems must process far more information. A simple rounding error that costs pennies at small scale can translate into millions in losses when multiplied across vast operations. In financial services, fractional-cent discrepancies repeated across millions of transactions can lead to regulatory fines and reputational damage.

Manufacturing facilities experience similar challenges. A measurement deviation of 0.1 millimeters might seem negligible for a single product, but when producing 100,000 units daily, that tiny variance can result in thousands of defective items, warranty claims, and customer dissatisfaction.

The psychology of scale-related errors makes them particularly dangerous. Because individual instances appear insignificant, teams often dismiss early warning signs until problems become catastrophic. This normalization of minor deviations creates a culture where precision gradually deteriorates.

Understanding the Mathematics Behind Scale Errors

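Scale-related errors typically follow predictable mathematical patterns. A relative error, such as a percentage miscalculation, scales with the value of each transaction, so its total grows with the value processed. An absolute error, such as a fixed rounding loss per operation, adds a constant amount each time, so its total grows with the number of operations. Errors that feed back into later calculations can compound faster than either. The distinction matters tremendously for error mitigation strategies.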

Consider an error rate of just 0.5%. At 100 transactions, fewer than one transaction is affected on average. At 10,000 transactions, about 50 are, and the cumulative effect becomes noticeable. At 1,000,000 transactions, about 5,000 are, and it represents a crisis. The rate never changes, but the absolute impact grows in direct proportion to volume, which means the tolerable error rate must actually decrease as scale increases: counterintuitive but mathematically necessary.
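As a rough worked example of this arithmetic, the sketch below computes the expected number of affected transactions at a fixed 0.5% rate, along with the rate that would be needed to hold the absolute error count constant as volume grows. The function names and the budget of 50 errors are illustrative assumptions, not figures from any real system.

```python
def expected_errors(transactions: int, error_rate: float) -> float:
    """Expected number of affected transactions at a fixed error rate."""
    return transactions * error_rate


def max_tolerable_rate(transactions: int, error_budget: float) -> float:
    """Error rate that keeps the expected error count within a fixed budget."""
    return error_budget / transactions


for volume in (100, 10_000, 1_000_000):
    errors = expected_errors(volume, 0.005)
    rate = max_tolerable_rate(volume, error_budget=50)  # hypothetical budget
    print(f"{volume:>9,} txns: ~{errors:,.1f} errors at 0.5%; "
          f"rate needed for <= 50 errors: {rate:.4%}")
```

The expected error count grows in step with volume, while the rate that fits a fixed budget shrinks by the same factor.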

Floating-point arithmetic presents another mathematical challenge. Binary floating-point formats cannot represent most decimal fractions (0.1, for example) exactly, so small representation errors enter calculations and can accumulate. While programmers understand this conceptually, the practical implications at enterprise scale often catch organizations unprepared. Financial software typically relies on decimal or fixed-point arithmetic, such as storing amounts as integer minor units, to avoid these pitfalls.
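A minimal sketch of the effect, using Python's standard `decimal` module: the same one million ten-cent amounts are accumulated once with binary floats and once with `Decimal`. The transaction count and amount are made up for illustration.

```python
from decimal import Decimal

N = 1_000_000  # number of ten-cent transactions (illustrative)

# Accumulate with binary floating point: 0.10 has no exact binary representation,
# so each addition can introduce a tiny rounding error.
float_total = 0.0
for _ in range(N):
    float_total += 0.10

# Accumulate with decimal arithmetic: 0.10 is represented exactly.
decimal_total = Decimal("0.00")
for _ in range(N):
    decimal_total += Decimal("0.10")

print(f"float   total: {float_total!r}")
print(f"decimal total: {decimal_total}")
print(f"float drift  : {float_total - 100_000:.3e}")
```

The loop is written out explicitly because the built-in `sum()` in CPython 3.12 and later uses compensated summation for floats, which would mask the drift this example is meant to show.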

⚙️ Common Sources of Scale-Related Precision Problems

Identifying where scale-related errors originate is the first step toward eliminating them. Several categories account for the majority of precision problems in growing organizations.

Data Type Limitations and Overflow Issues

Software systems impose hard limits on number sizes. A 16-bit signed integer tops out at 32,767 and a 32-bit signed integer at roughly 2.1 billion, so a field sized comfortably for thousands of records can overflow once volumes reach millions or billions. Developers working with small datasets during testing may never discover these boundaries until production systems fail under real-world loads.
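Python integers do not overflow on their own, so the sketch below uses `ctypes.c_int32` to mimic a fixed-width 32-bit signed field, then contrasts it with an explicit range check. The helper names `store_unchecked` and `store_checked` are hypothetical.

```python
import ctypes

INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def store_unchecked(value: int) -> int:
    """Mimic a 32-bit signed field that silently wraps on overflow."""
    return ctypes.c_int32(value).value


def store_checked(value: int) -> int:
    """Reject values that a 32-bit signed field cannot hold."""
    if not INT32_MIN <= value <= INT32_MAX:
        raise OverflowError(f"{value} does not fit in a 32-bit signed integer")
    return value


print(store_unchecked(2_000_000_000))  # fits, stored unchanged
print(store_unchecked(3_000_000_000))  # wraps around to a negative number
try:
    store_checked(3_000_000_000)
except OverflowError as exc:
    print(f"rejected: {exc}")
```

Failing loudly at the boundary is usually preferable to a wrapped value that looks like valid data.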

Date and timestamp precision creates similar issues. Systems tracking events to the second work perfectly until transaction volumes require millisecond or microsecond precision. Upgrading these fundamental data structures after deployment proves remarkably complex and expensive.
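To illustrate what is lost when timestamp resolution is too coarse, the sketch below generates eight hypothetical events 250 ms apart and counts how many remain distinguishable once they are truncated to whole seconds.

```python
from datetime import datetime, timedelta

# Eight hypothetical events arriving 250 ms apart.
start = datetime(2024, 1, 1, 12, 0, 0)
events = [start + timedelta(milliseconds=250 * i) for i in range(8)]


def distinct_at_second_resolution(timestamps: list[datetime]) -> int:
    """Count distinct timestamps after truncating to whole seconds."""
    return len({ts.replace(microsecond=0) for ts in timestamps})


print(f"events recorded:              {len(events)}")
print(f"distinct at second precision: {distinct_at_second_resolution(events)}")
print(f"distinct at full precision:   {len(set(events))}")
```

Once events collapse onto the same stored value, their original order cannot be recovered from the timestamp alone.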

Measurement Instrument Calibration

Physical measurement tools require regular calibration, but calibration intervals often remain static even as usage intensity increases. A scale calibrated quarterly might suffice for 50 measurements daily but proves inadequate for 500. Wear, environmental factors, and systematic drift accelerate with increased usage.

IoT sensors distributed across large-scale operations face coordination challenges. Even when individually calibrated, ensuring synchronized accuracy across thousands of devices demands sophisticated validation systems that many organizations lack.

Human Error Amplification

Manual data entry errors remain stubbornly persistent. A single transposed digit causes minimal damage in isolation, but systematic entry errors across large datasets create statistical biases that distort analytics and decision-making. As operations scale, the absolute number of human touchpoints increases, multiplying error opportunities.

Training inconsistencies compound these problems. Standard operating procedures that work with a team of five become ambiguous when scaled to fifty employees across multiple shifts and locations. Interpretation variations introduce systematic errors disguised as random noise.

📊 Building Robust Error Detection Systems

Effective precision management requires proactive detection mechanisms that identify problems before they cascade. Waiting for errors to surface through customer complaints or financial discrepancies proves far too expensive.

Statistical process control adapted from manufacturing provides powerful tools for monitoring accuracy at scale. Control charts track key metrics over time, automatically flagging when measurements deviate beyond expected ranges. These systems distinguish between normal variation and genuine precision problems.
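A simplified sketch of the control-chart idea follows. It derives limits of three standard deviations around the mean of a baseline sample and flags later readings that fall outside them. The readings are invented, and a production chart would more likely estimate spread from moving ranges or subgroups than from a single sample standard deviation.

```python
from statistics import mean, stdev


def control_limits(baseline: list[float], sigmas: float = 3.0) -> tuple[float, float]:
    """Return (lower, upper) limits at `sigmas` standard deviations from the mean."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - sigmas * spread, center + sigmas * spread


def out_of_control(samples: list[float], limits: tuple[float, float]) -> list[tuple[int, float]]:
    """Return (index, value) pairs for samples outside the control limits."""
    lower, upper = limits
    return [(i, x) for i, x in enumerate(samples) if not lower <= x <= upper]


# Hypothetical in-control readings used to establish the limits.
baseline = [10.02, 9.98, 10.01, 9.99, 10.00, 10.03, 9.97, 10.00]
limits = control_limits(baseline)

new_readings = [10.01, 9.99, 10.12, 10.00]
flags = out_of_control(new_readings, limits)
print(f"limits: {limits[0]:.3f} .. {limits[1]:.3f}")
print(f"flagged: {flags}")
```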

Automated reconciliation processes compare data across systems continuously. Discrepancies between inventory records and physical counts, or between subsidiary ledgers and general ledgers, signal precision issues requiring investigation. Modern reconciliation engines process millions of comparisons hourly, catching errors humans would miss.
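The core of a reconciliation pass can be sketched as a keyed comparison between two record sets. The account identifiers, balances, and the `reconcile` helper below are hypothetical; a real engine would also handle timing differences, partial matches, and much larger volumes.

```python
from decimal import Decimal
from typing import Optional

Ledger = dict[str, Decimal]
Mismatch = tuple[Optional[Decimal], Optional[Decimal]]


def reconcile(a: Ledger, b: Ledger, tolerance: Decimal = Decimal("0")) -> dict[str, Mismatch]:
    """Return entries that are missing on one side or differ by more than `tolerance`."""
    discrepancies: dict[str, Mismatch] = {}
    for key in sorted(a.keys() | b.keys()):
        left, right = a.get(key), b.get(key)
        if left is None or right is None or abs(left - right) > tolerance:
            discrepancies[key] = (left, right)
    return discrepancies


general_ledger = {"ACC-1": Decimal("100.00"), "ACC-2": Decimal("250.10"), "ACC-3": Decimal("75.00")}
subsidiary_ledger = {"ACC-1": Decimal("100.00"), "ACC-2": Decimal("250.01"), "ACC-4": Decimal("12.00")}

for account, (left, right) in reconcile(general_ledger, subsidiary_ledger).items():
    print(f"{account}: general={left} subsidiary={right}")
```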

Implementing Multi-Layer Validation

Single-point validation fails at scale. Robust systems employ multiple independent verification methods:

- Input validation: Verify data meets expected formats and ranges before processing

- Process validation: Confirm intermediate calculations produce logically consistent results

- Output validation: Check final results against independent sources or benchmark expectations

- Audit trails: Maintain detailed logs enabling retrospective error analysis

- Cross-system validation: Compare results across independent systems as mutual verification

This defense-in-depth approach ensures that errors escaping one validation layer get caught by subsequent checks. While implementing multiple validation stages requires investment, the cost proves trivial compared to large-scale accuracy failures.
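The first three layers in the list above might look like the following for a simple order total. Everything here (the function names, the tax rule, the one-cent tolerance, and the benchmark figure) is an assumption chosen for illustration rather than a prescribed design.

```python
from decimal import Decimal


def validate_input(quantity: int, unit_price: Decimal) -> None:
    """Layer 1: reject values outside expected formats and ranges."""
    if not isinstance(quantity, int) or quantity <= 0:
        raise ValueError(f"quantity must be a positive integer, got {quantity!r}")
    if not isinstance(unit_price, Decimal) or unit_price < 0:
        raise ValueError(f"unit_price must be a non-negative Decimal, got {unit_price!r}")


def compute_total(quantity: int, unit_price: Decimal, tax_rate: Decimal) -> Decimal:
    """Compute an order total, checking intermediate values along the way."""
    validate_input(quantity, unit_price)
    subtotal = quantity * unit_price
    tax = (subtotal * tax_rate).quantize(Decimal("0.01"))
    # Layer 2: intermediate results must be logically consistent.
    if not Decimal("0") <= tax <= subtotal:
        raise ArithmeticError(f"implausible tax {tax} for subtotal {subtotal}")
    return subtotal + tax


def validate_output(total: Decimal, independent_total: Decimal,
                    tolerance: Decimal = Decimal("0.01")) -> None:
    """Layer 3: compare the result with an independently obtained figure."""
    if abs(total - independent_total) > tolerance:
        raise ArithmeticError(f"total {total} disagrees with {independent_total}")


total = compute_total(3, Decimal("19.99"), Decimal("0.08"))
validate_output(total, independent_total=Decimal("64.77"))  # hypothetical benchmark
print(total)
```

Each layer raises on its own, so a failure report indicates which check caught the problem.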

🔧 Practical Strategies for Maintaining Precision at Scale

Theory alone doesn’t solve precision problems. Organizations need concrete strategies implementable within real-world constraints of budget, time, and existing infrastructure.

Architectural Approaches to Accuracy

System architecture profoundly influences precision capabilities. Microservices architectures enable isolated testing of individual components, making it easier to verify accuracy before integration. Monolithic systems can hide precision problems within complex interdependencies.

Database design choices matter significantly. Using appropriate data types—decimal rather than floating-point for financial data, timestamp types supporting required precision levels—prevents entire categories of errors. These decisions made during initial development prove extraordinarily difficult to change later.

Redundancy and consensus mechanisms borrowed from distributed systems theory provide accuracy safeguards. Processing critical calculations through multiple independent paths and comparing results catches computational errors, hardware failures, and software bugs before they corrupt downstream systems.
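One way to sketch the multiple-path idea is to compute the same total through two implementations that share as little logic as possible and refuse to proceed if they disagree. Both paths and the `cross_check` helper are hypothetical; the integer-cents path assumes non-negative amounts with at most two decimal places.

```python
from decimal import Decimal
from typing import Callable, Sequence


def total_via_decimal(amounts: Sequence[str]) -> Decimal:
    """Path A: sum the amounts using decimal arithmetic."""
    return sum((Decimal(a) for a in amounts), Decimal("0"))


def total_via_integer_cents(amounts: Sequence[str]) -> Decimal:
    """Path B: parse each amount into integer cents and sum those."""
    cents = 0
    for amount in amounts:
        whole, _, fraction = amount.partition(".")
        cents += int(whole) * 100 + int((fraction + "00")[:2])
    return Decimal(cents) / 100


def cross_check(paths: Sequence[Callable[[Sequence[str]], Decimal]],
                amounts: Sequence[str]) -> Decimal:
    """Run every path and return the result only if all paths agree."""
    results = [path(amounts) for path in paths]
    if any(r != results[0] for r in results[1:]):
        raise ArithmeticError(f"independent paths disagree: {results}")
    return results[0]


amounts = ["10.10", "20.20", "0.05"]
agreed_total = cross_check([total_via_decimal, total_via_integer_cents], amounts)
print(agreed_total)
```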

Calibration and Maintenance Protocols

Establishing dynamic calibration schedules based on usage intensity rather than calendar time improves measurement accuracy. Sensors and instruments that request calibration automatically, either after a set number of measurements or when self-diagnostics detect drift, stay accurate without depending on a fixed calendar.
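A usage-based trigger of this kind could be sketched as below. The `Instrument` class, its thresholds, and the drift readings are all hypothetical; real instruments expose usage counters and diagnostics in their own ways.

```python
from dataclasses import dataclass


@dataclass
class Instrument:
    """Hypothetical instrument that tracks usage and self-reported drift."""
    max_measurements: int = 1_000   # assumed usage limit between calibrations
    max_drift: float = 0.05         # assumed drift limit, in measurement units
    measurements_since_calibration: int = 0
    last_drift: float = 0.0

    def record(self, drift: float) -> None:
        """Register one measurement and the drift reported by self-diagnostics."""
        self.measurements_since_calibration += 1
        self.last_drift = drift

    def needs_calibration(self) -> bool:
        """True once either the usage limit or the drift limit is reached."""
        return (self.measurements_since_calibration >= self.max_measurements
                or abs(self.last_drift) > self.max_drift)

    def calibrate(self) -> None:
        """Reset the counters after a calibration event."""
        self.measurements_since_calibration = 0
        self.last_drift = 0.0


scale = Instrument(max_measurements=500)
for _ in range(500):
    scale.record(drift=0.01)
print(scale.needs_calibration())  # usage limit reached

scale.calibrate()
scale.record(drift=0.08)
print(scale.needs_calibration())  # drift limit exceeded after one measurement
```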

Documentation systems tracking every calibration event, including environmental conditions and technician notes, enable pattern analysis revealing systematic issues. This metadata often proves more valuable than the calibration itself for long-term accuracy improvement.

💡 Cultural Transformation for Precision Excellence

Technology alone cannot solve scale-related accuracy problems. Organizational culture must prioritize precision as a core value, not merely a technical requirement.

Leadership sets the tone by responding decisively when accuracy issues surface. Organizations that punish error reporting drive problems underground, while those rewarding transparency and rapid correction foster continuous improvement. Psychological safety enables frontline employees to flag concerns before they escalate.

Precision metrics should feature prominently in performance dashboards alongside traditional business metrics. What gets measured gets managed—making accuracy visible ensures it receives appropriate attention and resources.

Training and Knowledge Management

Comprehensive training programs scaled appropriately prevent human-introduced errors. But traditional training often fails at scale due to inconsistent delivery and retention problems. Modern approaches include:

- Microlearning modules addressing specific precision-critical tasks

- Simulation environments allowing practice without real-world consequences

- Just-in-time training delivered exactly when employees need specific knowledge

- Competency verification through practical assessments rather than passive knowledge tests

- Continuous refresher training automated based on error patterns

Knowledge management systems capturing tribal knowledge about common precision pitfalls prevent information loss during employee turnover. These systems should emphasize practical troubleshooting rather than theoretical understanding.

🚀 Leveraging Technology for Accuracy Enhancement

Emerging technologies offer new capabilities for maintaining precision at scales that were previously impractical. Artificial intelligence and machine learning can detect subtle accuracy patterns that humans are likely to miss.

Anomaly detection algorithms learn normal patterns in data streams, automatically flagging statistical outliers suggesting precision problems. These systems adapt continuously, becoming more sophisticated as they process additional data.
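As a deliberately simple stand-in for a learned anomaly detector, the sketch below flags outliers with the modified z-score, which is built on the median and the median absolute deviation (MAD). The 3.5 cutoff is a commonly cited rule of thumb, the sample values are invented, and unlike the adaptive systems described above this version does not update itself over time.

```python
from statistics import median


def mad_outliers(values: list[float], threshold: float = 3.5) -> list[tuple[int, float]]:
    """Return (index, value) pairs whose modified z-score exceeds `threshold`."""
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return []  # no spread to measure against; nothing can be flagged
    return [
        (i, v) for i, v in enumerate(values)
        if 0.6745 * abs(v - center) / mad > threshold
    ]


# Hypothetical stream of readings with one obviously bad value.
readings = [100, 101, 99, 100, 102, 98, 100, 150]
print(mad_outliers(readings))
```

Median-based statistics are used here because a single extreme value barely moves them, whereas it can inflate a mean and standard deviation enough to hide itself.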

Blockchain technology provides tamper-evident audit trails that help establish data provenance and expose unauthorized modifications. It does not make the recorded data correct; it only makes later alteration hard to conceal. While blockchain involves overhead, applications requiring strong integrity verification (pharmaceutical supply chains, financial transactions, legal records) may justify the investment.
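The tamper-evidence property rests on hash chaining, which can be sketched without any distributed ledger. The example below is only a single-process hash chain built on `hashlib.sha256`, not a blockchain; the entry layout and helper names are assumptions made for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], payload: dict) -> None:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "payload": payload, "hash": _digest(prev_hash, payload)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"event": "calibrated", "device": "scale-7"})
append_entry(audit_log, {"event": "measured", "device": "scale-7", "grams": 502})
print(verify(audit_log))

audit_log[0]["payload"]["device"] = "scale-9"  # simulate tampering
print(verify(audit_log))
```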

Digital twins—virtual replicas of physical systems—enable precision testing at scale without real-world risks. Manufacturers simulate millions of production runs, stress-testing measurement systems and identifying accuracy failure modes before physical implementation.

📈 Measuring and Improving Your Precision Performance

Systematic measurement reveals whether precision initiatives actually improve accuracy. Organizations should track several key performance indicators:

| Metric | Definition | Target |
|---|---|---|
| Error Rate | Percentage of transactions containing accuracy errors | < 0.1% |
| Mean Time to Detection | Average duration between error occurrence and identification | < 1 hour |
| Error Cost | Financial impact of accuracy failures per period | Decreasing trend |
| Correction Time | Average duration to resolve identified accuracy issues | < 4 hours |
| Repeat Error Rate | Percentage of errors representing previously solved problems | < 5% |
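Two of these indicators can be computed directly from incident records, as in the sketch below. The record layout, in which each incident is a pair of occurrence and detection times, and the sample figures are assumptions made for illustration.

```python
from datetime import datetime, timedelta


def error_rate_percent(error_count: int, transaction_count: int) -> float:
    """Percentage of transactions containing accuracy errors."""
    return 100 * error_count / transaction_count


def mean_time_to_detection(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average delay between each error's occurrence and its detection."""
    delays = [detected - occurred for occurred, detected in incidents]
    return sum(delays, timedelta()) / len(delays)


# Hypothetical incidents as (occurred, detected) pairs.
incidents = [
    (datetime(2024, 3, 1, 12, 0), datetime(2024, 3, 1, 12, 30)),
    (datetime(2024, 3, 2, 14, 0), datetime(2024, 3, 2, 15, 10)),
]

rate = error_rate_percent(error_count=7, transaction_count=10_000)
mttd = mean_time_to_detection(incidents)
print(f"error rate: {rate:.2f}% (target < 0.1%)")
print(f"mean time to detection: {mttd} (target < 1 hour)")
```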

Benchmarking against industry standards provides context for internal metrics. While absolute accuracy requirements vary by sector, improvement trajectories should show consistent progress regardless of starting point.

Root cause analysis following significant accuracy failures prevents recurrence. Effective analysis goes beyond immediate causes to identify systemic factors enabling errors. Implementing corrective actions at the systemic level prevents entire categories of future problems.

🌐 Scaling Precision Across Global Operations

Multinational organizations face additional precision challenges stemming from geographic distribution. Time zones, currencies, measurement systems, and regulatory requirements all complicate accuracy maintenance.

Currency conversion introduces rounding errors that accumulate across millions of transactions. Sophisticated treasury management systems minimize these losses through netting, natural hedges, and optimal conversion timing, but small businesses often overlook these techniques.
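The accumulation is easy to see in a small worked example: rounding each converted transaction to the cent and then summing gives a different total from converting the aggregate and rounding once. The exchange rate, amount, and volume below are made up.

```python
from decimal import Decimal, ROUND_HALF_EVEN

CENT = Decimal("0.01")
rate = Decimal("0.9234")   # hypothetical exchange rate
amount = Decimal("1.00")   # value of each transaction in the source currency
count = 1_000_000          # number of identical transactions

# Round every converted transaction to the cent, then total.
per_transaction = (amount * rate).quantize(CENT, rounding=ROUND_HALF_EVEN)
rounded_each_time = per_transaction * count

# Convert the aggregate and round once.
rounded_once = (amount * rate * count).quantize(CENT, rounding=ROUND_HALF_EVEN)

drift = rounded_once - rounded_each_time
print(f"rounded per transaction: {rounded_each_time}")
print(f"rounded once:            {rounded_once}")
print(f"accumulated difference:  {drift}")
```

Identical transactions are the worst case because every rounding error has the same sign; with varied amounts, half-even rounding lets many of the individual errors cancel.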

Measurement system inconsistencies between metric and imperial units cause notorious errors. NASA’s Mars Climate Orbiter was lost in 1999 because one piece of software reported impulse in pound-force seconds while another expected newton-seconds. Standardizing on a single measurement system wherever possible eliminates this error source entirely.
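One hedged way to enforce a single internal unit in code is to wrap quantities in a type that converts at the boundary and stores only one unit. The `Impulse` class below is a hypothetical illustration; the conversion factor follows from the exact definition of the pound-force.

```python
from dataclasses import dataclass

# One pound-force second expressed in newton-seconds
# (0.45359237 kg * 9.80665 m/s^2, from the definition of the pound-force).
N_S_PER_LBF_S = 4.4482216152605


@dataclass(frozen=True)
class Impulse:
    """Impulse stored internally in newton-seconds only."""
    newton_seconds: float

    @classmethod
    def from_newton_seconds(cls, value: float) -> "Impulse":
        return cls(value)

    @classmethod
    def from_pound_force_seconds(cls, value: float) -> "Impulse":
        return cls(value * N_S_PER_LBF_S)

    def __add__(self, other: "Impulse") -> "Impulse":
        return Impulse(self.newton_seconds + other.newton_seconds)


# Callers must state the unit they are supplying; bare numbers are never mixed.
total = Impulse.from_newton_seconds(10.0) + Impulse.from_pound_force_seconds(2.0)
print(f"{total.newton_seconds:.4f} N*s")
```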

Regulatory compliance requirements vary internationally, sometimes demanding different precision levels for similar activities. Global companies must implement flexible systems supporting multiple accuracy standards simultaneously without cross-contamination.

Transforming Precision Challenges into Competitive Advantages

Organizations mastering scale-related accuracy don’t just avoid problems—they create differentiated value. Superior precision enables tighter margins, faster operations, and enhanced customer trust.

Manufacturing companies achieving exceptional measurement accuracy produce higher-quality products with less waste. This dual benefit—better output at lower cost—creates sustainable competitive moats difficult for rivals to replicate.

Financial services firms with superior transaction accuracy reduce reconciliation costs, accelerate reporting, and minimize regulatory exposure. These operational advantages translate directly to profitability improvements and strategic flexibility.

Retail operations maintaining precise inventory accuracy optimize stock levels, reducing both stockouts and excess inventory. The resulting cash flow improvements and customer satisfaction gains compound over time.

🎓 Building Organizational Expertise in Precision Management

Developing internal expertise ensures that precision improvements are sustained over the long term. Relying exclusively on external consultants creates dependency without building institutional capability.

Establishing centers of excellence dedicated to accuracy brings together expertise from across the organization. These teams develop standards, provide consulting to business units, and drive continuous improvement initiatives. Cross-functional composition ensures practical solutions rather than theoretical ideals.

Certification programs for precision-critical roles ensure consistent competency. These programs should include both theoretical knowledge and practical assessments demonstrating real-world capability. Regular recertification maintains skills as technology and best practices evolve.

Creating communities of practice enables practitioners to share experiences and solutions. Whether through internal forums, regular meetings, or collaboration platforms, facilitating knowledge exchange accelerates organizational learning beyond what formal training alone achieves.

Future-Proofing Your Precision Capabilities

Technology and business environments continue evolving rapidly, requiring adaptable precision management approaches. Building flexibility into systems prevents obsolescence as requirements change.

Quantum computing promises revolutionary computational capabilities but introduces new precision considerations. Organizations should monitor quantum developments relevant to their industries, preparing for eventual integration even if practical applications remain years away.

Edge computing architectures push processing closer to data sources, enabling real-time accuracy verification. This distributed approach reduces latency and bandwidth requirements while improving precision through immediate validation.

Augmented reality systems assisting human workers in precision-critical tasks combine human judgment with computational accuracy. These hybrid approaches leverage complementary strengths while mitigating respective weaknesses.

The journey toward mastering precision at scale never truly ends. As organizations grow and technologies evolve, new accuracy challenges emerge requiring continued vigilance and innovation. However, companies establishing strong foundations in error detection, systematic improvement, and precision-oriented culture position themselves for sustainable success regardless of future uncertainties. The investment in accuracy capabilities pays compound returns, preventing costly failures while enabling competitive advantages that drive long-term prosperity. Scale and precision need not be opposing forces—with proper attention and systematic approaches, they become mutually reinforcing elements of organizational excellence.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically-focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits

- The strategic value of Decision Support Effectiveness

- The cascading dangers of Error Amplification Risks

- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.