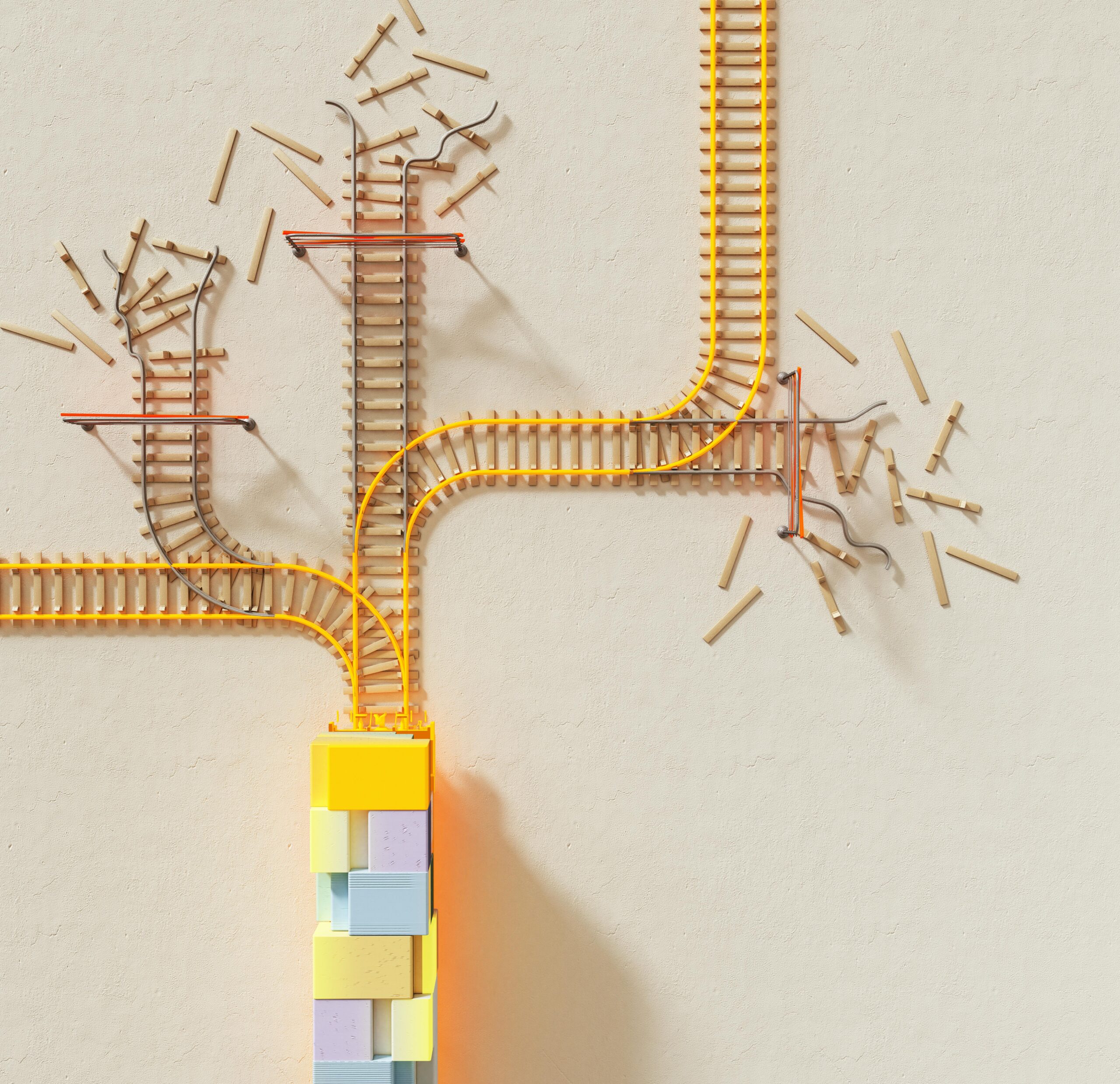

When systems fail, the culprit is often hiding in plain sight: reused code carrying hidden errors that multiply across your infrastructure faster than you can debug them.

🔄 The Hidden Cost of Copy-Paste Culture in Modern Development

Software development has always celebrated the principle of “Don’t Repeat Yourself” (DRY), encouraging developers to write code once and reuse it everywhere. While this approach promises efficiency and consistency, it harbors a dangerous side effect that most teams discover too late. When errors embed themselves in reusable components, they don’t just affect one system—they propagate across every application, service, and platform that depends on that code.

This phenomenon creates what might be called “error multiplication,” where a single flawed function, library, or design pattern becomes a vector for widespread system failures. The more we reuse, the more we risk amplifying our mistakes. Understanding this paradox is the first step toward building truly resilient systems that balance efficiency with reliability.

The software industry loses billions annually to bugs that originated in shared codebases, library dependencies, and template systems. These aren’t random errors—they’re systematic failures that spread through the very mechanisms we created to improve our workflow. The question isn’t whether to reuse code, but how to reuse it intelligently while preventing error replication from undermining your entire infrastructure.

🧬 Understanding the DNA of Error Replication

Error replication follows predictable patterns that mirror biological systems. Just as genetic mutations can spread through populations, coding errors propagate through software ecosystems with remarkable efficiency. The mechanism is deceptively simple: developers copy code snippets from Stack Overflow, clone repositories as project templates, or inherit from base classes without fully understanding their internal logic.

Each reuse event creates an opportunity for error amplification. A misconfigured security setting in a shared authentication library might expose hundreds of applications to vulnerabilities. An off-by-one error in a utility function could corrupt data across multiple databases. A race condition in a commonly imported module might cause intermittent failures that are nearly impossible to diagnose.

The replication cycle accelerates in environments that prioritize speed over scrutiny. Agile methodologies and rapid deployment schedules often pressure developers to implement working solutions quickly, discouraging the deep code reviews that might catch inherited errors. Microservices architectures, while offering scalability benefits, create numerous reuse touchpoints where errors can jump between services.

The Three Vectors of Error Transmission

Errors don’t spread randomly—they follow specific pathways through your development ecosystem. The first vector is direct code copying, where developers literally duplicate functions, classes, or entire modules without modification. This creates perfect clones of both functionality and flaws, distributing bugs with mathematical precision across your codebase.

The second vector operates through dependency chains. Modern applications rely on dozens or hundreds of external libraries, each with its own dependencies. The Log4Shell vulnerability in the widely used Log4j logging library showed how quickly a single flaw can cascade through this interconnected network and expose millions of systems at once.

The third vector is conceptual replication, where developers recreate flawed patterns they’ve learned from documentation, tutorials, or established practices. This is perhaps the most insidious form because the error lives in the mental model rather than the code itself, causing developers to independently generate the same mistakes across different projects.

💡 Recognizing the Warning Signs Before Disaster Strikes

Smart teams develop an instinct for spotting error replication before it metastasizes throughout their systems. The first red flag appears when multiple applications exhibit identical failure modes. If three different services crash with the same stack trace, you’re likely dealing with a shared component harboring a latent bug.

Another telltale sign emerges during code reviews when you experience déjà vu—that unsettling feeling that you’ve seen this exact bug before. Your intuition is correct: the error pattern has already appeared elsewhere in your codebase, indicating a common source that needs immediate attention.

Performance metrics can also reveal replication issues. When similar applications show consistent performance degradation at specific load thresholds, the problem likely originates in shared infrastructure or commonly used algorithms that don’t scale properly.

Building Your Error Detection Radar

Effective detection requires both technical tools and cultural practices. Static analysis tools can scan codebases for duplicate code segments, highlighting areas where errors might spread. These tools calculate metrics like code duplication percentage and cyclomatic complexity to identify high-risk reuse patterns.
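To make the idea of duplicate detection concrete, here is a minimal sketch of how a clone detector might work: it hashes every run of consecutive non-blank lines and reports runs that appear in more than one place. The function names, the window size of four lines, and the sample files are assumptions made for illustration; real static analysis tools use more robust techniques such as token or syntax-tree comparison.

```python
import hashlib
from collections import defaultdict


def normalize(line: str) -> str:
    """Collapse whitespace so indentation differences do not hide clones."""
    return " ".join(line.split())


def find_duplicate_blocks(files: dict[str, str], window: int = 4) -> list:
    """Return groups of (filename, start_line) that share an identical run
    of `window` consecutive non-blank lines."""
    seen = defaultdict(list)
    for name, text in files.items():
        # Keep the original 1-based line number next to each normalized line.
        numbered = [(i, normalize(raw)) for i, raw in enumerate(text.splitlines(), 1)]
        numbered = [(i, line) for i, line in numbered if line]
        for k in range(len(numbered) - window + 1):
            chunk = "\n".join(line for _, line in numbered[k:k + window])
            digest = hashlib.sha256(chunk.encode("utf-8")).hexdigest()
            seen[digest].append((name, numbered[k][0]))
    return [locations for locations in seen.values() if len(locations) > 1]


# Hypothetical files: b.py contains a re-indented copy of the function in a.py.
sample_files = {
    "a.py": "def f(x):\n    y = x + 1\n    z = y * 2\n    return z\n",
    "b.py": "import os\n\ndef f(x):\n  y = x + 1\n  z = y * 2\n  return z\n",
}
duplicates = find_duplicate_blocks(sample_files)
```

Each group in `duplicates` is a set of locations that would all need the same fix if the copied block turned out to contain a bug.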

Automated testing becomes crucial when dealing with reused components. Comprehensive test suites should exercise shared code through multiple usage scenarios, catching edge cases that might not appear in isolated testing. Integration tests specifically validate that components behave correctly when assembled into larger systems.

Cultural awareness matters equally. Teams should establish conventions for marking code that’s been copied or adapted from other sources, creating an audit trail that simplifies bug tracking. Regular architectural reviews help identify when reuse patterns are creating unhealthy dependencies or single points of failure.
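One lightweight way to build such an audit trail is a comment marker that a script can search for. The sketch below assumes a hypothetical team convention, `# COPIED-FROM: <source>`, which is not a standard of any tool; the marker name and the sample file are illustrative only.

```python
import re

# Hypothetical convention: copied code carries a comment such as
#   # COPIED-FROM: shared/dates.py@abc123
MARKER = re.compile(r"#\s*COPIED-FROM:\s*(?P<source>.+?)\s*$")


def find_copied_code(files: dict[str, str]) -> list[tuple[str, int, str]]:
    """Return (filename, line_number, source) for every marker found."""
    hits = []
    for name, text in files.items():
        for lineno, line in enumerate(text.splitlines(), 1):
            match = MARKER.search(line)
            if match:
                hits.append((name, lineno, match.group("source")))
    return hits


# Illustrative input: one file that declares where its code came from.
example = {
    "billing.py": "# COPIED-FROM: shared/dates.py@abc123\ndef parse(d):\n    return d\n",
}
copies = find_copied_code(example)
```

When a bug is found in the original, searching for its path in the marker output lists every copy that probably needs the same fix.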

🛠️ Architectural Strategies That Break the Replication Cycle

Breaking free from error replication requires deliberate architectural choices that prioritize resilience over convenience. The first strategy involves implementing strict interface contracts. When components communicate through well-defined interfaces with comprehensive validation, errors have fewer opportunities to cross system boundaries undetected.

Defensive programming becomes essential in shared components. Every function in a reusable library should validate its inputs, handle edge cases explicitly, and fail gracefully when encountering unexpected conditions. This defensive posture prevents upstream errors from cascading downstream through dependent systems.
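As a small illustration of this defensive posture, the sketch below shows a hypothetical shared `paginate` helper that checks its arguments before doing any work. The function and its rules (pages numbered from 1, an empty list past the last page) are assumptions chosen for the example.

```python
def paginate(items: list, page: int, page_size: int) -> list:
    """Return one page of `items`. Pages are numbered from 1."""
    # Reject wrong types early; bool is excluded because it subclasses int.
    for label, value in (("page", page), ("page_size", page_size)):
        if isinstance(value, bool) or not isinstance(value, int):
            raise TypeError(f"{label} must be an int, got {type(value).__name__}")
    if page < 1:
        raise ValueError("page must be >= 1")
    if page_size < 1:
        raise ValueError("page_size must be >= 1")
    start = (page - 1) * page_size
    # Slicing past the end yields an empty list rather than an exception,
    # which is the explicit, documented behavior for out-of-range pages.
    return list(items[start:start + page_size])
```

A caller that passes `page=0` because of its own off-by-one mistake gets an immediate `ValueError` at the boundary instead of silently receiving the wrong slice.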

Version pinning and controlled upgrades provide another layer of protection. Rather than automatically updating to the latest version of every dependency, establish a review process where updates are tested in isolated environments before deployment. This approach sacrifices some convenience but dramatically reduces the risk of inheriting new bugs through routine updates.
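A pinning policy is easier to keep when a script enforces it. The following sketch scans requirements-style text and reports any entry that is not pinned to an exact version with `==`. The package names are made up, and the pattern is a deliberate simplification that does not cover every valid requirement syntax.

```python
import re

# Simplified pattern: name, optional [extras], then an exact "==" pin.
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]+\])?==[^=\s]+$")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement specifiers that lack an exact version pin."""
    loose = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()      # drop comments
        if not line or line.startswith("-"):      # skip blanks and options
            continue
        spec = line.split(";", 1)[0].strip()      # drop environment markers
        if not PINNED.match(spec):
            loose.append(spec)
    return loose


# Hypothetical requirements file contents.
requirements = "examplelib==1.4.2\nexampleweb>=2.0  # too loose\n\nexamplemath\n"
problems = unpinned_requirements(requirements)
```

Running a check like this in continuous integration would turn an accidental loose specifier into a failed build rather than a surprise upgrade.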

The Principle of Isolated Failure Domains

Modern resilient architectures embrace failure isolation as a core principle. When errors do occur in shared components, proper isolation ensures they affect only a limited subset of functionality rather than cascading throughout the entire system. Circuit breakers, bulkheads, and timeout mechanisms all contribute to this isolation strategy.
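Of the mechanisms just mentioned, the circuit breaker is the easiest to show in a few lines. The class below is a simplified sketch, not a production implementation: the thresholds, the names, and the single-trial half-open behavior are assumptions, and it is not thread-safe.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock          # injectable so tests can control time
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call skipped")
            # Cool-down elapsed: allow one trial call (half-open state).
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0           # any success fully closes the circuit
        return result
```

Wrapping calls to a shared component in `breaker.call(...)` means that once the component starts failing, callers fail fast instead of piling up behind it.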

Microservices architectures, when properly implemented, naturally support failure isolation. Each service maintains its own resources and failure modes, preventing errors in one component from directly affecting others. However, this benefit only materializes when services are truly independent—shared databases or tightly coupled communication patterns can undermine isolation guarantees.

Containerization technologies enhance isolation by providing consistent runtime environments and resource limits. When each component runs in its own container with defined resource constraints, a memory leak or CPU spike in one service cannot starve others of necessary resources.

📊 Testing Strategies That Expose Hidden Replication Errors

Traditional testing approaches often miss replication errors because they focus on individual components in isolation. Discovering these errors requires testing strategies that specifically target the interactions between reused components and their consuming applications.

Contract testing validates that shared components maintain their promises across different usage contexts. Each consumer defines expectations about how a shared service or library should behave, and automated tests verify these expectations remain valid after every change. This approach catches breaking changes before they propagate through dependent systems.
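The sketch below illustrates the consumer-driven idea in miniature. Each consumer registers a check covering only the parts of the response it relies on, and the provider runs every check before a change ships. The provider function, the consumer names, and the checks are all hypothetical; dedicated contract-testing tools add recording, versioning, and cross-repository workflows on top of this basic shape.

```python
def format_user(user_id: int, name: str) -> dict:
    """Hypothetical shared provider function."""
    return {"id": user_id, "name": name.strip(), "active": True}


# Each consumer declares only what it actually depends on.
CONSUMER_CONTRACTS = {
    "billing-service": lambda resp: isinstance(resp["id"], int),
    "email-service": lambda resp: resp["name"] == resp["name"].strip(),
}


def broken_contracts(provider) -> list[str]:
    """Return the consumers whose expectations `provider` no longer meets."""
    response = provider(42, "  Ada  ")
    broken = []
    for consumer, check in CONSUMER_CONTRACTS.items():
        try:
            ok = check(response)
        except (KeyError, TypeError):
            ok = False              # a missing or mistyped field breaks the contract
        if not ok:
            broken.append(consumer)
    return broken


def renamed_field_provider(user_id: int, name: str) -> dict:
    """A seemingly harmless refactor that renames `id` to `user_id`."""
    return {"user_id": user_id, "name": name.strip(), "active": True}
```

Here `broken_contracts(renamed_field_provider)` flags the billing consumer before the rename reaches it, while the email consumer, which never reads the identifier, is unaffected.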

Chaos engineering takes testing further by deliberately injecting failures into production-like environments. By randomly terminating services, corrupting data, or introducing latency, chaos experiments reveal how errors propagate through interconnected systems and whether isolation mechanisms function as designed.
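Full chaos experiments run against deployed environments, but the core idea of fault injection can be sketched in-process. In the example below, a wrapper makes a dependency fail at a chosen rate, and the experiment checks that a hypothetical consumer degrades to a fallback instead of crashing. All names, the price values, and the failure rates are assumptions for illustration.

```python
import random


class InjectedFault(RuntimeError):
    """Raised by the wrapper to simulate a dependency outage."""


def with_faults(func, failure_rate: float, rng: random.Random):
    """Wrap `func` so that a fraction of calls fail with InjectedFault."""
    def wrapper(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFault("injected failure")
        return func(*args, **kwargs)
    return wrapper


def price_or_default(fetch_price, default: float) -> float:
    """Hypothetical consumer: fall back to a default if the dependency fails."""
    try:
        return fetch_price()
    except Exception:
        return default


def run_experiment(failure_rate: float, calls: int = 1000, seed: int = 0) -> dict:
    """Count how often the consumer served a real value versus a fallback."""
    rng = random.Random(seed)       # seeded so the experiment is repeatable
    flaky = with_faults(lambda: 9.99, failure_rate, rng)
    results = [price_or_default(flaky, default=0.0) for _ in range(calls)]
    return {"served": results.count(9.99), "fallbacks": results.count(0.0)}
```

If the consumer lacked the `try`/`except`, the same experiment would surface the unhandled exception immediately, which is exactly the kind of propagation path these experiments are meant to expose.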

The Power of Property-Based Testing

Property-based testing generates hundreds or thousands of random test cases to verify that code behaves correctly across a wide input space. This approach excels at finding edge cases that human testers might miss, particularly in mathematical or algorithmic code that gets reused across multiple contexts.

For shared components, property-based tests should verify invariants—conditions that must always hold regardless of how the component is used. An authentication library might verify that no sequence of operations can grant unauthorized access. A data validation module might ensure that no input can bypass sanitization rules.
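The sanitization invariant can be sketched with nothing but the standard library. The example below generates random strings and checks two properties of a hypothetical `sanitize` function: the output contains only allowed characters, and sanitizing twice gives the same result as sanitizing once. The allowlist and the generator are assumptions; a dedicated library such as Hypothesis would add smarter input generation and shrinking of failing cases.

```python
import random
import string

ALLOWED = set(string.ascii_letters + string.digits + "_-")


def sanitize(text: str) -> str:
    """Hypothetical shared helper: keep only allowlisted characters."""
    return "".join(ch for ch in text if ch in ALLOWED)


def random_text(rng: random.Random, max_len: int = 40) -> str:
    """Generate input that mixes safe, unsafe, and non-ASCII characters."""
    alphabet = string.printable + "éß<>\u0000"
    return "".join(rng.choice(alphabet) for _ in range(rng.randint(0, max_len)))


def check_sanitize_invariants(trials: int = 1000, seed: int = 0) -> int:
    """Raise AssertionError on the first input that violates an invariant."""
    rng = random.Random(seed)
    for _ in range(trials):
        raw = random_text(rng)
        clean = sanitize(raw)
        # Invariant 1: nothing outside the allowlist survives.
        assert set(clean) <= ALLOWED, repr(raw)
        # Invariant 2: sanitizing is idempotent.
        assert sanitize(clean) == clean, repr(raw)
    return trials
```

Because the failing input is included in the assertion message, a violation points directly at a reproducible counterexample.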

Combining property-based testing with mutation testing creates a powerful error detection system. Mutation testing introduces small changes (mutations) into your code and verifies that test suites catch these artificial errors. A high mutation score, meaning the share of mutants that the tests detect, indicates that tests would likely catch real errors before they replicate through dependent systems.
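To show what a mutation tool does under the hood, here is a toy sketch built on Python's `ast` module. It negates one comparison at a time in a small function and reports the fraction of mutants that a given set of test cases detects. The `clamp` function, the test cases, and the single mutation operator are assumptions for illustration; real mutation testing tools apply many more operators and handle equivalent mutants, timeouts, and reporting.

```python
import ast

SOURCE = """
def clamp(value, low, high):
    if value < low:
        return low
    if value > high:
        return high
    return value
"""


class FlipComparison(ast.NodeTransformer):
    """Negate the `target`-th comparison: < becomes >=, > becomes <=."""
    SWAPS = {ast.Lt: ast.GtE, ast.Gt: ast.LtE}

    def __init__(self, target: int):
        self.target = target
        self.seen = 0               # number of mutable comparisons visited

    def visit_Compare(self, node):
        self.generic_visit(node)
        op_type = type(node.ops[0])
        if op_type in self.SWAPS:
            if self.seen == self.target:
                node.ops[0] = self.SWAPS[op_type]()
            self.seen += 1
        return node


def passes(namespace: dict, cases: list) -> bool:
    """Run (args, expected) cases against the clamp found in `namespace`."""
    clamp = namespace["clamp"]
    return all(clamp(*args) == expected for args, expected in cases)


def mutation_score(source: str, cases: list) -> float:
    """Fraction of mutants that make at least one test case fail."""
    counter = FlipComparison(target=-1)   # -1 never matches: count only
    counter.visit(ast.parse(source))
    killed = 0
    for index in range(counter.seen):
        tree = FlipComparison(index).visit(ast.parse(source))
        ast.fix_missing_locations(tree)
        namespace = {}
        exec(compile(tree, "<mutant>", "exec"), namespace)
        if not passes(namespace, cases):
            killed += 1
    return killed / counter.seen


STRONG_CASES = [((5, 0, 10), 5), ((-1, 0, 10), 0), ((11, 0, 10), 10)]
WEAK_CASES = [((-1, 0, 10), 0)]     # never exercises the upper bound
```

With these inputs, the strong suite detects both mutants while the weak suite lets the mutated upper-bound check survive, which is the signal that a shared component's tests have a gap.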

🎯 Code Review Practices That Prevent Error Amplification

Human review remains irreplaceable for catching subtle errors that automated tools miss. However, standard code review practices need enhancement when dealing with reusable components. Reviewers should specifically evaluate how changes might affect downstream consumers, asking questions about backward compatibility and failure modes.

Establishing specialized review checklists for shared code raises the bar for quality. These checklists might include items like: Are all inputs validated? Do error messages provide enough context for debugging? Could this change break existing consumers? Have performance implications been measured?

Pair programming on shared components distributes knowledge and catches errors earlier. When two developers collaborate on creating or modifying reusable code, they bring different perspectives that help identify potential issues before they enter version control.

Documentation as Error Prevention

Comprehensive documentation does more than help developers use shared components—it prevents errors by making assumptions explicit. When documentation clearly states preconditions, postconditions, and expected error handling, developers are less likely to misuse components in ways that introduce bugs.

Living documentation, kept alongside the code and regenerated automatically, stays accurate as the code changes. Tools that generate documentation from code comments, type annotations, and test cases create a single source of truth that reduces miscommunication and incorrect assumptions.

Decision records capture the reasoning behind architectural choices, helping future developers understand not just what the code does but why it does it that way. This context prevents well-intentioned “improvements” that actually introduce errors by violating unstated assumptions.

🚀 Building a Culture of Intelligent Reuse

Technology alone cannot solve error replication—organizational culture plays an equally important role. Teams must develop shared understanding about when reuse makes sense and when custom implementation reduces risk. Not everything deserves abstraction into reusable components.

The “rule of three” provides useful guidance: wait until you’ve implemented similar functionality three times before extracting it into a shared component. This patience allows patterns to emerge naturally and ensures that abstractions serve real needs rather than imagined future requirements.

Creating centers of excellence around critical shared components focuses expertise where it matters most. Rather than treating every piece of code equally, identify the components whose failure would cause disproportionate impact and assign your best engineers to maintain them.

Metrics That Matter for Reuse Quality

Measuring the right metrics helps teams optimize reuse strategies over time. Code duplication percentage indicates how much redundancy exists, but the goal shouldn’t be zero: some duplication protects against error replication. As a rough guide rather than a hard rule, a range of 5% to 15% balances efficiency with isolation.

Dependency depth reveals how many layers of reused components stack atop each other. Deep dependency chains increase brittleness and make debugging difficult. Keeping dependencies relatively flat reduces the probability that errors will propagate through multiple layers.
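Dependency depth is straightforward to compute once the dependency graph is available as data. The sketch below measures the longest chain beneath a root component and rejects cycles. The graph and the component names are hypothetical; in practice the graph would come from a package manager's lock file or a build tool.

```python
def dependency_depth(graph: dict[str, list[str]], root: str) -> int:
    """Length of the longest dependency chain below `root` (0 = no dependencies)."""
    cache: dict[str, int] = {}

    def depth(node: str, stack: frozenset) -> int:
        if node in stack:
            raise ValueError(f"dependency cycle at {node!r}")
        if node not in cache:
            children = graph.get(node, [])
            # A leaf has no children, so max() falls back to -1 and depth is 0.
            cache[node] = 1 + max(
                (depth(child, stack | {node}) for child in children), default=-1
            )
        return cache[node]

    return depth(root, frozenset())


# Hypothetical application: app -> web -> http -> sockets is the longest chain.
example_graph = {
    "app": ["web", "auth"],
    "web": ["http"],
    "auth": ["crypto", "http"],
    "http": ["sockets"],
    "crypto": [],
    "sockets": [],
}
app_depth = dependency_depth(example_graph, "app")
```

Tracking this number per service over time shows whether dependency chains are getting deeper, which is when a bug in a low-level component has the most layers to travel through.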

Mean time to resolution for bugs in shared components provides insight into reuse effectiveness. If shared components consistently take longer to debug than isolated code, the reuse strategy may need adjustment. Ideally, shared components should be easier to fix because they receive more scrutiny and have better test coverage.

🔐 Security Implications of Error Replication

Security vulnerabilities represent the most dangerous form of replication error. A single security flaw in a widely used authentication library or data validation function can expose hundreds of applications to attack. The 2021 Log4Shell vulnerability in Log4j demonstrated this risk at unprecedented scale.

Security-focused code review must be mandatory for all shared components that handle sensitive data, authentication, authorization, or network communication. These reviews should involve specialists who understand common vulnerability patterns and can spot subtle security flaws.

Implementing security scanning in continuous integration pipelines catches known vulnerabilities in dependencies before they reach production. Dependency checkers compare the library versions you use against vulnerability databases, alerting teams when a dependency has a known vulnerability and a fixed version is available.
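The core comparison a dependency checker performs can be sketched as follows. The advisory data, package names, and identifiers here are entirely made up, and the logic makes two simplifying assumptions: versions are plain dotted integers, and every version below the first fixed release is affected. Real scanners query maintained vulnerability databases and handle affected ranges and richer version schemes.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Parse a dotted numeric version such as '2.14.0' (simplified)."""
    return tuple(int(part) for part in version.split("."))


# Hypothetical advisories: package -> list of (first_fixed_version, advisory_id).
ADVISORIES = {
    "examplelog": [("3.2.1", "EXAMPLE-0001")],
}


def find_vulnerable(pins: dict[str, str], advisories: dict) -> list[tuple]:
    """Return (package, installed, advisory_id, fixed_in) for each finding."""
    findings = []
    for name, installed in sorted(pins.items()):
        for fixed_in, advisory_id in advisories.get(name, []):
            if parse_version(installed) < parse_version(fixed_in):
                findings.append((name, installed, advisory_id, fixed_in))
    return findings


# Illustrative pinned versions for one application.
pinned = {"examplelog": "3.0.4", "otherlib": "1.0.0"}
findings = find_vulnerable(pinned, ADVISORIES)
```

Failing the build whenever `findings` is non-empty gives the team a prompt to upgrade before the vulnerable version reaches production.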

The Supply Chain Security Challenge

Modern applications can depend on hundreds or even thousands of third-party packages once transitive dependencies are counted, creating an extensive supply chain that attackers increasingly target. Malicious actors compromise popular packages to inject malware that spreads to every application using that dependency.

Protecting against supply chain attacks requires verification mechanisms like package signing, checksum validation, and source code auditing for critical dependencies. Some teams maintain private mirrors of external packages, scanning and approving versions before making them available to developers.
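Checksum validation is the simplest of these mechanisms to demonstrate. The sketch below compares the SHA-256 digest of a downloaded artifact with an expected value that would normally come from a trusted source such as a lock file. The function names are assumptions; the hashing and comparison calls are from Python's standard library.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, expected_sha256: str) -> bool:
    """Return True only if `data` hashes to the expected digest."""
    actual = sha256_hex(data)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, expected_sha256.lower())


# Illustrative use: the "trusted" digest is computed here only for the demo;
# in practice it would be recorded when the version was reviewed and approved.
artifact = b"contents of an approved package"
trusted_digest = sha256_hex(artifact)
tampered = artifact + b" plus an injected payload"
```

An installer that refuses any artifact for which `verify_artifact` returns `False` will reject a package whose contents changed after approval, even if its name and version number did not.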

Zero-trust principles apply to dependencies as well as users. Even reputable packages should run with minimal privileges in sandboxed environments that limit the damage possible if they become compromised.

🌟 Moving Forward: Your Action Plan for Error-Resistant Systems

Transforming your development practices to prevent error replication starts with assessment. Audit your current codebase to identify the most widely reused components and evaluate their quality, test coverage, and documentation. These high-leverage areas deserve immediate attention.

Establish governance policies for creating and modifying shared components. Require additional review, testing, and documentation for code that will be reused across multiple projects. Create templates that guide developers toward best practices when building reusable components.

Invest in automated tooling that supports intelligent reuse. Static analysis, comprehensive test automation, and continuous monitoring work together to catch errors early and prevent their propagation. These tools pay for themselves by reducing debugging time and preventing production incidents.

Most importantly, foster a learning culture where errors become opportunities for systematic improvement. When replication errors occur, conduct blameless postmortems that identify not just the immediate bug but the process failures that allowed it to spread. Use these insights to strengthen your development practices continuously.

The path to error-resistant systems requires balancing competing priorities: efficiency versus isolation, speed versus thoroughness, reuse versus redundancy. There are no perfect answers, but conscious decision-making informed by understanding error replication mechanics will guide you toward increasingly resilient architectures. Your systems may never be completely error-free, but they can become dramatically more robust when you break the cycle of blind reuse and embrace intelligent, intentional sharing of code that’s truly worthy of replication. 🎯

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically-focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits
- The strategic value of Decision Support Effectiveness
- The cascading dangers of Error Amplification Risks
- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.