Complex systems surround us daily, from power grids to financial networks, and when one component fails, the domino effect can be catastrophic and rapid.

🔗 The Anatomy of Cascading Failures in Modern Systems

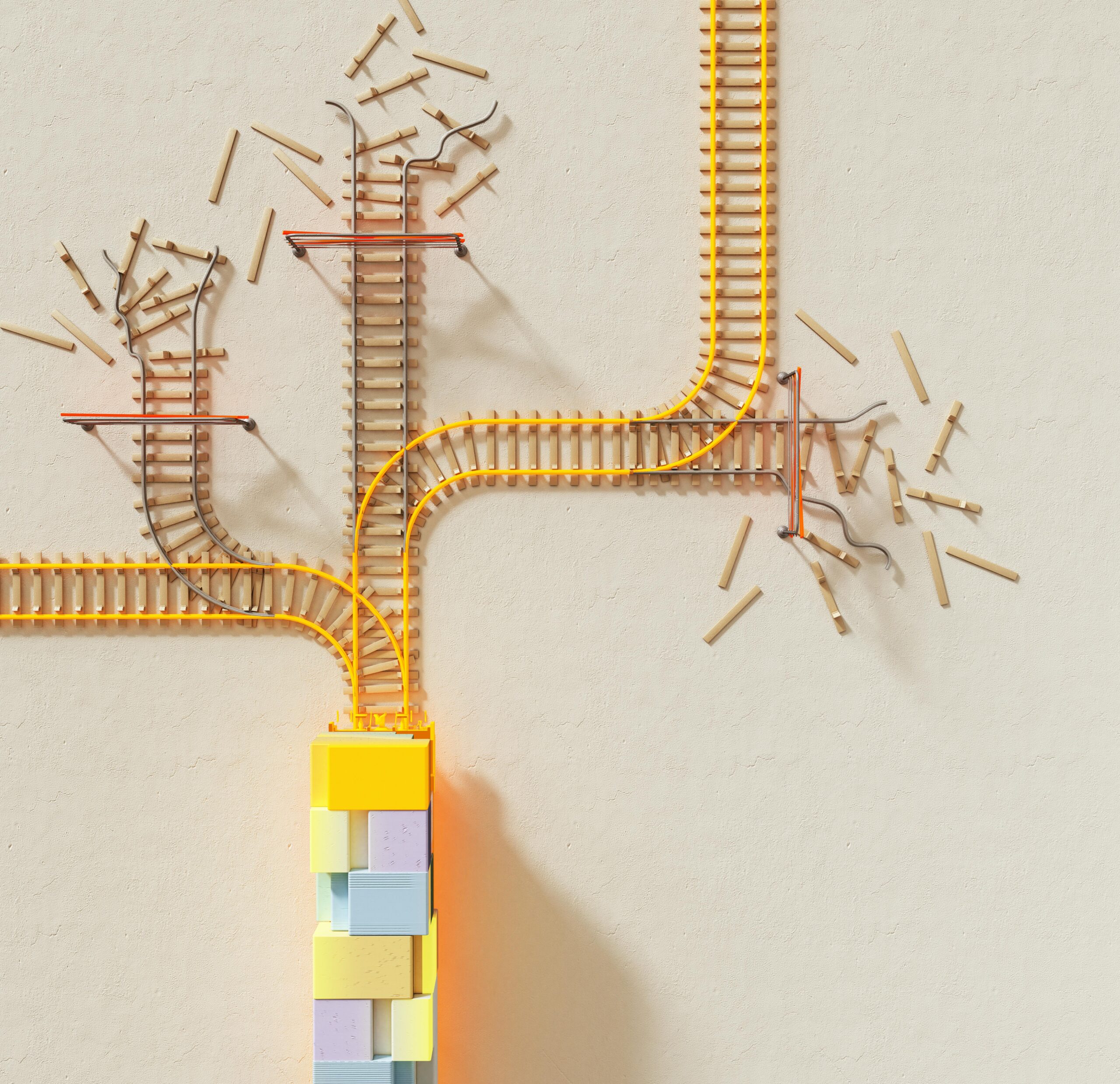

Cascading failures represent one of the most challenging phenomena in complex systems engineering. These chain reactions occur when a single point of failure triggers subsequent failures throughout an interconnected network, creating a domino effect that can rapidly spiral out of control. Understanding this mechanism is crucial in our increasingly interconnected world, where systems ranging from electrical grids to social media platforms depend on intricate webs of dependencies.

The fundamental characteristic of cascading failures lies in their non-linear progression. A minor disruption in one area can amplify exponentially as it propagates through the system, often producing consequences far more severe than the initial trigger would suggest. This phenomenon challenges traditional linear thinking and requires a holistic approach to system design and risk management.

Modern infrastructure has become so interconnected that the failure of a single component can have far-reaching implications across multiple sectors. Power distribution networks, communication systems, financial markets, and supply chains all exhibit this vulnerability. The 2003 Northeast Blackout, which affected roughly 50 million people across the United States and Canada, exemplifies the pattern: a software bug in a control-room alarm system left operators unaware of early transmission line failures, and the disturbance cascaded into one of the largest power failures in North American history.

⚡ Real-World Examples That Changed How We Think About System Resilience

History provides numerous sobering examples of cascading failures. The 2008 financial crisis demonstrated how interconnected financial institutions could create systemic risk. When Lehman Brothers collapsed, the shockwaves rippled through global markets, freezing credit systems and triggering a worldwide recession. This wasn’t simply one bank failing—it was a cascade of interconnected failures across institutions that were deemed “too big to fail.”

In technological infrastructure, the 2017 Amazon Web Services outage showcased the vulnerability of cloud-dependent systems. A mistyped command during a debugging session took more servers offline than intended, causing a major S3 storage service disruption that cascaded to affect thousands of websites and services relying on AWS infrastructure. Companies lost millions of dollars in mere hours, and millions of users found themselves unable to access essential services.

The COVID-19 pandemic revealed cascading failures in global supply chains with unprecedented clarity. Factory closures in one region led to parts shortages globally, which prevented manufacturers from producing finished goods, creating inventory shortages, disrupting logistics networks, and ultimately impacting consumer markets worldwide. The semiconductor shortage that followed demonstrated how specialized components manufactured in concentrated geographic areas could create bottlenecks affecting everything from automobiles to consumer electronics.

Transportation Networks and Their Vulnerability Points

Transportation systems provide another compelling case study. When the Ever Given container ship blocked the Suez Canal in 2021, the cascading effects were immediate and severe. The blockage closed a waterway that carries roughly 12% of global trade, created backlogs of hundreds of ships, delayed critical supplies, and caused billions in economic losses. This single incident highlighted how critical chokepoints in global infrastructure can create widespread cascading failures.

🧠 The Science Behind Chain Reactions in Complex Systems

Understanding cascading failures requires grasping several key concepts from network theory and complexity science. Complex systems exhibit emergent properties that cannot be predicted by examining individual components in isolation. These systems are characterized by high connectivity, feedback loops, and non-linear dynamics that make them particularly susceptible to cascading failures.

Network topology plays a crucial role in determining cascade vulnerability. Scale-free networks, which characterize many real-world systems, contain a few hub nodes with many connections and numerous peripheral nodes with few connections. This structure provides efficiency under normal conditions and tolerates the random loss of peripheral nodes fairly well, but it creates a significant vulnerability: when a hub node fails or is deliberately targeted, the disruption can cascade rapidly throughout the entire network.
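The difference between losing a peripheral node and losing a hub can be illustrated with a small sketch. The graph below is a hypothetical hub-and-spoke toy (two linked hubs with five leaves each), not a model of any real network, and the function names are invented for this example. It compares the largest connected component that survives each kind of loss.

```python
from collections import deque

def largest_component(adj, removed):
    """Size of the largest connected component once `removed` nodes are gone."""
    seen, best = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            node = queue.popleft()
            size += 1
            for nbr in adj[node]:
                if nbr not in seen:
                    seen.add(nbr)
                    queue.append(nbr)
        best = max(best, size)
    return best

def build_hub_network():
    """Hypothetical toy graph: two linked hubs, each with five leaf nodes."""
    edges = [("hubA", "hubB")]
    edges += [("hubA", f"a{i}") for i in range(5)]
    edges += [("hubB", f"b{i}") for i in range(5)]
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj

network = build_hub_network()
after_leaf_loss = largest_component(network, {"a0"})   # one peripheral node lost
after_hub_loss = largest_component(network, {"hubA"})  # one hub lost
print(after_leaf_loss, after_hub_loss)
```

In this toy graph, losing a leaf leaves 11 of the 12 nodes connected, while losing one hub cuts the largest connected group to 6. Real scale-free networks are far larger, but the asymmetry is the point of the illustration.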

Feedback loops shape how a cascade unfolds. Positive feedback loops accelerate the cascade, while negative feedback loops can dampen it. In electrical grids, when one transmission line fails, the load redistributes to neighboring lines. If those lines become overloaded and fail, the cascade accelerates. Each failure increases the burden on remaining components, creating a positive feedback loop that can lead to total system collapse.
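The load-redistribution loop described above can be sketched in a few lines. This is a deliberately simplified model: the load of a failed line is split evenly among all surviving lines, and any line pushed past its capacity fails in the next round. Real grids redistribute power according to network physics, and the line names, loads, and capacities here are made up for illustration.

```python
def simulate_cascade(loads, capacities, first_failure):
    """Return the lines that fail in each round of a toy load-sharing model."""
    loads = dict(loads)  # work on a copy so the caller's data is untouched
    failed = set()
    rounds = []
    newly_failed = [first_failure]
    while newly_failed:
        rounds.append(sorted(newly_failed))
        failed.update(newly_failed)
        survivors = [line for line in loads if line not in failed]
        if not survivors:
            break
        # Assumption: shed load is split evenly among surviving lines.
        shed = sum(loads[line] for line in newly_failed)
        for line in survivors:
            loads[line] += shed / len(survivors)
        newly_failed = [line for line in survivors if loads[line] > capacities[line]]
    return rounds

loads = {"L1": 60, "L2": 70, "L3": 50, "L4": 40}
ample = {"L1": 100, "L2": 100, "L3": 100, "L4": 100}
tight = {"L1": 100, "L2": 85, "L3": 80, "L4": 80}

contained = simulate_cascade(loads, ample, "L1")
collapse = simulate_cascade(loads, tight, "L1")
print(contained)
print(collapse)
```

With ample spare capacity the initial failure stays contained to one line. With tight margins the same initial failure overloads L2, whose load then overloads L3 and L4, so every line is lost within three rounds.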

Critical Thresholds and Tipping Points

Many complex systems exhibit critical thresholds beyond which cascading failures become inevitable. Below these thresholds, the system can absorb shocks and recover. Beyond them, small perturbations trigger large-scale failures. Identifying these thresholds remains one of the greatest challenges in system design and management.
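One common way to reason about such a threshold, offered here as an illustrative simplification rather than something the examples above establish, is a branching-process view: if each failure triggers on average `r` further failures, the expected cascade size stays bounded when `r` is below 1 and grows without limit when `r` is at or above 1. The function name and parameter values are assumptions for the sketch.

```python
def expected_cascade_size(r, generations):
    """Expected total failures after `generations` rounds, starting from one failure.

    Assumes each failure independently triggers `r` further failures on average.
    """
    return sum(r ** k for k in range(generations + 1))

below_threshold = expected_cascade_size(0.5, 50)  # converges toward 1 / (1 - r)
above_threshold = expected_cascade_size(1.5, 10)  # keeps growing with each round
print(round(below_threshold, 3), round(above_threshold, 1))
```

Below the threshold, the total settles near `1 / (1 - r)`, which is 2 failures for `r = 0.5`. Above it, ten rounds are already enough to exceed 170 expected failures from a single trigger.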

The concept of “normal accidents” introduced by sociologist Charles Perrow suggests that in systems with high complexity and tight coupling, cascading failures are not aberrations but inevitable outcomes. Tight coupling means that processes happen quickly with little slack, leaving minimal time for intervention. High complexity means that system behaviors are not always predictable or observable.

🛡️ Prevention Strategies: Building Resilient Systems

Preventing cascading failures requires multi-layered strategies that address system design, monitoring, and response capabilities. Redundancy represents the most fundamental approach—creating backup systems and alternative pathways ensures that single point failures don’t propagate. However, redundancy alone isn’t sufficient, as redundant systems can share common vulnerabilities or fail simultaneously under certain conditions.
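A rough calculation shows why shared vulnerabilities erode the benefit of redundancy. The sketch below uses a simplified form of the beta-factor idea from reliability engineering: a fraction `beta` of each component's failure probability is assumed to come from a common cause that takes out every copy at once. The function name and the numbers are hypothetical.

```python
def system_failure_probability(p, n, beta):
    """Probability that all `n` redundant components are down.

    p    -- failure probability of a single component
    beta -- assumed fraction of failures caused by a shared (common-mode) event
    """
    common = beta * p                      # one event disables every copy
    independent = ((1 - beta) * p) ** n    # every copy fails on its own
    return 1 - (1 - common) * (1 - independent)

fully_independent = system_failure_probability(p=0.01, n=3, beta=0.0)
shared_weakness = system_failure_probability(p=0.01, n=3, beta=0.1)
print(fully_independent, shared_weakness)
```

Under these assumptions, three fully independent copies fail together about once in a million trials. If 10% of failures share a cause, the figure rises to roughly one in a thousand, and adding more identical copies barely helps.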

Modularity and compartmentalization create barriers to cascade propagation. By designing systems with clearly defined boundaries and limited interconnections between modules, failures can be contained within specific sections. This principle applies across domains, from software architecture with microservices to financial regulations requiring separation between banking activities.

Diversity in system components provides protection against common-mode failures. If all components share the same vulnerabilities—using identical software, hardware, or processes—a single exploit can trigger widespread failure. Introducing diversity means that different components fail under different conditions, reducing cascade likelihood.

Early Warning Systems and Monitoring

Advanced monitoring systems enable early detection of conditions that might trigger cascades. Real-time data analysis, anomaly detection algorithms, and predictive modeling can identify precursors to cascading failures, providing opportunities for preventive intervention. Machine learning models trained on historical failure data can recognize patterns that human operators might miss.
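As a minimal sketch of anomaly detection, the snippet below flags readings that deviate sharply from the recent past using a rolling z-score. It is one simple technique among many, not a description of any particular monitoring product. The window size, threshold, and sample readings are assumptions chosen for illustration.

```python
from statistics import mean, stdev

def rolling_zscore_alerts(readings, window=5, threshold=3.0):
    """Indices of readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings."""
    alerts = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(readings[i] - mu) / sigma > threshold:
            alerts.append(i)
    return alerts

# Hypothetical line-load readings with one sudden spike at index 7.
line_load = [50, 51, 49, 50, 52, 51, 50, 75, 51, 50]
alerts = rolling_zscore_alerts(line_load)
print(alerts)
```

Only the spike at index 7 is flagged. Note that the spike inflates the variance of the following windows, so a production detector would likely need a more robust baseline.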

Creating meaningful indicators and dashboards helps operators understand system health at a glance. Key performance indicators should focus not just on individual component status but on system-level metrics that reflect overall resilience and proximity to critical thresholds. Stress testing and simulation exercises help organizations prepare for cascading failure scenarios before they occur in reality.

🔧 Response Protocols When Prevention Fails

Despite best prevention efforts, cascading failures sometimes occur. Effective response protocols can limit damage and accelerate recovery. Rapid isolation of failing components prevents cascade propagation—circuit breakers in electrical systems and trading halts in financial markets exemplify this principle. Automated systems can respond faster than human operators, but they must be carefully designed to avoid triggering false positives that cause unnecessary disruptions.
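The isolation principle carries over to software as the circuit breaker pattern. The class below is a bare-bones sketch with hypothetical names: after a set number of consecutive failures it "opens" and rejects further calls immediately instead of passing them to the failing dependency. Timed recovery (a half-open state) is omitted to keep the example short.

```python
class CircuitBreaker:
    """Minimal circuit breaker: opens after N consecutive failures."""

    def __init__(self, failure_threshold=3):
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0
        self.open = False

    def call(self, func, *args):
        if self.open:
            # Fail fast without touching the struggling dependency.
            raise RuntimeError("circuit open: call rejected")
        try:
            result = func(*args)
        except Exception:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.failure_threshold:
                self.open = True
            raise
        self.consecutive_failures = 0  # any success resets the count
        return result

calls_made = []

def failing_dependency():
    """Hypothetical backend that is currently down."""
    calls_made.append(1)
    raise ConnectionError("backend unavailable")

breaker = CircuitBreaker(failure_threshold=3)
outcomes = []
for _ in range(5):
    try:
        breaker.call(failing_dependency)
    except ConnectionError:
        outcomes.append("failed")
    except RuntimeError:
        outcomes.append("rejected")
print(outcomes)
```

The first three calls reach the backend and fail. The last two are rejected by the breaker itself, so the failing dependency receives no further load while it recovers.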

Graceful degradation allows systems to maintain core functionality even when components fail. Rather than complete collapse, systems should prioritize critical functions and shed less essential operations. Airlines implement this principle when weather disruptions occur—they prioritize safety-critical communications and operations while delaying non-essential services.
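Priority-based load shedding is one way to express graceful degradation in code. In the sketch below, services are admitted in priority order until capacity runs out, and everything after the first service that does not fit is shed. The service names, priorities, and costs are invented for the example, and strict priority ordering is a design assumption.

```python
def shed_load(services, capacity):
    """Split services into (kept, shed) given limited capacity.

    services -- list of (name, priority, cost); priority 1 is most critical
    Strict priority: once one service does not fit, all lower-priority
    services are shed as well.
    """
    kept, shed, used = [], [], 0
    out_of_room = False
    for name, _priority, cost in sorted(services, key=lambda s: s[1]):
        if not out_of_room and used + cost <= capacity:
            kept.append(name)
            used += cost
        else:
            out_of_room = True
            shed.append(name)
    return kept, shed

services = [
    ("recommendations", 3, 40),
    ("checkout", 1, 30),
    ("search", 2, 30),
    ("analytics", 4, 20),
]
normal_kept, normal_shed = shed_load(services, capacity=100)
degraded_kept, degraded_shed = shed_load(services, capacity=60)
print(degraded_kept, degraded_shed)
```

At full capacity only analytics is dropped. When capacity falls to 60, the system keeps checkout and search and sheds the rest, instead of failing as a whole.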

Communication protocols during cascading failures prove critical. Clear, timely information sharing between stakeholders enables coordinated response. The absence of effective communication often exacerbates cascades, as different parts of the system respond based on incomplete information, potentially working at cross-purposes.

Learning from Near-Misses and Close Calls

Organizations often focus on analyzing major failures while overlooking near-misses that didn’t cascade into full crises. These close calls provide invaluable learning opportunities without the associated costs. Establishing reporting systems that encourage documentation of near-misses without blame creates a learning culture that improves system resilience over time.

💡 Cross-Sector Applications and Universal Principles

The principles of preventing cascading failures apply remarkably consistently across diverse domains. Healthcare systems face cascading failures when emergency departments become overwhelmed, leading to ambulance diversions, delayed treatments, and increased mortality. Applying network theory and capacity planning can help health systems build resilience against these cascades.

In cybersecurity, cascading failures occur when one compromised system provides access to others, allowing attackers to move laterally through networks. Zero-trust architecture, network segmentation, and the principle of least privilege are all strategies for preventing security breach cascades. The SolarWinds attack demonstrated how supply chain compromises can cascade to affect thousands of downstream organizations.
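The effect of segmentation on lateral movement can be approximated as a reachability question: starting from one compromised host, which others can be reached by following permitted connections? The sketch below compares a flat network with a segmented one. The host names and allow-lists are hypothetical, and real attack paths depend on much more than network reachability.

```python
from collections import deque

def reachable_hosts(allowed, entry_point):
    """Hosts reachable from `entry_point` by following allowed connections."""
    seen = {entry_point}
    queue = deque([entry_point])
    while queue:
        host = queue.popleft()
        for nxt in allowed.get(host, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

hosts = ["laptop", "printer", "web", "app", "db"]

# Flat network: every host may connect to every other host.
flat = {h: [o for o in hosts if o != h] for h in hosts}

# Segmented network: office devices are isolated from the server tiers.
segmented = {"laptop": ["printer"], "web": ["app"], "app": ["db"]}

flat_exposure = reachable_hosts(flat, "laptop")
segmented_exposure = reachable_hosts(segmented, "laptop")
print(len(flat_exposure), sorted(segmented_exposure))
```

In the flat network a compromised laptop exposes all five hosts. With segmentation, the same compromise reaches only the laptop and the printer.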

Social media platforms experience cascades of a different nature: information cascades, in which misinformation spreads rapidly through networks. Understanding these dynamics requires analytical frameworks similar to those used for physical system cascades, though the mechanisms differ. Content moderation strategies attempt to interrupt these cascades before they reach critical mass.

Environmental and Ecological Systems

Ecological systems demonstrate cascading failures through trophic cascades, where changes at one level of the food web cascade through the entire ecosystem. The removal of apex predators can trigger cascading changes that fundamentally alter ecosystem structure. Conservation strategies increasingly recognize the need for system-level thinking rather than single-species focus.

Climate change represents a meta-level cascade risk, where warming temperatures trigger feedback loops—melting permafrost releases methane, which accelerates warming, which melts more permafrost. Understanding and interrupting these cascades represents one of humanity’s greatest challenges. The interconnections between climate systems, agricultural systems, economic systems, and social systems create the potential for civilization-scale cascading failures.

🚀 Future Challenges and Emerging Technologies

As systems become more complex and interconnected, cascading failure risks evolve. The Internet of Things creates vast networks of interconnected devices, each potentially representing a vulnerability point. Autonomous systems and artificial intelligence introduce new dynamics—algorithm-driven trading can trigger financial cascades at machine speed, faster than human intervention can manage.

Smart cities represent a convergence of multiple complex systems—power, water, transportation, communication—all digitally interconnected. This integration creates efficiency gains but also unprecedented cascade risks. A cyber-attack could potentially cascade across multiple infrastructure systems simultaneously, creating compounding crises.

Blockchain and distributed systems promise some resilience benefits through decentralization, reducing single points of failure. However, they introduce new vulnerabilities through code dependencies and consensus mechanisms. The complexity of these systems makes predicting cascade pathways increasingly difficult.

Artificial Intelligence as Both Solution and Risk

AI systems offer powerful tools for predicting and preventing cascading failures through pattern recognition and real-time optimization. However, they also introduce risks—adversarial attacks, algorithmic biases, and opacity in decision-making processes. As AI systems become more deeply embedded in critical infrastructure, understanding their failure modes becomes essential.

The integration of multiple AI systems creates the potential for algorithmic cascades where automated decisions trigger responses in other systems, creating feedback loops that humans struggle to comprehend or interrupt. Flash crashes in financial markets provide glimpses of this future, where algorithmic trading systems interact in unexpected ways, creating rapid cascades.

🌐 Building a Culture of Resilience

Technical solutions alone cannot prevent cascading failures. Organizational culture, training, and mindset play equally important roles. High-reliability organizations in fields like aviation and nuclear power demonstrate that sustained attention to safety, continuous learning, and healthy respect for complexity can maintain remarkable safety records despite operating high-risk systems.

Psychological safety enables team members to report concerns, near-misses, and potential vulnerabilities without fear of punishment. Organizations that punish messengers of bad news create conditions where small problems remain hidden until they cascade into crises. Transparency, open communication, and learning orientation build resilience at the human level that complements technical safeguards.

Cross-disciplinary collaboration brings diverse perspectives to resilience challenges. Engineers, social scientists, designers, and domain experts each contribute unique insights. Complex systems require complex teams with varied expertise working together to understand risks and design robust solutions.

The challenge of cascading failures in complex systems will only intensify as our world becomes more interconnected. Understanding the mechanisms that drive these chain reactions—from network topology to feedback loops to critical thresholds—provides the foundation for designing more resilient systems. Combining technical solutions like redundancy and modularity with organizational practices like continuous monitoring and learning cultures offers the best path forward.

No system can be made completely immune to cascading failures, but thoughtful design, vigilant monitoring, and effective response protocols can dramatically reduce their frequency and severity. As we continue building increasingly complex and interconnected systems, the principles of resilience engineering must move from specialized knowledge to fundamental design requirements. The cost of cascading failures, measured in economic losses, disrupted services, and sometimes human lives, demands nothing less than our sustained attention and best efforts to understand and prevent these chain reactions.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits
- The strategic value of Decision Support Effectiveness
- The cascading dangers of Error Amplification Risks
- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.