When a single component fails, entire systems can collapse. Understanding this vulnerability is essential for building resilience in today’s interconnected digital infrastructure.

🔗 The Hidden Vulnerability in Modern Systems

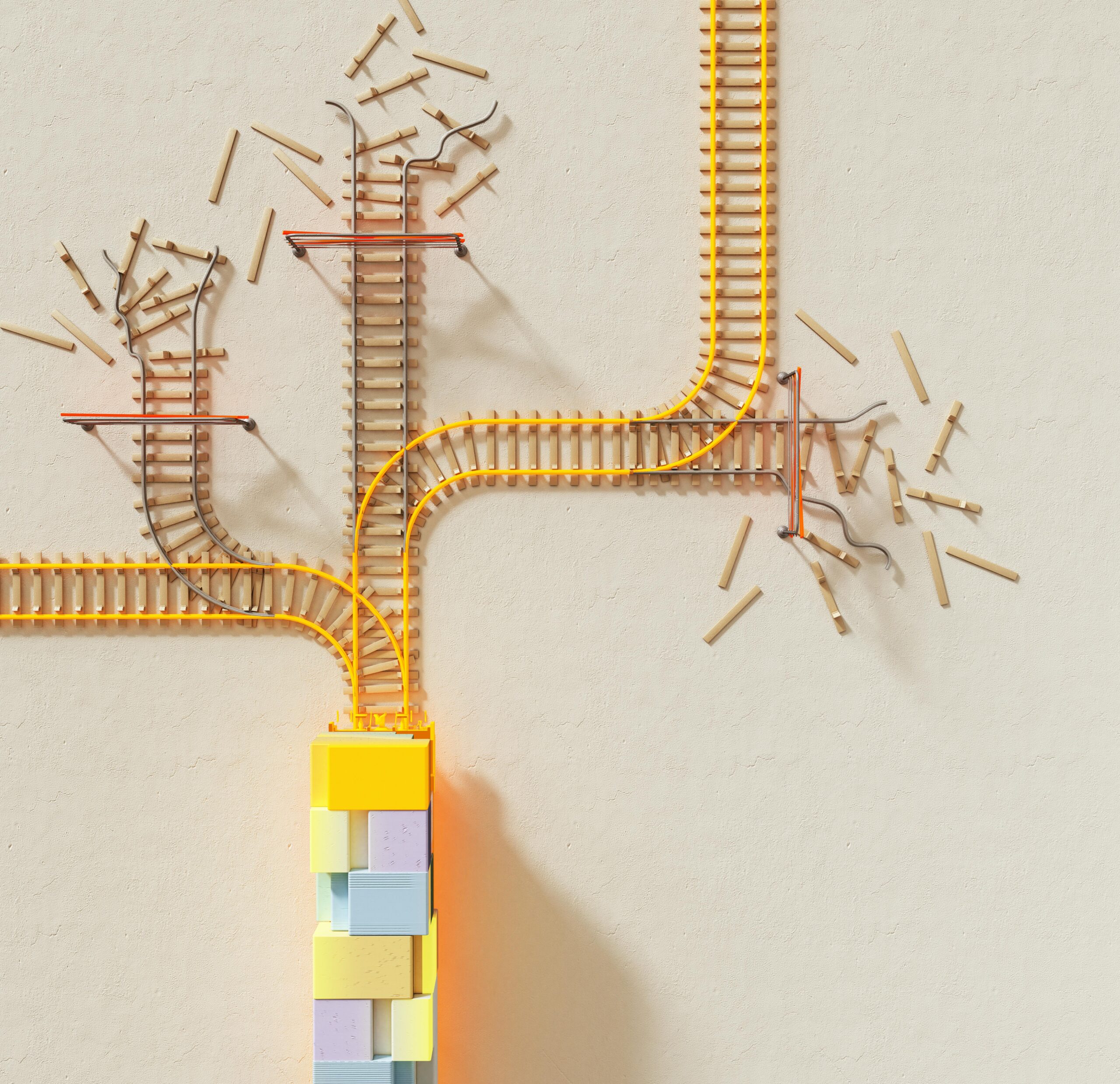

In our increasingly interconnected world, systems have become more complex and interdependent than ever before. From financial networks to power grids, healthcare systems to cloud computing platforms, we rely on intricate webs of technology that operate seamlessly—until they don’t. The concept of single-point failures represents one of the most critical vulnerabilities in modern infrastructure, where the breakdown of one seemingly minor component can trigger cascading effects that bring entire systems to their knees.

Single-point failures occur when a system lacks redundancy at a critical juncture. Think of it as a bridge with only one support pillar—if that pillar collapses, the entire structure fails. In technological and organizational contexts, these vulnerabilities exist wherever there’s insufficient backup, no alternative pathway, or inadequate failover mechanisms. The consequences can range from minor inconveniences to catastrophic disasters affecting millions of people.

What makes these failures particularly dangerous is their amplifying nature. A small glitch in one area doesn’t simply stop at its source—it propagates through connected systems, gaining momentum and impact as it spreads. This phenomenon has been observed repeatedly throughout history, from the 2003 Northeast Blackout that affected 50 million people to the 2021 Facebook outage that disrupted services for billions of users worldwide.

⚡ The Mechanics of Cascade Failures

Understanding how single-point failures escalate into widespread catastrophes requires examining the underlying mechanics of cascade effects. These failures typically progress through several predictable stages, each amplifying the damage of the previous one.

Initially, a triggering event occurs at a vulnerable point in the system. This could be a hardware malfunction, software bug, human error, or external attack. The immediate impact might seem manageable and localized. However, because modern systems are tightly coupled, this initial failure quickly affects dependent components.

As the failure propagates, it encounters other systems that rely on the compromised component. These secondary systems, designed with the assumption that primary systems will function normally, suddenly face unexpected conditions. Without proper error handling or graceful degradation mechanisms, they begin failing as well. This creates a domino effect in which each failure can trigger further failures, so the number of affected components can grow rapidly.
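The domino effect can be illustrated with a small model. Treat the system as a dependency graph and walk outward from the failed component to everything that depends on it. This is a minimal sketch under stated assumptions: every dependency is hard (no redundancy, no graceful degradation), and the component names are hypothetical.

```python
from collections import deque


def cascade(depends_on: dict[str, set[str]], initial_failure: str) -> set[str]:
    """Return every component that fails once `initial_failure` goes down.

    `depends_on` maps each component to the components it requires.
    Every dependency is treated as hard: losing any one of them takes
    the dependent component down too (no redundancy, no degradation).
    """
    # Invert the graph so we can walk from a failed component to its dependents.
    dependents: dict[str, set[str]] = {}
    for component, requirements in depends_on.items():
        for req in requirements:
            dependents.setdefault(req, set()).add(component)

    # Breadth-first propagation of the failure.
    failed = {initial_failure}
    queue = deque([initial_failure])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, set()):
            if dependent not in failed:
                failed.add(dependent)
                queue.append(dependent)
    return failed


# Hypothetical architecture: the API needs both an auth service and a database.
system = {
    "web": {"api"},
    "mobile": {"api"},
    "api": {"auth", "db"},
    "reports": {"db"},
    "auth": set(),
    "db": set(),
}

print(sorted(cascade(system, "auth")))  # → ['api', 'auth', 'mobile', 'web']
```

In this toy architecture, losing `auth` takes down four of the six components, while `reports` survives because it does not sit downstream of `auth`. Real dependencies are rarely all-or-nothing, and that gap is where redundancy and degradation change the outcome.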

The Speed Factor in System Breakdowns

One of the most challenging aspects of cascade failures is their velocity. In digital systems, failures can propagate at electronic speeds, jumping from one component to another in milliseconds. This rapid progression often outpaces human response capabilities, making manual intervention difficult or impossible during the critical early stages.

The 2010 Flash Crash exemplifies this phenomenon. On May 6, 2010, in an episode lasting roughly 36 minutes, the Dow Jones Industrial Average plunged nearly 1,000 points and temporarily erased approximately $1 trillion in market value. Automated trading systems, reacting to the initial price movements, triggered cascading sell orders that overwhelmed the market. Although prices recovered much of the drop that same afternoon, the incident demonstrated how quickly interconnected automated systems can amplify an initial disturbance into a massive disruption.

🏢 Real-World Examples of Catastrophic Cascades

History provides numerous sobering examples of how single-point failures have resulted in widespread system breakdowns. These case studies offer valuable lessons about vulnerability, interdependence, and the importance of resilience engineering.

The 2017 Amazon Web Services Outage

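In February 2017, a simple typo during a routine debugging procedure took down a significant portion of Amazon’s S3 cloud storage service in the US-EAST-1 (Northern Virginia) region. An engineer running a command intended to remove a small number of servers entered one of its inputs incorrectly and removed substantially more capacity than intended. The removed servers supported S3 subsystems that then had to be fully restarted, and because those subsystems had not been completely restarted in years, recovery took far longer than expected. The result was a roughly four-hour outage affecting thousands of websites and services that depended on AWS infrastructure.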

The incident highlighted how centralized cloud services, while offering efficiency and scalability, create single points of failure for vast ecosystems of dependent applications. Services such as Slack and Trello, along with many others, were disrupted because they relied on the affected AWS region without adequate multi-region redundancy.

The Colonial Pipeline Ransomware Attack

In May 2021, a ransomware attack on Colonial Pipeline’s IT systems led the company to shut down its pipeline operations as a precaution. This single attack on one pipeline operator triggered fuel shortages along the U.S. East Coast, especially in the Southeast, along with panic buying, price spikes, and emergency declarations by several states and the federal government.

What made this incident particularly instructive was that the pipeline’s operational systems themselves were not reported to have been compromised. The company shut them down preventively because it could not be sure the attack would stay confined to its IT network rather than crossing into its operational technology (OT) network, which is exactly the kind of spread that strong IT/OT segmentation is meant to prevent. This demonstrated how the mere potential for cascade failure can itself become a triggering factor for widespread disruption.

🧠 Psychological and Organizational Amplifiers

While technical factors drive many cascade failures, psychological and organizational elements often amplify their severity. Human responses to system failures can either mitigate damage or accelerate breakdown, depending on preparation, training, and decision-making under pressure.

During crisis situations, cognitive biases and stress responses can impair decision-making. The urgency to restore systems quickly may lead to hasty interventions that worsen the situation. Additionally, organizational structures that lack clear protocols for emergency response often experience paralysis or conflicting directives that prevent effective coordinated action.

The Communication Breakdown Problem

Cascade failures frequently intensify due to communication breakdowns within organizations. When systems fail, the very communication channels needed to coordinate response efforts may themselves be compromised. Teams working in silos may not share critical information promptly, or they may receive contradictory instructions from different management levels.

The lack of established communication protocols during emergencies means that valuable time is lost as teams attempt to understand what’s happening, who’s responsible for what, and what actions should be taken. This organizational confusion allows technical failures to spread unchecked while human responders struggle to coordinate an effective response.

🛡️ Identifying Vulnerable Single Points in Your Systems

Preventing catastrophic cascade failures begins with systematically identifying single points of failure within your systems. This requires a comprehensive approach that examines technical infrastructure, processes, personnel, and external dependencies.

Start by mapping your system architecture in detail. Document all components, connections, and dependencies. Pay special attention to elements that, if removed, would render the system inoperable. These critical components warrant particular scrutiny and redundancy planning.
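The "if removed, would render the system inoperable" test can be automated with a brute-force check. Delete each component in turn and see whether a request can still travel from the entry point to the component that does the essential work. This sketch assumes the architecture is recorded as an undirected graph of links; the node names are hypothetical.

```python
from collections import defaultdict, deque


def reachable(links: list[tuple[str, str]], start: str, goal: str,
              removed: str) -> bool:
    """Breadth-first search from start to goal with one component taken out."""
    graph: dict[str, set[str]] = defaultdict(set)
    for a, b in links:
        if removed not in (a, b):
            graph[a].add(b)
            graph[b].add(a)
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return True
        for neighbour in graph[node] - seen:
            seen.add(neighbour)
            queue.append(neighbour)
    return False


def single_points_of_failure(links: list[tuple[str, str]], entry: str,
                             target: str) -> list[str]:
    """Components whose removal alone cuts every path from entry to target."""
    candidates = {node for link in links for node in link} - {entry, target}
    return sorted(n for n in candidates
                  if not reachable(links, entry, target, removed=n))


# Hypothetical architecture: two app servers, but one DNS entry and one load balancer.
links = [
    ("client", "dns"),
    ("dns", "load_balancer"),
    ("load_balancer", "app_a"),
    ("load_balancer", "app_b"),
    ("app_a", "database"),
    ("app_b", "database"),
]

print(single_points_of_failure(links, "client", "database"))  # → ['dns', 'load_balancer']
```

Duplicating the app servers protects that tier, but the check still surfaces `dns` and `load_balancer`. The endpoints are excluded by construction, though `database` is also a single point of failure in this model because it has no replica. The loop runs one search per component, which is cheap for architecture diagrams of a few hundred nodes.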

Consider dependencies beyond your immediate control. Third-party services, vendors, utility providers, and infrastructure partners all represent potential single points of failure. A comprehensive risk assessment must account for these external factors and develop contingency plans for their unavailability.

The Dependency Mapping Process

Effective dependency mapping involves creating visual representations of system relationships. This includes:

- Identifying all system components and their functions

- Documenting connections and data flows between components

- Noting criticality levels for each component

- Highlighting components without redundancy

- Tracking external dependencies and service level agreements

- Assessing potential failure modes for each component
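One way to keep such a map machine-readable is a small inventory record per component that captures the items listed above. The field names, the three-level criticality scale, and the components below are hypothetical. The point is that once redundancy and external ownership are recorded, the riskiest entries can be queried rather than eyeballed.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Optional


class Criticality(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Component:
    """One row of a dependency map (field names are illustrative)."""
    name: str
    function: str
    criticality: Criticality
    replicas: int = 1               # 1 means no redundancy
    external: bool = False          # owned by a vendor or third party
    sla: Optional[str] = None       # reference to the vendor's SLA, if any
    depends_on: list[str] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)


def unprotected_critical(inventory: list[Component]) -> list[str]:
    """High-criticality components with no redundancy: prime SPOF suspects."""
    return [c.name for c in inventory
            if c.criticality == Criticality.HIGH and c.replicas < 2]


def external_dependencies(inventory: list[Component]) -> list[tuple[str, Optional[str]]]:
    """Third-party components and the SLA (if any) that covers them."""
    return [(c.name, c.sla) for c in inventory if c.external]


# Hypothetical inventory for an online shop.
inventory = [
    Component("payments-api", "process checkouts", Criticality.HIGH,
              replicas=3, depends_on=["card-gateway", "orders-db"]),
    Component("orders-db", "store orders", Criticality.HIGH,
              failure_modes=["disk full", "replication lag"]),
    Component("card-gateway", "authorize card payments", Criticality.HIGH,
              external=True, sla="vendor SLA"),
    Component("recommendations", "suggest products", Criticality.LOW),
]

print(unprotected_critical(inventory))   # → ['orders-db', 'card-gateway']
print(external_dependencies(inventory))  # → [('card-gateway', 'vendor SLA')]
```

The triple-replicated `payments-api` looks safe on its own, yet the query shows that both things it depends on are unreplicated, and one of them belongs to a third party.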

This mapping exercise often reveals surprising vulnerabilities. Components that seemed inconsequential may prove critical when examined in the context of broader system dependencies. The goal is to gain comprehensive visibility into your infrastructure’s resilience profile.

🔧 Engineering Resilience Into Complex Systems

Once vulnerabilities are identified, the next step involves implementing resilience measures that prevent single-point failures from triggering cascade effects. This requires both technical solutions and organizational practices that prioritize robustness over pure efficiency.

Redundancy represents the most fundamental resilience strategy. Critical components should have backup systems ready to assume functionality if primary systems fail. This includes hardware redundancy, data replication, and failover mechanisms that activate automatically when problems are detected.
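Here is a minimal sketch of client-side automatic failover. The client tries each replica in priority order and moves on when one raises an error. The replica functions are hypothetical stand-ins for real endpoints. Production failover usually also involves timeouts, health checks, and backoff, which this sketch deliberately omits.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


class AllReplicasFailed(Exception):
    """Raised when no replica could serve the request."""


def call_with_failover(replicas: Sequence[Callable[[], T]]) -> T:
    """Try each replica in priority order; return the first successful result."""
    errors: list[Exception] = []
    for replica in replicas:
        try:
            return replica()
        except Exception as exc:  # a real client would catch narrower errors
            errors.append(exc)
    raise AllReplicasFailed(errors)


# Hypothetical endpoints standing in for two regions.
def primary_region() -> str:
    raise ConnectionError("primary region unreachable")


def secondary_region() -> str:
    return "served by secondary region"


print(call_with_failover([primary_region, secondary_region]))
# → served by secondary region
```

Keeping every collected error in `AllReplicasFailed` matters during an incident. It shows whether the replicas failed for the same reason, which is a hint that they are not as independent as assumed.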

However, effective redundancy goes beyond simple duplication. True resilience requires diversity—using different technologies, vendors, or approaches for backup systems. This prevents common-mode failures where a single bug, vulnerability, or design flaw affects both primary and backup systems simultaneously.
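The cost of common-mode failures can be made concrete with the beta-factor model, a standard simplification from reliability engineering that is used here for illustration. Assume each of two redundant units is unavailable with probability `p`, and that a fraction `beta` of that unavailability comes from causes shared by both units, such as the same bug or the same vendor outage. The pair goes down when the shared cause strikes, or when both independent parts happen to fail together.

```python
def pair_unavailability(p: float, beta: float) -> float:
    """Approximate unavailability of a 1-out-of-2 redundant pair (beta-factor model).

    p    -- unavailability of a single unit
    beta -- fraction of p caused by failures common to both units
    """
    independent = (1 - beta) * p
    common = beta * p
    # Down if the common cause occurs, or if both units fail independently.
    # The tiny overlap between the two terms is ignored, as is usual for small p.
    return common + independent ** 2


for beta in (0.0, 0.05, 0.1):
    print(f"beta={beta:.2f}: {pair_unavailability(0.01, beta):.6f}")
```

With `p = 0.01`, two perfectly independent units are down together about 0.0001 of the time. If only 10% of each unit's failures are shared, the result is about 0.0011, roughly ten times worse. Almost all of that comes from the common-cause term, which duplication alone cannot reduce and diversity can.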

Building Graceful Degradation Capabilities

Not every component can be fully redundant, making graceful degradation an essential resilience pattern. Systems designed with graceful degradation continue operating at reduced capacity when components fail, rather than experiencing complete breakdown.

For example, an e-commerce platform might disable personalized recommendations during a database failure while maintaining core purchasing functionality. This approach prioritizes critical functions and allows systems to continue providing value even under adverse conditions.
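The e-commerce example might look like the sketch below. The product page treats recommendations as optional and falls back to an empty list when the recommendation store fails, while the price lookup stays mandatory. `fetch_recommendations`, `fetch_price`, `DatabaseUnavailable`, and the page fields are hypothetical names used for illustration.

```python
import logging

logger = logging.getLogger("shop")


class DatabaseUnavailable(Exception):
    """Hypothetical error raised when the recommendation store is down."""


def fetch_recommendations(product_id: str) -> list[str]:
    # Hypothetical: always fails here to simulate the outage described above.
    raise DatabaseUnavailable("recommendation store timed out")


def fetch_price(product_id: str) -> float:
    return 19.99  # hypothetical core lookup that is still healthy


def product_page(product_id: str) -> dict[str, object]:
    page: dict[str, object] = {
        "product_id": product_id,
        "price": fetch_price(product_id),  # core function: let failures propagate
        "can_purchase": True,
    }
    try:
        page["recommendations"] = fetch_recommendations(product_id)
    except DatabaseUnavailable:
        # Optional feature: degrade instead of failing the whole page.
        logger.warning("recommendations disabled for %s", product_id)
        page["recommendations"] = []
    return page


print(product_page("sku-123"))
```

The key design decision is which calls sit inside the `try`. Catching errors around the core price lookup as well would hide a real outage behind a page that looks fine but cannot sell anything, so only the optional feature is guarded.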

Implementing graceful degradation requires careful prioritization of system functions and designing fallback behaviors for various failure scenarios. It demands rigorous testing to ensure degraded modes actually work as intended when needed.

📊 Monitoring and Early Warning Systems

Preventing cascade failures requires detecting problems before they spiral out of control. Comprehensive monitoring systems serve as early warning mechanisms, alerting teams to anomalies that might indicate emerging issues.

Effective monitoring extends beyond simple uptime checks. It should track performance metrics, error rates, resource utilization, and system dependencies in real time. Sophisticated monitoring solutions use anomaly detection algorithms to identify unusual patterns that might escape human observation.
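As a minimal example of anomaly detection, the sketch below flags an error-rate sample when it sits more than a chosen number of standard deviations above a rolling baseline. This is one simple technique among many, and commercial tools are far more elaborate. The window size, threshold, and sample values are assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, pstdev


class RollingZScoreDetector:
    """Flag samples that sit far above a rolling baseline (one-sided z-score)."""

    def __init__(self, window: int = 30, threshold: float = 3.0,
                 min_samples: int = 5) -> None:
        self.history: deque[float] = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Record a sample and return True if it looks anomalous."""
        anomalous = False
        if len(self.history) >= self.min_samples:  # need a baseline first
            mu = mean(self.history)
            sigma = pstdev(self.history) or 1e-9  # guard against a flat baseline
            anomalous = (value - mu) / sigma > self.threshold
        self.history.append(value)
        return anomalous


# Hypothetical error-rate samples (fraction of failed requests per minute).
detector = RollingZScoreDetector(window=30, threshold=3.0)
error_rates = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.010, 0.080]
print([detector.observe(r) for r in error_rates])
# → [False, False, False, False, False, False, False, True]
```

Because flagged samples are folded back into the baseline, a sustained spike eventually stops alerting. A real system might exclude anomalous samples from the window or also alert on how long an elevated rate persists.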

When monitoring systems detect potential issues, they should trigger automated responses where appropriate and alert human operators with clear, actionable information. The goal is to compress the time between problem emergence and corrective action, preventing small issues from amplifying into major incidents.
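One common automated response is the circuit breaker pattern. It is named here as an illustration rather than something this article prescribes. After a run of consecutive failures, the breaker "opens" and rejects calls immediately for a cool-down period, instead of letting requests pile up behind a dead dependency and exhaust the caller's own resources. The thresholds and the injectable clock are assumptions, and this sketch is single-threaded.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without contacting the dependency."""


class CircuitBreaker:
    """Minimal circuit breaker; not safe for concurrent use."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after      # seconds to stay open
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("dependency marked unhealthy; failing fast")
            # Cool-down over: let a trial call through ("half-open").
            # One further failure re-opens the breaker immediately.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # a success closes the breaker fully
        return result


def flaky_dependency() -> str:
    raise TimeoutError("downstream service not responding")  # hypothetical


fake_now = [0.0]  # controllable clock so the example is deterministic
breaker = CircuitBreaker(max_failures=2, reset_after=10.0, clock=lambda: fake_now[0])
for _ in range(2):
    try:
        breaker.call(flaky_dependency)
    except TimeoutError:
        pass
try:
    breaker.call(flaky_dependency)
except CircuitOpenError as exc:
    print("rejected:", exc)
```

Failing fast is the point. A caller that gets an immediate `CircuitOpenError` can serve a degraded response right away, rather than tying up threads and connections waiting on timeouts.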

The Role of Chaos Engineering

Chaos engineering represents a proactive approach to resilience testing. Rather than waiting for failures to occur naturally, teams intentionally introduce controlled failures into systems to observe their behavior and identify weaknesses.

This practice, popularized by companies like Netflix, involves systematically testing how systems respond to various failure scenarios. By deliberately causing component failures, network disruptions, or resource constraints in controlled environments, organizations can identify cascade vulnerabilities before they manifest in production.
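The core idea can be sketched in miniature as a wrapper that injects failures into a dependency at a configurable rate. A test can then confirm that callers degrade instead of crashing. This is a hypothetical toy, not Netflix's tooling. Real chaos experiments act on real infrastructure (terminating instances, adding network latency) with a deliberately limited blast radius. The seeded random generator keeps the run reproducible.

```python
import random
from typing import Callable, TypeVar

T = TypeVar("T")


class InjectedFault(ConnectionError):
    """A failure deliberately introduced by the experiment.

    Subclassing ConnectionError makes it look like a real network fault
    to the code under test.
    """


def with_chaos(fn: Callable[[], T], failure_rate: float,
               rng: random.Random) -> Callable[[], T]:
    """Wrap fn so each call fails with probability failure_rate."""
    def wrapped() -> T:
        if rng.random() < failure_rate:
            raise InjectedFault("injected by chaos experiment")
        return fn()
    return wrapped


def get_stock_level() -> int:
    return 42  # hypothetical stand-in for a real downstream call


def handle_request(stock_call: Callable[[], int]) -> str:
    """Code under test: should degrade, not crash, when the call fails."""
    try:
        return f"in stock: {stock_call()}"
    except ConnectionError:
        return "stock level temporarily unavailable"


# Seeded RNG so the experiment is reproducible.
flaky = with_chaos(get_stock_level, failure_rate=0.3, rng=random.Random(7))
responses = [handle_request(flaky) for _ in range(1000)]
degraded = sum(r.startswith("stock level") for r in responses)
print(f"{degraded} of {len(responses)} requests served in degraded mode")
```

If `handle_request` did not catch the error, the experiment would surface it as an unhandled exception, which is exactly the kind of weakness chaos engineering is meant to expose before a real outage does.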

🎯 Strategic Decision-Making Under Pressure

When cascade failures occur despite preventive measures, effective response depends on clear decision-making frameworks and practiced crisis management protocols. Organizations that successfully navigate catastrophic failures share common characteristics in their approach to crisis response.

First, they maintain clear decision authority and escalation paths. Everyone knows who’s authorized to make critical decisions during emergencies, preventing dangerous delays caused by uncertainty about authority. Second, they’ve practiced failure scenarios through tabletop exercises and drills, building institutional muscle memory for crisis response.

Third, effective organizations prioritize stabilization over rapid restoration. While the pressure to restore normal operations quickly is intense, hasty interventions often worsen situations. Successful crisis response focuses on stopping the cascade, establishing a stable baseline, and then methodically working toward full restoration.

💡 Learning From Failure: Post-Incident Analysis

Every cascade failure, however painful, represents a learning opportunity. Organizations that treat incidents as chances to improve their resilience emerge stronger and more prepared for future challenges.

Effective post-incident analysis goes beyond identifying immediate causes. It examines contributing factors, systemic weaknesses, and organizational dynamics that allowed a single-point failure to cascade. This requires creating a blameless culture where honest discussion about failures is encouraged rather than punished.

The analysis should result in concrete action items that address identified vulnerabilities. These might include technical improvements, process changes, additional training, or revised protocols. Critically, organizations must follow through on implementing these improvements rather than allowing recommendations to languish unaddressed.

🌐 The Future of Resilience in Interconnected Systems

As systems grow more complex and interconnected, the challenge of preventing cascade failures intensifies. Artificial intelligence, Internet of Things devices, and edge computing create new dependency webs that introduce novel vulnerabilities alongside their benefits.

Future resilience strategies will likely rely increasingly on AI-powered monitoring and response systems capable of detecting and mitigating cascade failures at electronic speeds. Machine learning models can identify subtle patterns indicating emerging problems and potentially trigger protective responses faster than human operators.

However, this increased automation introduces new risks. Systems that respond autonomously to detected threats might themselves trigger cascade failures through rapid, synchronized actions. Balancing automated response capabilities with appropriate human oversight will remain a critical challenge.
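A well-known guard against synchronized automated reactions is adding randomness to retry timing. Without it, thousands of clients that failed at the same moment retry in lockstep and can knock over the component that just recovered. The sketch below uses exponential backoff with "full jitter", in which each delay is drawn uniformly between zero and the exponential bound. The base delay and cap are assumed values.

```python
import random
from typing import Optional


def full_jitter_delays(attempts: int, base: float = 0.1, cap: float = 10.0,
                       rng: Optional[random.Random] = None) -> list[float]:
    """Retry delays in seconds: exponential backoff with full jitter.

    Retry n waits a uniformly random time in [0, min(cap, base * 2**n)],
    so clients that failed at the same moment spread their retries out
    instead of hitting the recovering service in synchronized waves.
    """
    rng = rng if rng is not None else random.Random()
    return [rng.uniform(0, min(cap, base * 2 ** n)) for n in range(attempts)]


# Two clients hit by the same outage get different retry schedules.
print([round(d, 3) for d in full_jitter_delays(5, rng=random.Random(1))])
print([round(d, 3) for d in full_jitter_delays(5, rng=random.Random(2))])
```

The exponential bound limits how hard each client pushes on a struggling service. The jitter breaks the synchronization between clients.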

🚀 Building a Culture of Resilience

Ultimately, preventing catastrophic cascade failures requires more than technical solutions—it demands cultivating an organizational culture that prioritizes resilience, learns from failures, and continuously improves systems based on operational experience.

This culture manifests in several ways. It means resisting the temptation to optimize purely for efficiency at the expense of redundancy and robustness. It involves investing in unglamorous but critical infrastructure improvements that may never be visibly utilized but provide essential protection when needed.

A resilience culture also embraces transparency about failures and near-misses. Rather than hiding incidents or punishing those involved, resilient organizations share lessons learned widely, contributing to collective knowledge about vulnerabilities and effective mitigation strategies.

As our reliance on complex, interconnected systems continues growing, understanding how single-point failures amplify into catastrophic breakdowns becomes ever more critical. By identifying vulnerabilities, implementing robust resilience measures, maintaining vigilant monitoring, and cultivating organizational cultures that prioritize reliability, we can build systems capable of withstanding inevitable failures without cascading into widespread catastrophe.

The path forward requires acknowledging that perfect reliability is impossible. Systems will fail. The question is whether those failures remain contained and manageable or amplify into disasters that affect millions. Through thoughtful design, proactive testing, comprehensive monitoring, and continuous learning, we can tip the balance toward resilience, creating infrastructure that bends under pressure rather than breaking catastrophically. 🎯

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations. With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge.

As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques. His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits

- The strategic value of Decision Support Effectiveness

- The cascading dangers of Error Amplification Risks

- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.