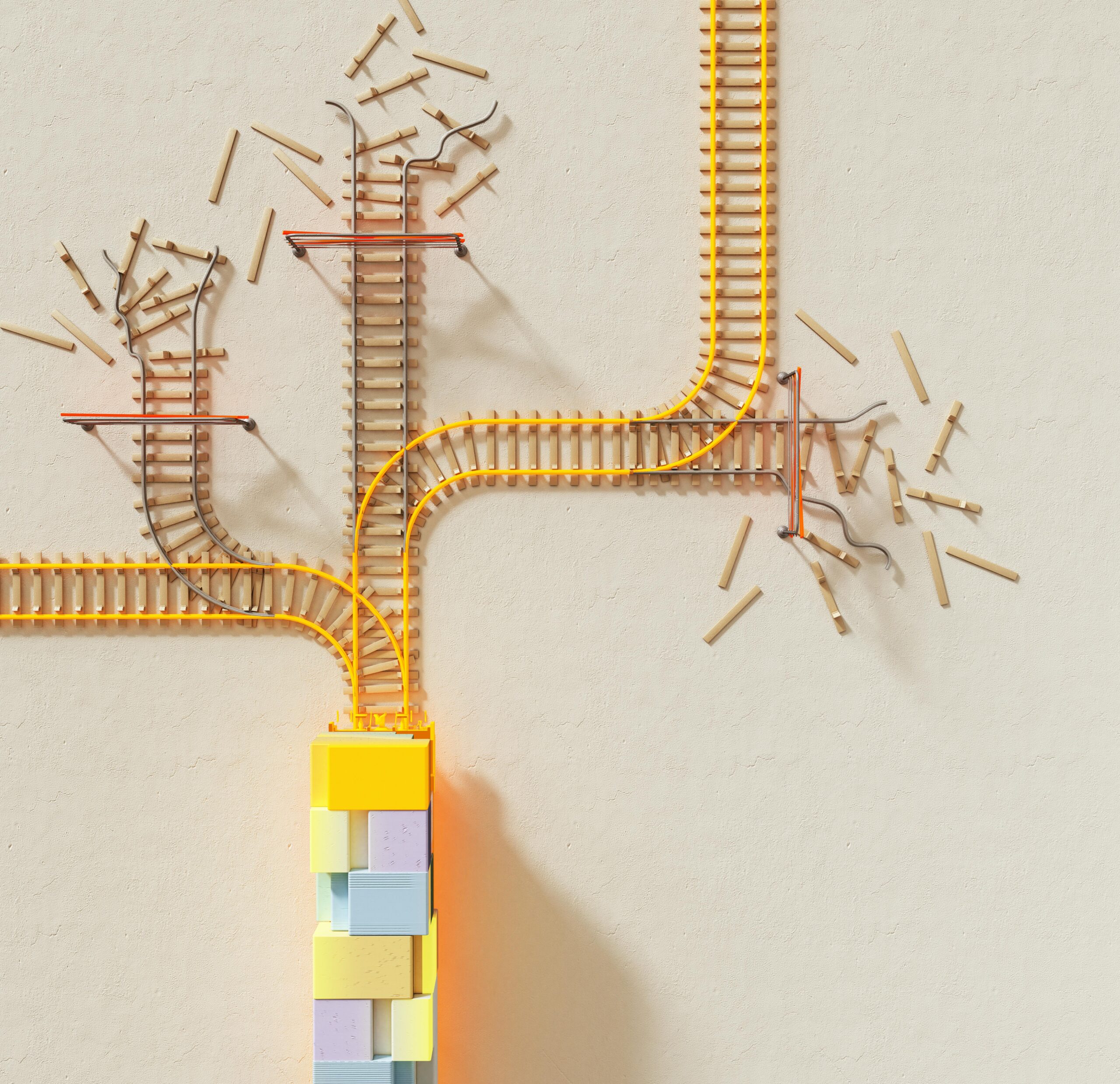

Data inaccuracies at the source create cascading failures throughout organizations, undermining decision-making processes and multiplying operational risks in ways that often remain invisible until significant damage occurs.

In today’s data-driven business landscape, organizations rely heavily on information flowing through their systems to make critical decisions. However, when that foundational data contains errors, inconsistencies, or gaps, the consequences ripple outward like waves from a stone thrown into still water. Each subsequent analysis, report, and decision built upon flawed upstream data becomes increasingly unreliable, creating a compounding effect that can devastate business outcomes.

Understanding how these upstream inaccuracies propagate through organizational systems has become essential for business leaders, data professionals, and risk managers alike. The challenge isn’t simply about correcting individual errors—it’s about recognizing how small data quality issues at the source can amplify into strategic missteps, financial losses, and reputational damage downstream.

🔍 The Hidden Origins of Data Quality Problems

Upstream data inaccuracies rarely announce themselves with fanfare. Instead, they enter organizational systems quietly through various pathways: manual data entry errors, outdated integration protocols, legacy system incompatibilities, or simply insufficient data validation at collection points. These entry points represent the “upstream” sources where data first enters the business ecosystem.

The problem intensifies because many organizations lack comprehensive data governance frameworks at these critical junctures. Without proper validation rules, standardization protocols, or quality checkpoints, erroneous data flows freely into databases, data warehouses, and analytical systems. Once embedded in these repositories, bad data becomes legitimized, trusted, and distributed throughout the organization.

Consider a customer relationship management system where sales representatives manually enter client information. A simple typo in an email address, an incorrectly formatted phone number, or a misspelled company name might seem trivial at the moment of entry. However, that single error will propagate through marketing automation systems, billing platforms, customer service databases, and analytics dashboards—each system treating the flawed data as truth.

Common Upstream Data Sources and Their Vulnerabilities

Different data sources present unique vulnerability profiles. Transactional systems processing high volumes of information may introduce errors through timing issues or incomplete transactions. IoT devices and sensors can transmit inaccurate readings due to calibration problems or environmental interference. Third-party data feeds might contain outdated information or follow incompatible formatting standards.

Human-mediated data entry remains one of the most error-prone sources. Commonly cited estimates put manual data entry error rates at roughly 1% to 5%, depending on the complexity of the fields and the context of entry. When millions of records flow through these systems, even a 1% error rate translates to tens of thousands of flawed records influencing business decisions.
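To make that arithmetic concrete, here is a minimal Python sketch. The 2,000,000-record volume and the function name `expected_flawed_records` are illustrative assumptions, not figures from any particular system.

```python
def expected_flawed_records(total_records: int, error_rate: float) -> int:
    """Expected number of flawed records at a given per-record error rate."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return round(total_records * error_rate)


if __name__ == "__main__":
    volume = 2_000_000  # hypothetical record count
    for rate in (0.01, 0.05):
        flawed = expected_flawed_records(volume, rate)
        print(f"{rate:.0%} error rate on {volume:,} records: about {flawed:,} flawed")
```

At the low end of the range, this assumed volume already yields about 20,000 flawed records; at the high end, about 100,000.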

⚙️ The Amplification Mechanism: How Small Errors Become Big Problems

The ripple effect of upstream data inaccuracies operates through a process often called “error propagation.” Each time flawed data passes through a transformation, aggregation, or analytical process, the original inaccuracy persists, and it can be amplified as it combines with other values and spreads into every output that depends on it.

Imagine a manufacturing company where production quantity data contains a 2% systematic overestimation at the source. This seemingly minor discrepancy flows into inventory management systems, creating phantom stock that doesn’t actually exist. The inventory system then feeds purchasing algorithms, which under-order materials based on inflated stock levels. Simultaneously, the flawed production data influences demand forecasting models, distorting future production schedules. Financial systems calculate margins based on inaccurate production volumes, presenting misleading profitability metrics to leadership.

Within weeks, this single upstream inaccuracy cascades into material shortages, production delays, disappointed customers, and strategic decisions based on fundamentally flawed financial performance data. The 2% error at the source has amplified into operational chaos affecting multiple business functions.
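A rough simulation can show why a constant 2% overstatement does not stay at 2%. This is a toy model under stated assumptions: a plant that really makes 1,000 units a week and ships all of them, so true stock stays at zero while the books drift upward. The function name and its parameters are hypothetical.

```python
def phantom_stock_by_week(actual_units_per_week: float,
                          weeks: int,
                          overestimate: float = 0.02) -> list[float]:
    """Book inventory that does not physically exist, week by week.

    Assumes every unit actually produced is shipped, so real stock is
    always zero and the books grow only by the overstated amount.
    """
    book_stock = 0.0
    history = []
    for _ in range(weeks):
        reported = actual_units_per_week * (1 + overestimate)
        shipped = actual_units_per_week  # real output leaves the building
        book_stock += reported - shipped
        history.append(book_stock)
    return history


if __name__ == "__main__":
    for week, units in enumerate(phantom_stock_by_week(1000, 8), start=1):
        print(f"week {week}: {units:.0f} phantom units on the books")
```

With these assumed numbers the books show about 20 phantom units after the first week and about 160 after eight, which is exactly the kind of gap a purchasing algorithm would read as real stock.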

Mathematical Compounding of Data Errors

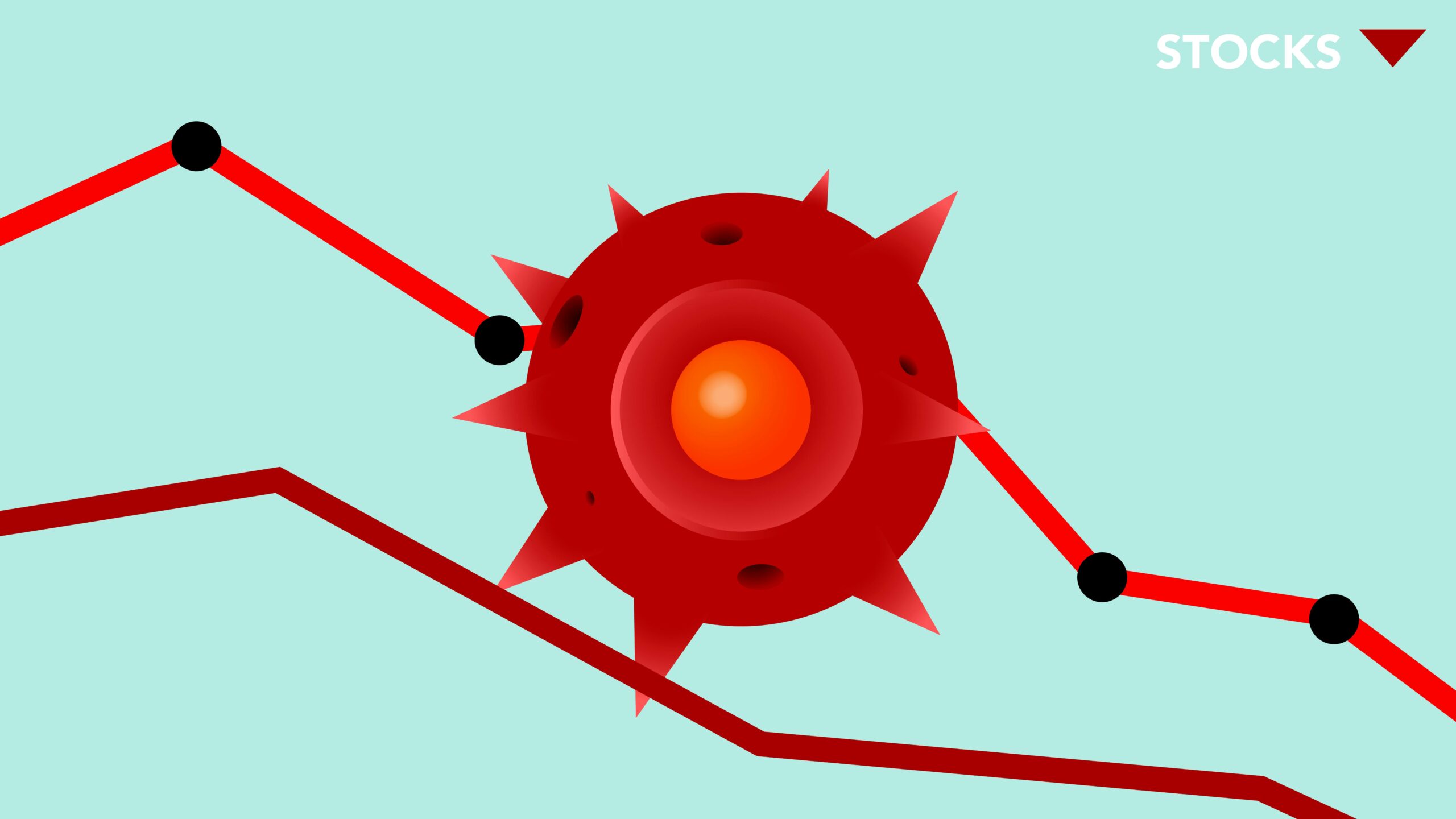

When multiple data sources with their own error rates feed a single analytical process, the inaccuracy accumulates. If three sources each carry a 3% error rate and their errors are independent, about 8.7% of combined records (1 − 0.97³) are touched by at least one error, close to the 9% a simple sum suggests. The effective error in the output can be far higher than that when downstream calculations magnify it, for example when a metric is computed as a small difference or ratio between two large, imperfect numbers.

This mathematical reality makes downstream analytics increasingly unreliable. Business intelligence dashboards, predictive models, and automated decision systems all inherit and amplify the inaccuracies present in their source data. The further downstream an analysis sits from the original data sources, the more opportunities exist for error accumulation.
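The arithmetic can be sketched in a few lines of Python. Two simplifying assumptions apply: errors in the sources are independent, and a combined record counts as flawed if any contributing source is wrong. The second function shows how a derived metric can magnify a small input error, using made-up revenue and cost figures. All names here are illustrative.

```python
from math import prod


def share_with_any_error(error_rates: list[float]) -> float:
    """Share of combined records touched by at least one source error,
    assuming errors in each source occur independently."""
    return 1 - prod(1 - p for p in error_rates)


def relative_error(true_value: float, observed_value: float) -> float:
    """Size of the error as a fraction of the true value."""
    return abs(observed_value - true_value) / abs(true_value)


if __name__ == "__main__":
    combined = share_with_any_error([0.03, 0.03, 0.03])
    print(f"Records with at least one error: {combined:.1%}")

    # Hypothetical figures: revenue is overstated by 3%, cost is exact.
    true_margin = 100.0 - 90.0
    observed_margin = 103.0 - 90.0
    print(f"Margin error: {relative_error(true_margin, observed_margin):.0%}")
```

Under independence, three 3% sources leave roughly 8.7% of combined records affected. The larger danger is the second case: a 3% overstatement in revenue becomes a 30% overstatement in margin, because subtracting two similar-sized numbers magnifies relative error.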

💼 Business Decision-Making Under the Shadow of Bad Data

Executives and managers make strategic decisions daily based on reports, dashboards, and analyses they trust to reflect business reality accurately. When upstream data inaccuracies contaminate these information sources, decision-makers operate with a distorted view of their organization’s performance, market position, and operational health.

The consequences manifest across all business functions. Marketing teams might allocate budgets toward campaigns targeting customer segments that don’t actually match their data profiles. Sales forecasts built on corrupted pipeline data lead to misaligned resource allocation and missed revenue targets. Product development teams might prioritize features based on inaccurate usage analytics, investing resources in directions that don’t serve actual customer needs.

Financial planning becomes particularly vulnerable to upstream data quality issues. Budget projections, cost analyses, and profitability assessments all depend on accurate operational data flowing from source systems. When that foundational data contains systematic errors, the resulting financial models can guide organizations toward strategically unsound decisions—expanding unprofitable product lines, under-investing in high-performing areas, or misidentifying cost-reduction opportunities.

The Confidence Problem in Data-Driven Cultures

Organizations that discover significant data quality issues face a secondary crisis: erosion of trust in data-driven decision-making itself. Once stakeholders recognize that their trusted dashboards and reports contain unreliable information, skepticism spreads. Managers begin second-guessing analytical insights, reverting to intuition-based decision-making, and questioning the value of data infrastructure investments.

This confidence crisis can paralyze organizations, creating debates about data reliability that delay critical decisions. Teams waste valuable time validating information that should be trusted, duplicating analytical work, and conducting manual audits to verify automated system outputs. The operational friction introduced by pervasive data quality concerns can negate many benefits organizations hoped to achieve through digital transformation initiatives.

⚠️ Quantifying Business Risks From Data Inaccuracies

The business risks stemming from upstream data inaccuracies extend beyond poor decisions into concrete financial, operational, compliance, and reputational domains. Understanding these risk categories helps organizations prioritize data quality initiatives and justify necessary investments in data governance infrastructure.

Financial risks manifest through direct monetary losses: incorrect billing, misallocated marketing spend, inefficient inventory management, and flawed pricing strategies. Organizations have reported millions in annual losses attributable to data quality issues, with some industry estimates suggesting that poor data quality costs businesses 15-25% of their revenue.

Operational risks emerge as data-driven automation systems make incorrect decisions at scale. Algorithmic trading systems can execute massive losing positions based on corrupted market data. Supply chain optimization platforms might route shipments inefficiently due to inaccurate location information. Customer service automation could frustrate clients by acting on outdated account information.

Compliance and Regulatory Exposure

Regulatory compliance represents an increasingly critical risk dimension. Financial services organizations must report accurate transaction data to regulators. Healthcare providers face strict requirements around patient information accuracy. Privacy regulations like GDPR mandate that organizations maintain accurate personal data and correct inaccuracies upon request.

When upstream data quality issues compromise compliance reporting, organizations face potential fines, legal action, and regulatory sanctions. Beyond direct penalties, compliance failures damage organizational reputation and erode stakeholder trust—consequences that can persist long after specific violations are remediated.

🛡️ Building Upstream Data Quality Defenses

Addressing the ripple effect requires organizations to shift their data quality focus upstream toward prevention rather than downstream toward detection and correction. This strategic reorientation emphasizes catching and correcting errors at their source before they enter organizational systems and begin propagating.

Implementing robust data validation at collection points represents the first line of defense. Systems should enforce data type requirements, format standards, and logical constraints before accepting information. Real-time validation provides immediate feedback to data entry personnel or connected systems, enabling corrections before flawed data persists in databases.
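As a minimal sketch of validation at the point of entry, the Python function below checks a contact record before it would be saved. The field names (`company`, `email`, `phone`), the deliberately loose email pattern, and the 7 to 15 digit phone rule are assumptions chosen for illustration, not a complete validation policy.

```python
import re

# Loose shape check only: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def validate_contact(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = []

    company = str(record.get("company") or "").strip()
    if not company:
        problems.append("company: required")

    email = str(record.get("email") or "").strip()
    if not EMAIL_PATTERN.fullmatch(email):
        problems.append("email: not a plausible address")

    # Strip punctuation and spaces, then check the digit count.
    digits = re.sub(r"\D", "", str(record.get("phone") or ""))
    if not 7 <= len(digits) <= 15:
        problems.append("phone: expected 7 to 15 digits")

    return problems


if __name__ == "__main__":
    entry = {"company": "Acme", "email": "jane.doe@example", "phone": "555-0100"}
    for problem in validate_contact(entry):
        print("rejected:", problem)
```

Returning every problem at once, rather than stopping at the first, gives the person entering the data a single round of feedback to fix before the record is stored.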

Standardization protocols ensure consistency across data sources. Establishing and enforcing common formats for dates, addresses, product codes, and other frequently used data elements prevents compatibility issues and reduces transformation errors as data moves between systems. Master data management practices create authoritative reference sources that maintain consistent entity definitions across the organization.
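Date handling is a common place to start with standardization. The sketch below converts a few input formats to ISO 8601 and refuses anything it does not recognize. The list of accepted formats is an assumption, and so is the choice to read slash-separated dates as day first; a real protocol would need to settle that per source.

```python
from datetime import datetime

# Assumed input formats, tried in order. Slash dates are read day-first.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def to_iso_date(raw: str) -> str:
    """Convert a date string in a known format to YYYY-MM-DD."""
    text = raw.strip()
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # not this format, try the next one
    # Fail loudly instead of guessing, so bad values stop at the source.
    raise ValueError(f"unrecognized date format: {raw!r}")


if __name__ == "__main__":
    for value in ("2024-03-05", "05/03/2024", "20240305"):
        print(value, "->", to_iso_date(value))
```

A value such as 03/04/2024 shows why the convention has to be explicit: this sketch reads it as 3 April, while a month-first source would mean 4 March. Rejecting unknown formats outright is a design choice that favors a visible failure over a silently wrong date.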

Automated Quality Monitoring and Alerting

Organizations need continuous visibility into data quality metrics across their upstream sources. Automated monitoring systems can track error rates, completeness levels, consistency patterns, and anomalous values in real-time. When quality thresholds are breached, automated alerts notify data stewards and system administrators, enabling rapid investigation and remediation.

These monitoring capabilities should encompass both technical data quality dimensions (format correctness, referential integrity, completeness) and business rule compliance (logical consistency, reasonable value ranges, temporal validity). By tracking quality metrics longitudinally, organizations can identify degrading data sources before quality issues reach critical levels.
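A monitoring check of this kind can be sketched as two small Python functions: one that scores a batch of records, and one that compares the scores against minimum acceptable rates. The metric names, field names, and thresholds are hypothetical, and a real system would send the result to an alerting channel rather than print it.

```python
def quality_metrics(rows: list[dict],
                    required_fields: list[str],
                    value_ranges: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Return completeness and range-validity rates for one batch."""
    total = len(rows)
    if total == 0:
        # An empty batch scores zero so a silent feed gets flagged too.
        return {"completeness": 0.0, "range_validity": 0.0}

    def is_complete(row: dict) -> bool:
        return all(row.get(field) not in (None, "") for field in required_fields)

    def is_in_range(row: dict) -> bool:
        for field, (low, high) in value_ranges.items():
            value = row.get(field)
            if not isinstance(value, (int, float)) or not low <= value <= high:
                return False
        return True

    return {
        "completeness": sum(is_complete(r) for r in rows) / total,
        "range_validity": sum(is_in_range(r) for r in rows) / total,
    }


def breached_thresholds(metrics: dict[str, float],
                        minimums: dict[str, float]) -> list[str]:
    """Names of metrics that fell below their minimum acceptable rate."""
    return [name for name, floor in minimums.items() if metrics[name] < floor]


if __name__ == "__main__":
    batch = [
        {"sku": "A1", "qty": 10},
        {"sku": "", "qty": 5},     # missing SKU
        {"sku": "B2", "qty": -3},  # impossible quantity
        {"sku": "C3", "qty": 40},
    ]
    metrics = quality_metrics(batch, ["sku", "qty"], {"qty": (0, 10_000)})
    for name in breached_thresholds(metrics, {"completeness": 0.9, "range_validity": 0.7}):
        print(f"ALERT: {name} at {metrics[name]:.0%}")
```

Storing the metrics from each run, rather than only the alerts, is what makes it possible to spot a source whose quality is slowly degrading before it crosses a threshold.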

📊 Creating Organizational Accountability for Data Quality

Technology solutions alone cannot solve upstream data quality challenges. Sustainable improvements require organizational structures, processes, and cultural elements that make data quality everyone’s responsibility while assigning clear accountability for specific data domains.

Data governance frameworks establish policies, standards, and procedures that guide how organizations create, maintain, and use data assets. These frameworks define data ownership, assigning specific individuals or teams responsibility for the quality of particular data sets. Data stewards act as champions for their assigned domains, monitoring quality, coordinating improvements, and serving as escalation points for quality issues.

Beyond formal governance structures, organizations must cultivate data quality awareness across their workforce. Employees at all levels need to understand how their data-related actions affect downstream processes and decisions. Training programs should emphasize the business impact of data quality, making the connection between seemingly minor errors and significant business consequences.

Incentivizing Quality at the Source

Performance management systems should incorporate data quality metrics for roles that create or manage upstream data. Customer service representatives, data entry personnel, sales teams, and others who directly contribute data to organizational systems need clear expectations around accuracy and completeness. When data quality becomes part of performance evaluations, individuals have concrete incentives to prioritize accuracy.

Recognition programs that celebrate data quality improvements can reinforce positive behaviors. Sharing success stories about prevented errors or quality initiatives that delivered business value helps demonstrate the tangible importance of data quality work and encourages broader organizational engagement.

🔄 Continuous Improvement Through Root Cause Analysis

When data quality issues emerge, organizations should resist the temptation to simply correct the immediate error and move forward. Each quality incident represents an opportunity to understand and address systemic vulnerabilities that allowed the error to occur and propagate.

Root cause analysis methodologies help organizations trace quality issues back to their origins. Rather than treating symptoms, these investigations identify the underlying process failures, system design flaws, or training gaps that enabled errors to enter upstream systems. Addressing root causes prevents recurrence and progressively strengthens the overall data quality posture.

Documentation of quality incidents, investigations, and remediation actions creates organizational learning. Over time, this knowledge base reveals patterns in data quality challenges, highlighting areas requiring systemic intervention rather than reactive fixes. Organizations can prioritize improvement initiatives based on empirical evidence about their most significant quality vulnerabilities and their business impact.

🚀 Transforming Data Quality From Cost Center to Competitive Advantage

Organizations that successfully address upstream data quality transform a defensive necessity into a strategic asset. Superior data quality enables faster, more confident decision-making. It reduces operational friction, allowing automation and analytics initiatives to deliver their full potential value. It minimizes waste from errors, rework, and missed opportunities.

In competitive markets, data quality can differentiate organizations. Companies with superior customer data can deliver more personalized experiences and more effective marketing. Organizations with accurate operational data can optimize processes more effectively, reducing costs and improving service delivery. Businesses with reliable financial data can respond more nimbly to market changes and opportunities.

The journey from reactive data quality management to proactive upstream quality assurance requires sustained commitment, appropriate investments, and cultural change. However, organizations that successfully make this transition position themselves to extract maximum value from their data assets while minimizing the risks that inaccurate data introduces into their operations and decisions.

Understanding the ripple effect of upstream data inaccuracies fundamentally changes how organizations approach data quality. Rather than viewing it as a technical problem to be solved by IT departments, it becomes recognized as a cross-functional business imperative affecting strategy, operations, compliance, and competitive positioning. By addressing data quality at its source and preventing errors from entering organizational systems, businesses can ensure that their data-driven decisions rest on a foundation of reliable, accurate information that truly reflects business reality.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically-focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to the perceptual challenges of Cognitive Interpretation Limits, the strategic value of Decision Support Effectiveness, the cascading dangers of Error Amplification Risks, and the deliberate craft of Manual Chart Construction.

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.