Data shapes most major business decisions today. Yet selective data inclusion, a practice that often goes unnoticed, can undermine even the most sophisticated analytics strategies.

🔍 Understanding the Shadow Side of Data Selection

In an era where data-driven decision-making has become the gold standard, organizations collect massive volumes of information every day. But here’s the uncomfortable truth: not all data makes it into the final analysis. The process of choosing which data to include and exclude—whether intentional or accidental—creates hidden vulnerabilities that can distort reality and lead to costly mistakes.

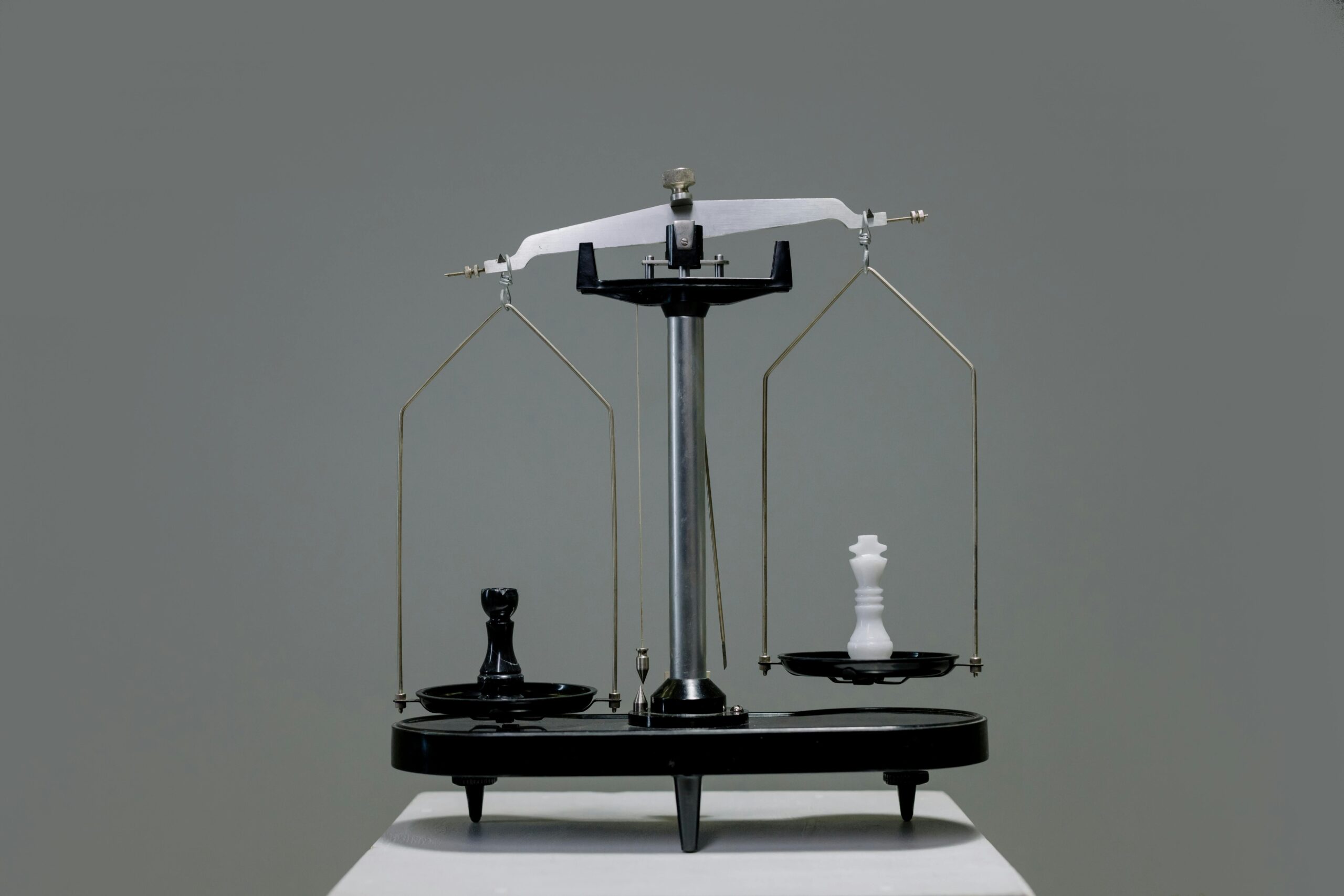

Selective data inclusion refers to the practice of incorporating only certain datasets, variables, or observations into analysis while omitting others. Sometimes this happens deliberately, driven by confirmation bias or organizational politics. Other times, it occurs unconsciously through technical limitations, accessibility issues, or simple oversight. Regardless of intent, the consequences remain the same: incomplete pictures that masquerade as comprehensive insights.

The challenge extends beyond simple data collection. Modern organizations deal with structured databases, unstructured social media feeds, IoT sensor readings, customer interactions, and countless other sources. Each decision about what to measure, what to store, and what to analyze creates potential blind spots that accumulate over time.

💼 Why Organizations Fall Into the Selection Trap

Several psychological and organizational factors drive selective data practices. Understanding these root causes helps businesses recognize and address the problem before it undermines decision quality.

Confirmation Bias in Corporate Culture

Teams naturally gravitate toward information that validates existing beliefs and strategies. When quarterly results need to impress stakeholders, analysts might unconsciously emphasize positive metrics while downplaying concerning trends. This creates echo chambers where leadership receives filtered versions of reality that reinforce predetermined conclusions.

Marketing departments might focus exclusively on engagement metrics that show growth, ignoring customer churn data that tells a different story. Product teams could prioritize feature usage statistics from power users while neglecting feedback from the silent majority who struggle with basic functionality.

Technical and Resource Constraints

Not every organization possesses the infrastructure to capture and process all relevant data. Legacy systems might exclude valuable information simply because integration proves too expensive or complex. Data silos across departments create artificial boundaries that prevent holistic analysis.

Small businesses particularly struggle with this challenge. Limited budgets force difficult choices about which analytics tools to implement and which data sources to prioritize. These practical limitations inevitably shape what gets measured and analyzed.

The Convenience Factor

Human nature favors easily accessible information over data that requires significant effort to obtain. Analysts work with readily available datasets rather than pursuing harder-to-reach information that might provide more accurate insights. This path of least resistance systematically biases analysis toward convenience rather than comprehensiveness.

Digital data flows automatically into databases, while crucial qualitative feedback from frontline employees might exist only in scattered emails or verbal reports. The disparity in accessibility means quantitative metrics often dominate decision-making regardless of whether they tell the complete story.

🚨 Real-World Consequences of Incomplete Data Pictures

The impact of selective data inclusion extends far beyond theoretical concerns. Organizations across industries have experienced tangible consequences when critical information disappeared from their analytical frameworks.

Strategic Miscalculations

Retail chains have opened stores in seemingly promising locations based on demographic data and traffic patterns, only to see those stores fail because the analysis left out local competition data or cultural factors that didn’t fit neatly into spreadsheets. Financial institutions have approved loans using incomplete risk profiles, leading to default rates far exceeding projections.

Product launches built on selective customer feedback have failed because companies listened only to their most vocal advocates while ignoring the unvoiced concerns of potential mainstream buyers. These failures share a common thread: decisions made on partial information presented as complete analysis.

Compliance and Legal Vulnerabilities

Regulatory environments increasingly require organizations to demonstrate comprehensive data practices. Selective reporting can trigger investigations, fines, and reputational damage. Healthcare providers face severe penalties when patient outcome data appears cherry-picked to inflate performance metrics.

Financial services organizations must prove their risk assessments consider all material factors. Excluding inconvenient data points doesn’t just compromise decision quality—it creates legal liability that can threaten organizational survival.

Eroded Stakeholder Trust

When selective data practices eventually come to light, the damage to credibility can prove irreparable. Employees lose faith in leadership that makes decisions based on incomplete information. Customers abandon brands caught manipulating data narratives. Investors flee companies whose reported metrics don’t align with underlying reality.

Rebuilding trust after data integrity breaches requires years of transparent practice. The short-term gains from favorable but incomplete data never justify the long-term cost to organizational reputation.

🛡️ Building Robust Frameworks for Data Integrity

Protecting against selective data inclusion requires systematic approaches that institutionalize comprehensive practices rather than relying on individual vigilance.

Establishing Clear Data Governance Policies

Organizations need explicit protocols defining what data gets collected, how it’s processed, and what criteria justify exclusions. These policies should be documented, accessible, and regularly reviewed by cross-functional teams that represent diverse perspectives.

Effective governance frameworks include audit trails that track data lineage from collection through analysis. When data gets excluded, the reasons should be documented and reviewable. This transparency creates accountability that discourages convenient omissions.
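One way to make documented exclusions concrete is a small exclusion log that refuses any omission without a stated reason. The sketch below is illustrative only: the class names, fields, and the sample entry are hypothetical, and a real governance system would likely store these records in a database rather than in memory.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ExclusionRecord:
    """One documented decision to leave data out of an analysis."""
    dataset: str
    rows_excluded: int
    reason: str
    decided_by: str
    decided_on: date


@dataclass
class ExclusionLog:
    """Append-only list of exclusion decisions for a single analysis."""
    analysis: str
    records: list[ExclusionRecord] = field(default_factory=list)

    def exclude(self, dataset, rows_excluded, reason, decided_by, decided_on):
        # A blank reason cannot be reviewed later, so reject it outright.
        if not reason.strip():
            raise ValueError("an exclusion needs a documented reason")
        record = ExclusionRecord(dataset, rows_excluded, reason, decided_by, decided_on)
        self.records.append(record)
        return record

    def total_excluded(self):
        """Total number of rows left out across all recorded decisions."""
        return sum(r.rows_excluded for r in self.records)


# Hypothetical entry, for illustration only.
log = ExclusionLog(analysis="Q3 churn review")
log.exclude(
    dataset="support_tickets",
    rows_excluded=120,
    reason="duplicate tickets created during a system migration",
    decided_by="analyst_a",
    decided_on=date(2024, 10, 1),
)
print(log.total_excluded())
```

The design choice worth noting is that the reason is mandatory at the moment of exclusion, which is what turns an omission into something a reviewer can later question.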

Data governance committees should include members from various departments and levels. This diversity helps identify blind spots that homogeneous groups might miss. Regular governance reviews ensure policies evolve alongside changing business needs and technological capabilities.

Implementing Systematic Quality Checks

Automated validation processes can flag potential selection issues before they influence decisions. Statistical techniques identify unusual patterns that might indicate missing data categories. Peer review processes create multiple sets of eyes examining analytical approaches.

Quality frameworks should include both technical validation and business logic reviews. Technical checks ensure data completeness at the mechanical level, while business reviews assess whether the included information adequately represents the phenomena being studied.
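A simple automated check of this kind compares how each customer segment is represented in the analyzed sample against its share of the full population. The following is a minimal sketch under stated assumptions: the function name, the 0.5 tolerance, and the segment labels are all hypothetical, and the data is made up to illustrate the power-user problem described earlier.

```python
from collections import Counter


def underrepresented_segments(population, sample, tolerance=0.5):
    """Return segments whose share of the sample is below
    tolerance * their share of the population.

    The result maps each flagged segment to (population_share, sample_share).
    """
    pop_counts = Counter(population)
    sample_counts = Counter(sample)
    pop_total = len(population)
    sample_total = len(sample)

    flagged = {}
    for segment, count in pop_counts.items():
        pop_share = count / pop_total
        # An empty sample represents nobody, so every share is zero.
        sample_share = sample_counts.get(segment, 0) / sample_total if sample_total else 0.0
        if sample_share < tolerance * pop_share:
            flagged[segment] = (pop_share, sample_share)
    return flagged


# Illustrative data: feedback dominated by power users.
customers = ["power"] * 10 + ["casual"] * 70 + ["new"] * 20
feedback = ["power"] * 8 + ["casual"] * 2

flagged = underrepresented_segments(customers, feedback)
print(sorted(flagged))
```

A check like this covers only the mechanical side. Whether the flagged gap matters for the decision at hand remains a question for the business review.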

Creating Psychological Safety for Dissent

Teams must feel empowered to challenge analytical approaches without fear of retaliation. When junior analysts can question senior leaders about excluded data, organizations benefit from bottom-up quality control that catches problems early.

This cultural shift requires explicit commitment from leadership. Executives should publicly reward people who identify data gaps and challenge incomplete analyses, even when the findings prove inconvenient. These visible endorsements establish norms that value accuracy over comfort.

📊 Practical Strategies for Comprehensive Data Inclusion

Beyond governance frameworks, organizations can implement specific practices that systematically reduce selection bias and improve data comprehensiveness.

The Inverted Analysis Approach

Start by identifying what conclusions you hope to avoid, then actively seek data that might support those unwanted findings. This counterintuitive method forces teams to confront information they might naturally overlook. If the avoided conclusions prove unsupported by comprehensive data, confidence in the actual findings increases.

Product teams might ask: “What data would prove our new feature is failing?” Then they actively collect and analyze that information alongside positive metrics. Marketing departments could seek evidence that campaigns aren’t working before celebrating apparent successes.

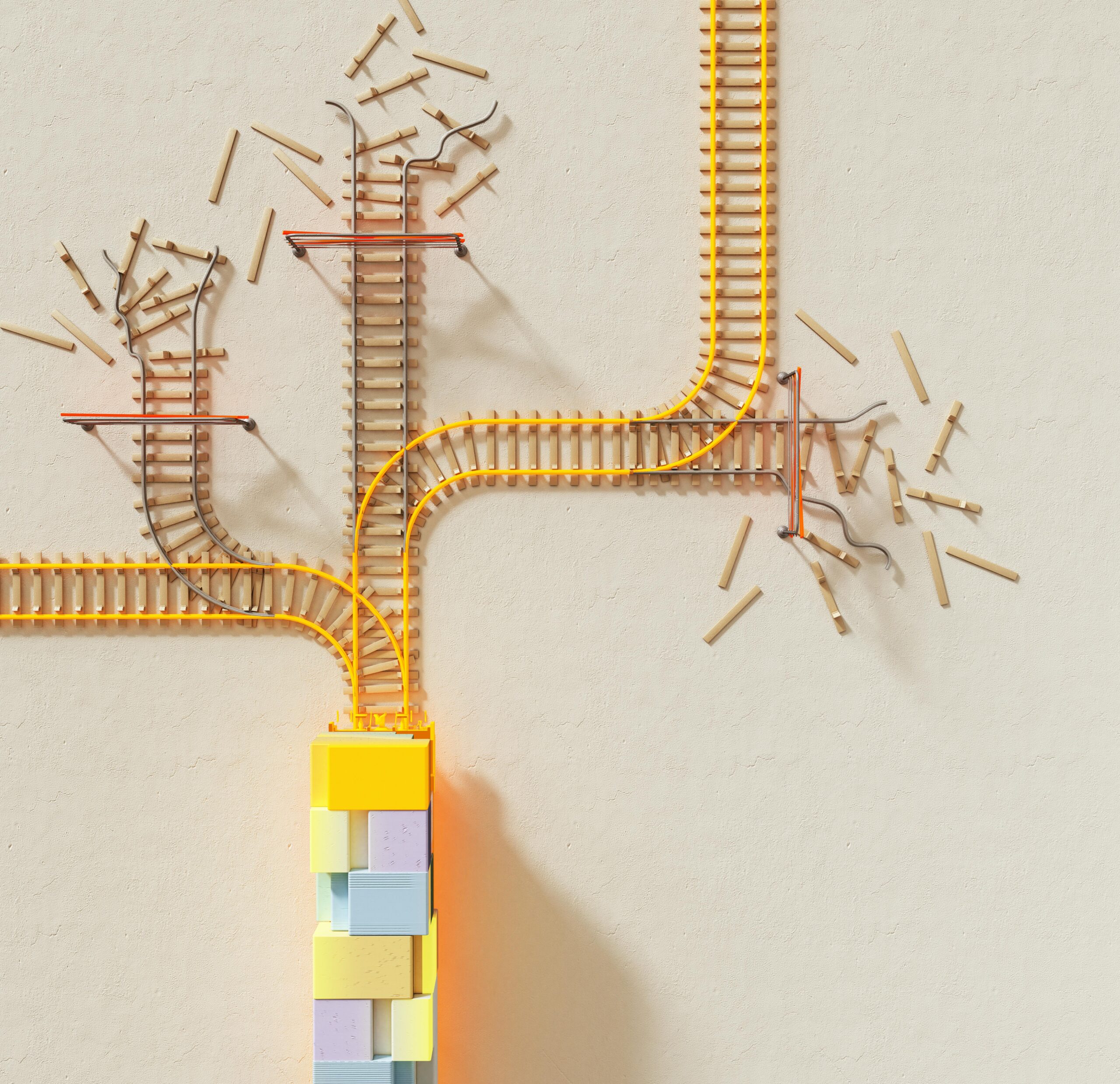

Structured Data Source Mapping

Create visual inventories of all potential data sources relevant to key decisions. Map what’s currently included in analysis versus what exists but remains untapped. This exercise reveals systematic gaps and helps prioritize integration efforts.

These maps should distinguish between data that’s truly unavailable versus information that’s simply inconvenient to access. The latter category represents low-hanging fruit for improving analytical comprehensiveness without major infrastructure investments.
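Before drawing the visual map, the inventory itself can live in a very small data structure. The sketch below assumes a three-way status (included, inconvenient, unavailable); the source names and status labels are hypothetical examples, not a recommended taxonomy.

```python
from enum import Enum


class Status(Enum):
    INCLUDED = "included in current analysis"
    INCONVENIENT = "exists but is awkward or costly to access"
    UNAVAILABLE = "not collected or not obtainable"


# Hypothetical inventory for one decision area.
source_map = {
    "crm_transactions": Status.INCLUDED,
    "web_analytics": Status.INCLUDED,
    "frontline_staff_emails": Status.INCONVENIENT,
    "churn_exit_surveys": Status.INCONVENIENT,
    "competitor_pricing": Status.UNAVAILABLE,
}


def sources_with(status, mapping):
    """Return the names of all sources that carry the given status, sorted."""
    return sorted(name for name, s in mapping.items() if s is status)


# Sources that exist but are merely inconvenient are the likely quick wins.
quick_wins = sources_with(Status.INCONVENIENT, source_map)
print(quick_wins)
```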

Diversity in Analytical Teams

Homogeneous teams share similar blind spots. Building diverse analytics groups—across demographics, functional backgrounds, and thinking styles—naturally incorporates varied perspectives that question exclusions others might not notice.

Someone with operations experience will think about data differently than a pure statistician. Marketing professionals identify customer information gaps that engineers might miss. This cognitive diversity serves as organic quality control against selective practices.

Regular Red Team Reviews

Designate rotating team members to specifically challenge analyses and identify excluded data that might change conclusions. These “red team” reviewers adopt an adversarial mindset, attempting to poke holes in proposed insights before they influence major decisions.

The red team role should rotate to prevent both burnout and the perception that certain people are just contrarians. Everyone takes turns defending their work and challenging others, normalizing rigorous scrutiny as standard practice rather than personal criticism.

🔧 Technology Tools That Support Comprehensive Analysis

Modern technology offers powerful capabilities for managing data comprehensiveness, though tools alone never substitute for good governance and culture.

Automated Data Quality Platforms

Specialized software can monitor data pipelines for completeness, flag unusual gaps, and ensure consistent collection across sources. These platforms establish baselines for expected data volumes and characteristics, triggering alerts when patterns deviate in ways suggesting selective capture or processing.
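The baseline-and-alert idea can be sketched in a few lines. This is not how any particular platform works; it is a simplified illustration that treats recent daily record counts as the baseline and flags a day whose count deviates by more than a chosen number of standard deviations. The function name, the threshold of 3.0, and the sample counts are assumptions.

```python
from statistics import mean, stdev


def volume_alert(history, today, z_threshold=3.0):
    """Return True when today's record count deviates from the historical
    baseline by more than z_threshold sample standard deviations.

    history needs at least two values, otherwise stdev raises StatisticsError.
    """
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # A perfectly flat history: any change at all is a deviation.
        return today != baseline
    return abs(today - baseline) / spread > z_threshold


# Illustrative daily record counts from one pipeline.
recent_counts = [1000, 1020, 980, 1010, 990]

print(volume_alert(recent_counts, today=600))
print(volume_alert(recent_counts, today=1005))
```

A sudden drop like the first case does not prove selective capture, but it is the kind of deviation that should prompt someone to ask what stopped arriving.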

Machine learning algorithms can even predict what data should exist based on related information, identifying probable gaps that human reviewers might miss. These predictive approaches transform data quality from reactive fixes to proactive prevention.

Integrated Analytics Environments

Platforms that consolidate multiple data sources into unified environments reduce the friction that drives convenience-based selection. When analysts can access diverse information through single interfaces, they’re more likely to incorporate comprehensive perspectives.

Cloud-based solutions particularly excel at breaking down traditional data silos. Information from sales, operations, customer service, and external sources can flow into common repositories that enable holistic analysis without extensive integration projects.

Visualization Tools That Reveal Gaps

Advanced visualization platforms can make data absences visible. Heat maps might show which customer segments lack representation in feedback databases. Timeline charts can reveal temporal gaps where collection paused or sources went offline.

These visual approaches leverage human pattern recognition abilities to spot incompleteness that might hide in traditional reports. When gaps become visible, they demand explanation and remediation in ways that purely statistical approaches might not trigger.
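Before a timeline chart can display a temporal gap, something has to find it. A minimal sketch, assuming one expected observation per calendar day (the function name and sample dates are hypothetical):

```python
from datetime import date, timedelta


def missing_days(observed, start, end):
    """Return every date from start to end (inclusive) with no observation."""
    seen = set(observed)
    total_days = (end - start).days
    expected = (start + timedelta(days=i) for i in range(total_days + 1))
    return [day for day in expected if day not in seen]


# Illustrative collection dates with a two-day pause.
collected_on = [date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 5)]

gaps = missing_days(collected_on, date(2024, 1, 1), date(2024, 1, 5))
print(gaps)
```

The resulting list can then feed whatever charting tool the team already uses, so that the gap appears as a visible break rather than a silently shorter series.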

🎯 Transforming Data Challenges Into Competitive Advantages

Organizations that master comprehensive data practices don’t just avoid pitfalls—they gain significant advantages over competitors who remain trapped in selective patterns.

More Accurate Market Understanding

Companies that incorporate complete customer data—including inconvenient feedback—develop products and services that better match actual market needs. They identify opportunities that competitors miss because they’re looking at fuller pictures of customer behavior and preferences.

This accuracy compounds over time. Each decision based on comprehensive data positions the organization slightly better than alternatives built on partial information. Years of systematically better choices create substantial competitive separation.

Enhanced Agility and Adaptation

Organizations accustomed to confronting complete data adapt faster when markets shift. They’ve already developed muscles for incorporating uncomfortable information rather than dismissing signals that don’t match expectations. This psychological preparedness translates to operational agility.

When disruption arrives, comprehensive data practitioners recognize threats earlier because they’re monitoring broader information landscapes. They respond more effectively because their decision frameworks already account for complexity rather than oversimplified narratives.

Stronger Stakeholder Relationships

Transparency about data practices builds trust with employees, customers, investors, and regulators. Organizations known for rigorous analytical integrity attract better talent, more loyal customers, and more patient capital. These stakeholder advantages create virtuous cycles that reinforce competitive positions.

Customers increasingly scrutinize how companies use data. Companies that demonstrate comprehensive, ethical practices differentiate themselves in markets where privacy and integrity matter. This reputation becomes an asset that influences purchasing decisions and brand perception.

🌟 Cultivating Long-Term Data Excellence

Sustainable protection against selective data inclusion requires ongoing commitment rather than one-time initiatives. Organizations must embed comprehensive practices into their cultural DNA.

Continuous Education and Awareness

Regular training helps teams recognize selection bias in themselves and others. Case studies of internal and external failures illustrate consequences in memorable ways. Ongoing education prevents complacency and keeps data integrity top of mind.

These programs should evolve beyond technical training to address the psychological and organizational dynamics that drive selective practices. Understanding why humans naturally cherry-pick data helps individuals develop personal countermeasures.

Metrics That Measure Comprehensiveness

What gets measured gets managed. Organizations should track indicators of data completeness: percentage of identified sources actually integrated, time lag between data creation and analysis availability, frequency of analytical revisions due to new information, and stakeholder confidence in data quality.
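Two of these indicators are straightforward to compute once the underlying records exist. The sketch below is one possible formulation, not a standard: the function names are hypothetical, the integration rate is defined as integrated sources divided by identified sources, and the lag is summarized with a median so that a single slow feed does not dominate.

```python
from datetime import datetime
from statistics import median


def integration_rate(identified, integrated):
    """Share of identified sources that are actually integrated (0.0 to 1.0)."""
    identified = set(identified)
    if not identified:
        raise ValueError("no sources have been identified")
    # Only count integrated sources that appear in the identified inventory.
    return len(identified & set(integrated)) / len(identified)


def median_lag_hours(events):
    """Median hours between data creation and availability for analysis.

    events is an iterable of (created_at, available_at) datetime pairs.
    """
    return median(
        (available - created).total_seconds() / 3600
        for created, available in events
    )


# Illustrative values only.
rate = integration_rate(
    identified=["crm", "web", "surveys", "support"],
    integrated=["crm", "web"],
)
lag = median_lag_hours([
    (datetime(2024, 1, 1, 0), datetime(2024, 1, 1, 6)),
    (datetime(2024, 1, 1, 0), datetime(2024, 1, 1, 12)),
    (datetime(2024, 1, 1, 0), datetime(2024, 1, 2, 0)),
])
print(rate, lag)
```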

These metrics shouldn’t become targets that drive gaming behavior, but rather diagnostic tools that reveal where investments in comprehensiveness deliver the greatest returns. Regular reviews identify improvement opportunities and celebrate progress.

Leadership Modeling

Executives who publicly acknowledge when they initially overlooked important data demonstrate that changing conclusions based on new information represents strength, not weakness. These visible examples establish norms that permeate organizational culture.

When leaders ask “what aren’t we seeing?” in strategy sessions, they signal that comprehensive analysis matters more than convenient answers. This consistent messaging from the top shapes behavior throughout the organization.

💡 The Path Forward: From Pitfall to Possibility

Selective data inclusion will never completely disappear. Human cognition, practical constraints, and organizational dynamics ensure some level of incompleteness in every analysis. The goal isn’t perfection but rather systematic reduction of selection bias through deliberate practices and sustained attention.

Organizations that recognize this challenge as ongoing rather than solvable position themselves for continuous improvement. They build feedback loops that reveal new gaps as business contexts evolve. They invest in capabilities that expand what’s feasible to measure and analyze.

Most importantly, they cultivate humility about what they know and don’t know. This intellectual honesty creates space for discovering uncomfortable truths that ultimately drive better decisions. The companies that thrive tomorrow will be those that see complete pictures today, even when those pictures are unflattering.

The journey toward comprehensive data practices demands investment, discipline, and courage. But the alternative—making critical decisions based on convenient fictions—poses far greater risks. As data continues shaping competitive advantage, the ability to navigate beyond selective inclusion separates winners from casualties in an increasingly complex business landscape.

Start today by asking: what data are we not seeing, and what might it tell us? That single question, asked consistently and answered honestly, begins the transformation from selective data inclusion to comprehensive insight that drives genuinely smarter decisions. Your organization’s future depends on the courage to see completely rather than selectively.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to the perceptual challenges of Cognitive Interpretation Limits, the strategic value of Decision Support Effectiveness, the cascading dangers of Error Amplification Risks, and the deliberate craft of Manual Chart Construction.

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.