System-wide reliability degradation silently threatens our digital infrastructure, creating cascading failures that impact businesses, governments, and everyday users across the globe. 🌐

In an era where technology underpins virtually every aspect of modern life, the stability and dependability of our interconnected systems have never been more critical. From cloud computing platforms to financial networks, healthcare systems to supply chain management, a single point of failure can trigger a domino effect that reverberates throughout entire ecosystems. Understanding and addressing this ripple effect has become paramount for organizations seeking to build resilient, future-proof infrastructure.

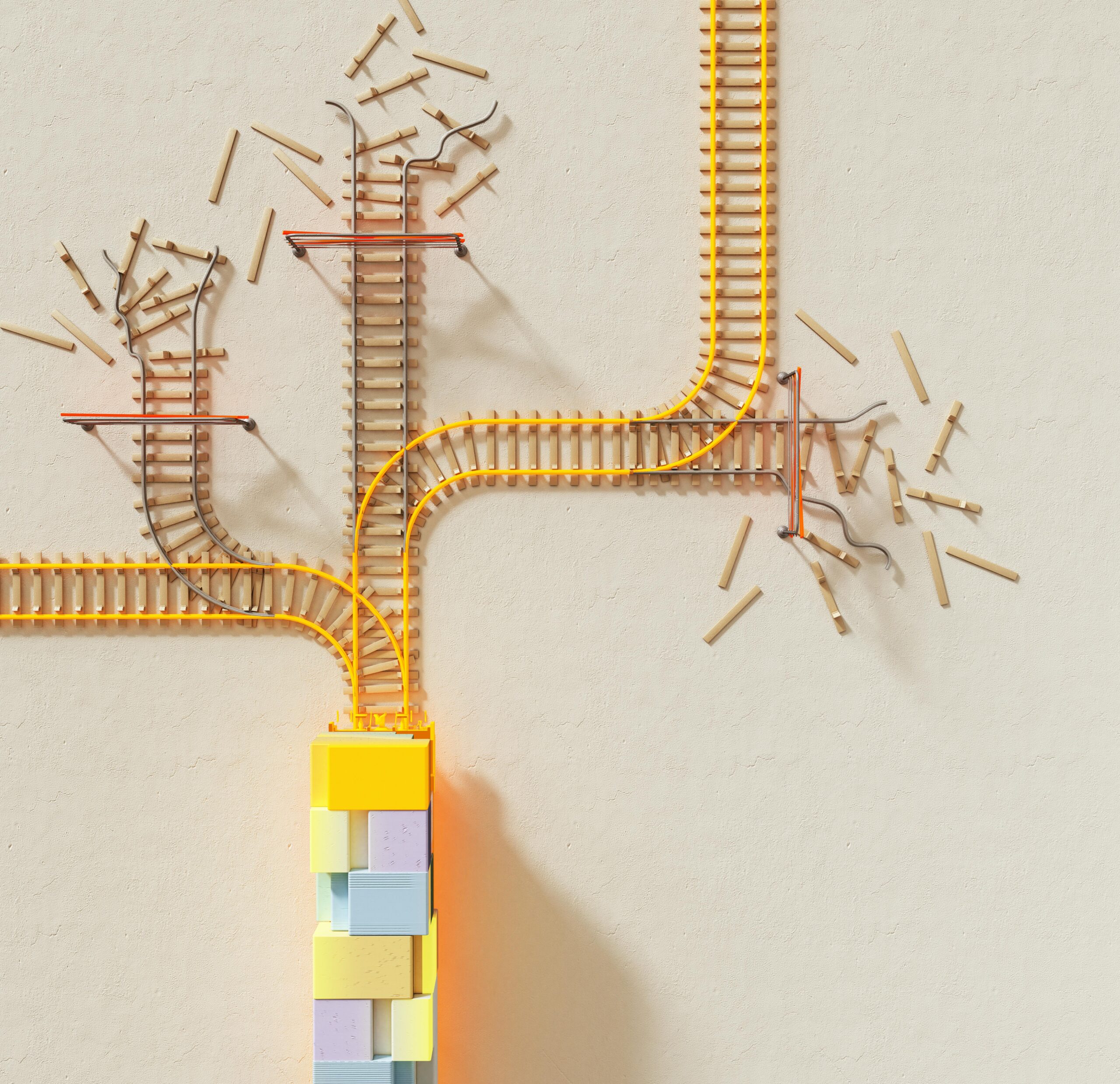

The complexity of today’s technological landscape means that reliability issues rarely exist in isolation. When one component degrades, it creates stress on connected systems, potentially leading to widespread disruption. This phenomenon challenges traditional approaches to system maintenance and demands a more holistic, proactive strategy for ensuring operational continuity.

🔍 Understanding the Anatomy of System-Wide Degradation

System-wide reliability degradation occurs when performance issues in one part of an infrastructure spread to affect other components, creating a cascading effect that compounds the original problem. Unlike isolated failures that can be quickly contained and resolved, these ripple effects pose unique challenges because they’re often unpredictable and can manifest in unexpected ways.

The root causes of these degradation patterns typically stem from several interconnected factors. Increased load on shared resources, configuration drift, accumulated technical debt, and inadequate capacity planning all contribute to a gradual erosion of system performance. What starts as a minor slowdown in one service can quickly snowball into widespread latency issues, timeouts, and ultimately complete service disruptions.

Modern architectures, particularly microservices and distributed systems, amplify these challenges. While these designs offer tremendous scalability and flexibility, they also create numerous points of potential failure. A single slow-responding service can hold up dozens of dependent services, each waiting for responses that never arrive in time. The effect also compounds: if a request depends on ten services that are each 99.9% available and fail independently, it can succeed at most about 99% of the time. This interconnectedness means that reliability is only as strong as the weakest link in the chain.
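
One common way to keep a slow dependency from stalling its callers is to put a deadline on every outbound call. The sketch below is a minimal illustration using Python's standard `concurrent.futures`; the names `call_with_deadline` and `slow_inventory_lookup`, the pool size, and the timeout values are assumptions made for this example, not part of any particular framework.

```python
import concurrent.futures
import time

# Shared worker pool for outbound calls (size is an illustrative assumption).
_pool = concurrent.futures.ThreadPoolExecutor(max_workers=4)

def call_with_deadline(fn, timeout_s, fallback=None):
    """Return fn()'s result, or fallback if it does not finish within timeout_s."""
    future = _pool.submit(fn)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        # The worker thread keeps running, but the caller is no longer blocked.
        future.cancel()  # only has an effect if the call has not started yet
        return fallback

def slow_inventory_lookup():
    """Hypothetical dependency that has become slow."""
    time.sleep(0.3)
    return {"in_stock": True}

# The caller waits at most 50 ms instead of the full 300 ms.
result = call_with_deadline(
    slow_inventory_lookup, timeout_s=0.05, fallback={"in_stock": None}
)
```

A deadline bounds how long a caller waits, but it does not stop the slow work itself, so it is usually paired with the isolation patterns discussed later in this article.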

The Hidden Costs of Degradation

The financial impact of system-wide reliability issues extends far beyond immediate downtime costs. Organizations face revenue loss from unavailable services, customer churn due to poor experiences, contractual penalties or service credits for service level agreement (SLA) violations, and significant engineering resources diverted to firefighting rather than innovation.

Reputation damage represents another critical but often underestimated cost. In today’s hyperconnected world, news of outages spreads instantly through social media and news outlets. Customer trust, painstakingly built over years, can evaporate within hours when systems fail repeatedly or unpredictably.

⚡ Identifying Early Warning Signs Before Crisis Strikes

Proactive monitoring represents the first line of defense against system-wide degradation. Organizations must develop sophisticated observability practices that go beyond traditional monitoring to provide deep insights into system behavior and health.

Key indicators of impending reliability issues include gradual increases in response times, growing error rates that haven’t yet triggered alerts, increasing memory consumption patterns, and unusual traffic patterns that suggest capacity limits are being approached. These subtle signals, when detected early, provide crucial time to intervene before minor issues escalate into major incidents.

Effective early detection requires comprehensive instrumentation across all system layers. Application performance monitoring, infrastructure metrics, log aggregation, and distributed tracing must work together to provide a complete picture of system health. This holistic view enables teams to correlate seemingly unrelated symptoms and identify the true root causes of degradation.

Building Robust Monitoring Frameworks

A mature monitoring strategy encompasses multiple dimensions of system health. Resource utilization metrics track CPU, memory, disk, and network consumption. Application-level metrics monitor request rates, error rates, and latency distributions. Business metrics connect technical performance to actual user impact and revenue implications.

The following critical metrics should be continuously monitored across distributed systems:

- Latency percentiles: Track P50, P95, and P99 response times; the tail percentiles expose degradation affecting the slowest requests, which averages tend to hide

- Error budgets: Monitor remaining error budget to balance reliability with innovation velocity

- Saturation metrics: Measure how full services are, predicting when capacity limits will be reached

- Traffic patterns: Analyze request volumes and patterns to anticipate scaling needs

- Dependency health: Monitor external dependencies and their impact on overall system reliability
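
As a rough illustration of the first few metrics in this list, the sketch below computes latency percentiles, an error rate, and a saturation ratio from raw samples. It uses the nearest-rank percentile definition, which is one of several in common use; the sample values and function names are invented for the example.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def error_rate(total_requests, failed_requests):
    """Fraction of requests that failed."""
    return failed_requests / total_requests if total_requests else 0.0

def saturation(in_use, capacity):
    """How full a resource is, from 0.0 (idle) to 1.0 (at capacity)."""
    return in_use / capacity

# Illustrative latency samples in milliseconds: mostly fast, with two slow outliers.
latencies_ms = [12, 15, 14, 13, 250, 16, 15, 14, 13, 900]

summary = {
    "mean": sum(latencies_ms) / len(latencies_ms),
    "p50": percentile(latencies_ms, 50),
    "p95": percentile(latencies_ms, 95),
    "p99": percentile(latencies_ms, 99),
    "error_rate": error_rate(total_requests=2000, failed_requests=7),
    "pool_saturation": saturation(in_use=45, capacity=50),
}
```

In this sample the median stays low while the tail percentiles land on the slow outliers, which is the kind of gap that a mean alone would blur.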

🛡️ Architectural Strategies for Resilience

Building systems that gracefully handle degradation requires intentional architectural decisions from the earliest design phases. Resilience cannot be bolted on as an afterthought; it must be woven into the fabric of system architecture.

Circuit breaker patterns protect systems from cascading failures by detecting when a downstream service becomes unhealthy and temporarily stopping calls to it. When failures cross a configured threshold, the circuit breaker opens: callers fail fast or fall back instead of piling more requests onto the struggling component, giving it time to recover. After a cool-down period, the breaker lets trial requests through and closes again only if they succeed. This pattern limits failure propagation and maintains partial functionality even when some components are unavailable.
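
The circuit breaker idea can be sketched in a few lines. The version below is a deliberately simplified, single-threaded illustration: the class name, the default threshold, and the reset timeout are assumptions for this example, and production libraries typically add thread safety, limits on trial requests, and metrics.

```python
import time

class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""

class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock          # injectable so the breaker can be tested without sleeping
        self.failures = 0           # consecutive failures seen so far
        self.opened_at = None       # None means the circuit is closed

    @property
    def state(self):
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout_s:
            return "half-open"      # cool-down elapsed: allow a trial call
        return "open"

    def call(self, fn, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("failing fast; dependency marked unhealthy")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call re-opens immediately; otherwise open at the threshold.
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success resets the breaker to closed.
        self.failures = 0
        self.opened_at = None
        return result
```

Callers would wrap each outbound request in `breaker.call(...)` and treat `CircuitOpenError` as a signal to use a fallback rather than retry.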

Bulkhead isolation separates system resources so that failures in one area cannot consume resources needed by other areas. Just as watertight compartments on ships prevent a single breach from sinking the entire vessel, bulkhead patterns ensure that problems remain contained. This might involve separate thread pools for different operations, dedicated database connections for critical functions, or isolated compute resources for high-priority workloads.
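
A lightweight way to approximate bulkheads inside a single process is to cap concurrency per workload with a semaphore. The sketch below assumes hypothetical `checkout` and `reports` workloads and made-up limits; it rejects excess work immediately rather than queueing it, which is one possible design choice among several.

```python
import threading

class BulkheadFullError(Exception):
    """Raised when a compartment has no free slots."""

class Bulkhead:
    def __init__(self, name, max_concurrent):
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def run(self, fn, *args, **kwargs):
        # Reject immediately instead of waiting, so a saturated compartment
        # cannot tie up the caller's own threads.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError(f"{self.name} compartment is full")
        try:
            return fn(*args, **kwargs)
        finally:
            self._slots.release()

# Separate compartments: a flood of report requests cannot consume checkout capacity.
checkout = Bulkhead("checkout", max_concurrent=20)
reports = Bulkhead("reports", max_concurrent=2)
```

Separate thread pools or connection pools follow the same principle at a coarser granularity.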

Embracing Graceful Degradation

Systems designed with graceful degradation in mind continue providing core functionality even when non-essential features fail. This approach prioritizes critical user journeys and ensures that temporary issues don’t result in complete service unavailability.

Implementing graceful degradation requires careful prioritization of features and functionality. Teams must identify which capabilities are absolutely essential and which can be temporarily disabled or simplified during incidents. For example, an e-commerce platform might disable product recommendations but keep checkout functionality working, or a social media platform might limit video uploads while maintaining text posting capabilities.
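
The e-commerce example might look something like the following sketch, in which a failing recommendation service is treated as optional while checkout stays enabled. The function names and page structure are hypothetical and chosen only to illustrate the fallback.

```python
def fetch_recommendations(user_id):
    """Hypothetical non-essential dependency that is currently failing."""
    raise TimeoutError("recommendation service is degraded")

def render_product_page(user_id, product, recommend=fetch_recommendations):
    """Build the page, treating recommendations as optional."""
    page = {"product": product, "checkout_enabled": True}
    try:
        page["recommendations"] = recommend(user_id)
    except Exception:
        # Non-essential feature failed: serve the page without it.
        page["recommendations"] = []
        page["degraded"] = True
    return page

page = render_product_page(user_id=42, product="espresso machine")
```

The key design decision is made ahead of time: recommendations are classified as optional, so their failure never propagates into the checkout path.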

🔧 Operational Excellence Through Chaos Engineering

Chaos engineering represents a paradigm shift in how organizations approach reliability. Rather than waiting for failures to occur in production, chaos engineering proactively introduces controlled failures to identify weaknesses and validate that systems behave as expected under stress.

The practice involves systematically experimenting on distributed systems to build confidence in their ability to withstand turbulent conditions. Teams formulate hypotheses about how systems should behave, introduce variables that could cause failures, and observe the results. This scientific approach to reliability uncovers hidden dependencies, single points of failure, and incorrect assumptions about system behavior.
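
To make the hypothesis-driven loop concrete, here is a toy experiment that injects faults into a hypothetical `lookup_price` dependency and checks whether a simple retry policy keeps the success ratio above a chosen bar. Everything here (the failure rate, the 97% bar, the function names) is an assumption for illustration; real chaos tooling injects faults at the network or infrastructure level rather than in-process.

```python
import random

def inject_faults(fn, failure_rate, rng):
    """Wrap fn so that a fraction of calls raise an injected ConnectionError."""
    def wrapped(*args, **kwargs):
        if rng.random() < failure_rate:
            raise ConnectionError("injected fault")
        return fn(*args, **kwargs)
    return wrapped

def lookup_price(sku):
    """Hypothetical healthy dependency."""
    return 9.99

def price_with_retry(lookup, sku, attempts=3):
    """System under test: retry the lookup a few times before giving up."""
    for _ in range(attempts):
        try:
            return lookup(sku)
        except ConnectionError:
            continue
    return None

def run_experiment(failure_rate, requests=1000, seed=42):
    """Return the fraction of requests that succeeded under injected faults."""
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    flaky = inject_faults(lookup_price, failure_rate, rng)
    ok = sum(price_with_retry(flaky, "sku-1") is not None for _ in range(requests))
    return ok / requests

# Hypothesis: with 20% of calls failing, retries keep at least 97% of requests successful.
success_ratio = run_experiment(failure_rate=0.2)
hypothesis_holds = success_ratio >= 0.97
```

If the hypothesis fails, the experiment has surfaced a weakness cheaply; if it holds, the team gains evidence that the retry policy behaves as assumed.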

Starting with chaos engineering requires careful planning and gradual escalation. Initial experiments should be conducted in non-production environments with well-defined blast radius limits. As confidence grows, experiments can expand to production systems during low-traffic periods, eventually becoming part of regular operational practices.

Game Days and Disaster Recovery Drills

Regular game days simulate realistic incident scenarios, allowing teams to practice response procedures in low-pressure environments. These exercises validate runbooks, test communication channels, and identify gaps in incident response capabilities before real emergencies occur.

Effective game days recreate the stress and confusion of actual incidents without the consequences. Teams practice coordinating across organizational boundaries, making decisions with incomplete information, and communicating with stakeholders during crises. The lessons learned from these exercises directly improve real incident response effectiveness.

📊 Data-Driven Decision Making for Reliability

Sustained reliability improvements require objective measurement and data-driven decision making. Service level objectives (SLOs) and error budgets provide frameworks for balancing reliability with innovation velocity, ensuring that systems meet user expectations without unnecessarily constraining development teams.

SLOs quantify the level of reliability that users actually need, as opposed to pursuing unrealistic perfection. By defining specific, measurable targets for availability, latency, and other key metrics, organizations create shared understanding of reliability goals across engineering, product, and business teams.

Error budgets operationalize SLOs by calculating the allowable amount of unreliability within a given time period. When systems operate within their error budget, teams have freedom to move quickly and take calculated risks. When error budgets are exhausted, focus shifts entirely to reliability improvements until systems return to acceptable performance levels.
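
The arithmetic behind an error budget is simple enough to sketch. The example below assumes a request-based SLO and made-up traffic numbers; time-based budgets follow the same pattern with minutes instead of requests.

```python
def error_budget(slo_target, total_requests, failed_requests):
    """Summarize error budget usage for a request-based SLO over one window."""
    allowed_failures = (1 - slo_target) * total_requests
    return {
        "allowed_failures": allowed_failures,
        "remaining_failures": allowed_failures - failed_requests,
        "budget_consumed": (
            failed_requests / allowed_failures if allowed_failures else float("inf")
        ),
    }

# Illustrative window: a 99.9% SLO over one million requests allows roughly 1,000 failures.
budget = error_budget(slo_target=0.999, total_requests=1_000_000, failed_requests=400)

# Simple policy: risky releases are allowed only while budget remains.
can_ship_risky_change = budget["remaining_failures"] > 0
```

With 400 failures recorded, about 40% of this window's budget is spent, so the team in this example still has room to ship.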

Implementing Effective SLO Frameworks

Successful SLO implementation begins with understanding user expectations and business requirements. SLOs should be defined based on actual user impact rather than arbitrary technical metrics. A 99.9% availability target might sound impressive, but if users experience the system as unavailable due to unacceptable latency, the availability metric becomes meaningless.

The following table illustrates how different reliability targets translate to allowable downtime:

| Availability Target | Downtime Per Year | Downtime Per Month | Downtime Per Week |
|---|---|---|---|
| 99% (two nines) | 3.65 days | 7.31 hours | 1.68 hours |
| 99.9% (three nines) | 8.77 hours | 43.8 minutes | 10.1 minutes |
| 99.99% (four nines) | 52.6 minutes | 4.38 minutes | 1.01 minutes |
| 99.999% (five nines) | 5.26 minutes | 26.3 seconds | 6.05 seconds |
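
The table values can be reproduced with a short calculation. The figures are consistent with an average year of 365.25 days (8,766 hours) and a month of one twelfth of that, so the sketch below assumes those period lengths; the function name is illustrative.

```python
HOURS_PER_YEAR = 365.25 * 24  # 8766 hours, averaging in leap years

PERIOD_HOURS = {
    "year": HOURS_PER_YEAR,
    "month": HOURS_PER_YEAR / 12,
    "week": 7 * 24,
}

def allowed_downtime_hours(availability_pct, period):
    """Hours of downtime permitted in one period at the given availability."""
    return (1 - availability_pct / 100) * PERIOD_HOURS[period]

# Print each target's allowance in minutes for year, month, and week.
for target in (99, 99.9, 99.99, 99.999):
    row = [allowed_downtime_hours(target, p) for p in ("year", "month", "week")]
    print(f"{target}%: " + ", ".join(f"{hours * 60:.2f} min" for hours in row))
```

Each additional nine cuts the allowance by a factor of ten, which is why the jump from three to four nines usually requires architectural changes rather than incremental tuning.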

🚀 Automation and Self-Healing Systems

Manual intervention in complex distributed systems cannot keep pace with the speed and scale of modern infrastructure. Automation and self-healing capabilities enable systems to detect and resolve common issues without human intervention, dramatically reducing mean time to recovery and preventing minor issues from escalating.

Auto-scaling dynamically adjusts resource allocation based on demand, preventing capacity-related degradation before it impacts users. When traffic increases, systems automatically provision additional compute resources. When demand decreases, resources scale down to optimize costs. This elastic capacity ensures consistent performance across varying load conditions.
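
A proportional scaling rule is one simple way to express this behavior: scale the replica count by the ratio of observed to target utilization, then clamp it to configured bounds. The sketch below is similar in spirit to the formula documented for the Kubernetes Horizontal Pod Autoscaler, but the function, parameter names, and bounds are assumptions for illustration, and real autoscalers add stabilization windows and tolerances.

```python
import math

def desired_replicas(current_replicas, current_util_pct, target_util_pct,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling: size the fleet so utilization moves toward the target."""
    raw = math.ceil(current_replicas * current_util_pct / target_util_pct)
    # Clamp so a bad metric reading cannot scale to zero or without limit.
    return max(min_replicas, min(max_replicas, raw))

# Traffic spike: 4 replicas at 90% CPU with a 60% target.
scale_up = desired_replicas(4, current_util_pct=90, target_util_pct=60)

# Quiet period: 10 replicas at 30% CPU with the same target.
scale_down = desired_replicas(10, current_util_pct=30, target_util_pct=60)
```

Here the spike calls for six replicas and the quiet period for five; the upper bound keeps a runaway metric from provisioning unbounded capacity.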

Automated remediation handles common failure scenarios through predefined playbooks. When monitoring systems detect specific conditions, automated responses can restart failing services, clear caches, rotate credentials, or trigger failover to backup systems. These automated responses eliminate the delays inherent in human incident detection and response.
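
At its simplest, a remediation playbook is a mapping from detected conditions to actions, with escalation to a human when nothing matches. The condition names and actions below are hypothetical placeholders that return descriptive strings; a real system would call orchestration or cloud APIs at those points.

```python
def restart_service(alert):
    return f"restarted {alert['service']}"

def clear_cache(alert):
    return f"cleared cache for {alert['service']}"

def fail_over(alert):
    return f"failed over {alert['service']} to standby"

# Hypothetical mapping from alert conditions to remediation actions.
PLAYBOOK = {
    "crash_loop": restart_service,
    "stale_cache": clear_cache,
    "primary_unreachable": fail_over,
}

def remediate(alert, playbook=PLAYBOOK):
    """Run the matching action, or escalate when no playbook entry applies."""
    action = playbook.get(alert["condition"])
    if action is None:
        return f"escalated {alert['service']} to on-call"
    return action(alert)

outcome = remediate({"service": "orders-api", "condition": "crash_loop"})
```

Keeping the escalation path explicit matters: automation should handle the well-understood cases and hand everything else to people.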

The Role of AI and Machine Learning

Advanced machine learning models can enhance reliability by flagging likely failures before they occur and by suggesting or applying configuration optimizations. Anomaly detection algorithms identify unusual patterns that might indicate impending issues, while predictive models forecast capacity needs and potential bottlenecks.

AI-driven operations analyze vast amounts of telemetry data to identify patterns invisible to human operators. These systems learn normal behavior baselines and alert when deviations suggest problems. Over time, machine learning models become increasingly sophisticated at distinguishing genuine issues from benign anomalies, reducing alert fatigue and improving signal-to-noise ratios.
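
Baseline learning does not have to start with a sophisticated model. The sketch below uses a rolling z-score, a basic statistical technique rather than machine learning proper, to flag values that deviate sharply from recent behavior. The window size, threshold, and class name are illustrative assumptions.

```python
from collections import deque
import statistics

class RollingAnomalyDetector:
    def __init__(self, window=30, threshold=3.0, min_samples=5):
        self.values = deque(maxlen=window)  # recent "normal" observations
        self.threshold = threshold          # z-score above which a value is flagged
        self.min_samples = min_samples      # warm-up before any flagging

    def observe(self, value):
        """Return True if value deviates sharply from the rolling baseline."""
        is_anomaly = False
        if len(self.values) >= self.min_samples:
            mean = statistics.mean(self.values)
            stdev = statistics.pstdev(self.values)
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep flagged outliers out of the baseline so they do not mask later ones.
            self.values.append(value)
        return is_anomaly

detector = RollingAnomalyDetector()
latencies_ms = [100, 102, 98, 101, 99, 100, 103, 97, 500, 101]
flags = [detector.observe(v) for v in latencies_ms]
```

In this series only the 500 ms sample is flagged. Production systems typically also account for seasonality and trend, which a plain z-score ignores.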

🤝 Fostering a Culture of Reliability

Technology alone cannot deliver sustained reliability improvements. Organizational culture, incentive structures, and collaboration patterns profoundly impact system reliability. Building a reliability-first culture requires intentional effort across multiple dimensions.

Blameless post-mortems transform incidents from finger-pointing exercises into learning opportunities. When teams feel safe discussing failures openly, they share valuable insights that prevent future incidents. This psychological safety encourages transparency, enabling organizations to address systemic issues rather than scapegoating individuals.

Shared on-call responsibilities ensure that engineers who build systems also experience their operational challenges. This connection between development and operations naturally incentivizes building more reliable, maintainable systems. Engineers who respond to 3 AM pages caused by their own code quickly learn to prioritize operational excellence.

Cross-Functional Collaboration Models

Breaking down silos between development, operations, security, and other functions accelerates reliability improvements. Site reliability engineering (SRE) teams embedded within product teams ensure that reliability considerations influence design decisions from the earliest stages. Regular knowledge sharing sessions, rotation programs, and collaborative incident response reinforce these cross-functional connections.

🌟 Building Tomorrow’s Resilient Infrastructure Today

The journey toward truly resilient systems requires sustained commitment, continuous learning, and willingness to challenge conventional approaches. Organizations that invest in reliability engineering today position themselves for success in an increasingly complex technological landscape.

Emerging technologies bring both opportunities and challenges for system reliability. Edge computing, serverless architectures, and multi-cloud strategies introduce new failure modes while enabling new resilience patterns. Staying ahead requires ongoing education, experimentation, and adaptation as the technology landscape evolves.

The most successful organizations view reliability not as a destination but as a continuous journey. They establish feedback loops that capture lessons from incidents, measure the effectiveness of improvements, and constantly refine their approaches. This commitment to continuous improvement creates virtuous cycles where each incident strengthens overall system resilience.

Tackling system-wide reliability degradation demands a comprehensive approach spanning technology, processes, and culture. By implementing robust monitoring, architecting for resilience, embracing chaos engineering, leveraging data-driven decision making, automating remediation, and fostering reliability-focused culture, organizations build the strong, smart infrastructure needed for tomorrow’s challenges. The ripple effects of these investments extend far beyond preventing outages, creating competitive advantages through superior user experiences, operational efficiency, and organizational agility.

As systems grow increasingly complex and interdependent, the stakes for reliability continue rising. Organizations that master these principles do more than prevent failures: they build trust, enable innovation, and create sustainable foundations for long-term success in our digitally driven world. 🎯

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge. As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits

- The strategic value of Decision Support Effectiveness

- The cascading dangers of Error Amplification Risks

- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.