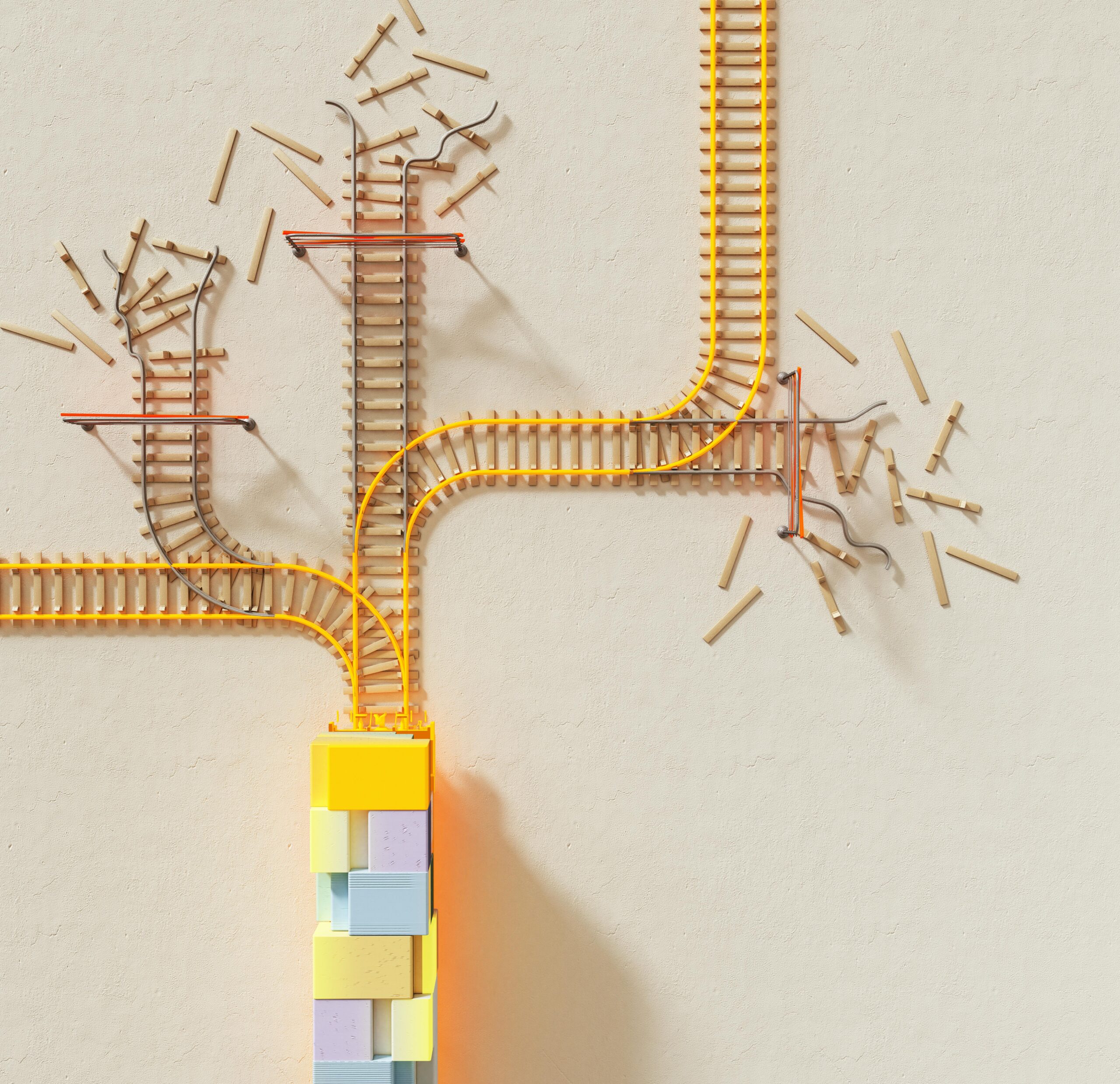

Machine learning systems built on interconnected models face a critical challenge: compounding errors that spiral through dependencies, threatening reliability and performance in production environments.

🔄 Understanding the Cascade Effect in Model Dependencies

Modern artificial intelligence solutions rarely operate in isolation. Instead, they exist as intricate ecosystems where multiple models work together, each processing outputs from previous stages. This architecture creates powerful capabilities but introduces a fundamental vulnerability: when one model makes an error, subsequent models in the chain inherit and often amplify that mistake.

The phenomenon resembles a game of telephone where each participant adds their own interpretation. In machine learning pipelines, this compounding effect can transform minor inaccuracies into catastrophic failures. Understanding this spiral is the first step toward building more resilient systems that deliver consistent results across diverse scenarios.

📊 The Anatomy of Error Propagation

Error propagation in dependent models follows predictable patterns. When an upstream model misclassifies an input with 90% confidence, downstream models typically treat that incorrect classification as ground truth. The second model then processes the flawed input and contributes its own errors (say, a 10% error rate), so the share of correct end-to-end outputs shrinks roughly geometrically with each stage added to the pipeline.

Primary Sources of Compounding Errors

Several factors contribute to this escalating problem. Data drift occurs when the statistical properties of input data change over time, causing models trained on historical datasets to perform poorly on current information. Distribution shifts between training and production environments create mismatches that early-stage models fail to handle gracefully, passing corrupted representations downstream.

Model interdependence creates tight coupling where specialized models depend on specific output formats and confidence distributions from their predecessors. When upstream models undergo updates or retraining, these dependencies can break silently, introducing errors that remain undetected until they manifest as user-facing failures.

🎯 Quantifying the Compounding Problem

Mathematical analysis reveals the severity of cascading errors. Consider a pipeline with three sequential models, each achieving 95% individual accuracy. Intuitively, one might expect the combined system to perform near this benchmark. However, if the final output is correct only when every stage is correct, the compound accuracy is approximately 85.7% (0.95³), a significant degradation that worsens with pipeline length.

This calculation assumes that errors at each stage are independent, but real-world errors are often correlated. When upstream mistakes systematically push downstream models into inputs they handle poorly, end-to-end accuracy can fall below the simple product. Certain error types propagate more aggressively than others, creating vulnerability hotspots in the dependency chain.
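The arithmetic above can be checked with a short sketch. It assumes independent errors and that the final output is correct only when every stage is correct; the function names `compound_accuracy` and `stages_until_below` are illustrative, not from any library.

```python
from math import prod


def compound_accuracy(stage_accuracies):
    """End-to-end accuracy if every stage must be right and errors are independent."""
    return prod(stage_accuracies)


def stages_until_below(per_stage_accuracy, floor):
    """Smallest number of identical stages whose compound accuracy drops below `floor`."""
    if not (0.0 < per_stage_accuracy < 1.0 and 0.0 < floor < 1.0):
        raise ValueError("both arguments must lie strictly between 0 and 1")
    n = 1
    while per_stage_accuracy ** n >= floor:
        n += 1
    return n


print(round(compound_accuracy([0.95, 0.95, 0.95]), 4))  # 0.8574
print(stages_until_below(0.95, 0.5))                    # 14
```

Under these assumptions, a chain of 95%-accurate stages is wrong more often than right once it reaches 14 stages.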

Real-World Impact Across Industries

Healthcare diagnostic systems demonstrate these risks vividly. An image preprocessing model that incorrectly normalizes medical scans can cause subsequent disease detection models to miss critical findings. The compounding effect transforms a minor preprocessing error into a potentially life-threatening diagnostic failure.

Autonomous vehicle systems face similar challenges. Sensor fusion models combine data from cameras, lidar, and radar. When object detection models misidentify pedestrians as static objects, downstream path planning models may make dangerous navigation decisions based on this flawed perception.

🛠️ Architectural Strategies for Error Mitigation

Designing robust pipelines requires intentional architectural choices that anticipate and contain error propagation. Circuit breaker patterns borrowed from software engineering provide one effective approach. These mechanisms monitor model outputs for anomalies and halt processing when confidence scores drop below acceptable thresholds, preventing low-quality predictions from contaminating downstream stages.
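A minimal sketch of such a breaker is shown below. The class name, the threshold of 0.6, and the rule of tripping after a number of consecutive low-confidence outputs are all assumptions chosen for illustration; a production breaker would likely also need a reset or half-open state.

```python
from dataclasses import dataclass


class CircuitOpenError(RuntimeError):
    """Raised when the breaker has tripped and downstream processing is halted."""


@dataclass
class ConfidenceBreaker:
    threshold: float = 0.6  # hypothetical minimum acceptable confidence
    max_failures: int = 3   # consecutive low-confidence outputs before tripping
    failures: int = 0
    open: bool = False

    def check(self, prediction, confidence):
        """Return the prediction if it may flow downstream, otherwise None."""
        if self.open:
            raise CircuitOpenError("breaker is open; downstream stage skipped")
        if confidence < self.threshold:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.open = True
            return None  # withhold the low-confidence prediction
        self.failures = 0  # a healthy output resets the streak
        return prediction


breaker = ConfidenceBreaker(threshold=0.6, max_failures=2)
print(breaker.check("cat", 0.9))  # cat
print(breaker.check("dog", 0.3))  # None
```

Withholding single low-confidence outputs and halting only after a streak keeps one noisy input from stopping the whole pipeline.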

Implementing Confidence-Aware Pipelines

Traditional pipelines treat all predictions equally, but confidence-aware architectures propagate uncertainty measurements alongside predictions. When an upstream model expresses low confidence, downstream models can adjust their processing strategies, applying more conservative thresholds or triggering human review workflows.
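One way to picture this is a prediction object that carries its confidence forward, sketched below. The `Prediction` type, the `chain` and `route` helpers, and the 0.5 and 0.8 cut-offs are hypothetical, and multiplying confidences assumes they are calibrated probabilities of independent events.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]


def chain(upstream, label, stage_confidence):
    """Carry uncertainty forward: chained confidence never exceeds the upstream value."""
    return Prediction(label, upstream.confidence * stage_confidence)


def route(prediction, review_below=0.5, cautious_below=0.8):
    """Pick a processing strategy from the accumulated confidence."""
    if prediction.confidence < review_below:
        return "human_review"
    if prediction.confidence < cautious_below:
        return "conservative"
    return "standard"


detected = Prediction("pedestrian", 0.9)
planned = chain(detected, "brake", 0.8)
print(route(planned))  # conservative
```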

Bayesian approaches excel in this domain by maintaining probability distributions rather than point estimates. Each model in the chain updates these distributions, providing downstream consumers with rich information about prediction uncertainty. This transparency enables more informed decision-making at every stage.
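The simplest version of this idea is to pass a full distribution over upstream labels and marginalize over it, rather than committing to the top label. The sketch below applies the law of total probability, P(y) = Σₓ P(y | x) P(x); the labels and probabilities are invented for illustration, and it is not a full Bayesian treatment.

```python
def propagate(upstream_dist, conditional):
    """Marginalize downstream outcomes over the upstream distribution."""
    downstream = {}
    for x, p_x in upstream_dist.items():
        for y, p_y_given_x in conditional[x].items():
            downstream[y] = downstream.get(y, 0.0) + p_x * p_y_given_x
    return downstream


# Hypothetical upstream belief and downstream conditional behaviour.
upstream = {"pedestrian": 0.7, "static_object": 0.3}
conditional = {
    "pedestrian": {"brake": 0.9, "proceed": 0.1},
    "static_object": {"brake": 0.2, "proceed": 0.8},
}

result = propagate(upstream, conditional)
print({action: round(p, 2) for action, p in result.items()})
```

A point-estimate pipeline would commit to "pedestrian" and report 0.9 for braking; the marginalized figure is lower because it retains the 30% chance that the upstream label is wrong.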

🔍 Monitoring and Detection Frameworks

Identifying compounding errors requires comprehensive monitoring that extends beyond individual model metrics. End-to-end performance tracking captures the cumulative impact of cascading failures, while intermediate checkpoints reveal where degradation begins.

Anomaly detection systems should monitor not just prediction accuracy but also the statistical properties of inter-model data flows. Sudden shifts in feature distributions or confidence score patterns often signal the onset of compounding problems before they manifest as obvious failures.
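As one illustration, a shift in the confidence scores passed between two stages can be scored with the population stability index (PSI), a common drift statistic that this article does not itself prescribe. The bin edges and sample values below are made up, values outside the edges are ignored, and alert thresholds are application-specific.

```python
import math


def bin_fractions(values, edges, eps=1e-6):
    """Fraction of values per bin; the last bin includes its upper edge."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            below_upper = v < edges[i + 1] or (is_last and v == edges[-1])
            if edges[i] <= v and below_upper:
                counts[i] += 1
                break
    # eps keeps empty bins from producing log(0) or division by zero
    return [max(c / len(values), eps) for c in counts]


def psi(reference, current, edges):
    """Population stability index between two samples over shared bins."""
    p = bin_fractions(reference, edges)
    q = bin_fractions(current, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


edges = [0.0, 0.5, 0.75, 1.0]
last_month = [0.90, 0.95, 0.85, 0.92, 0.60, 0.88]  # upstream confidences
this_week = [0.55, 0.60, 0.90, 0.50, 0.65, 0.58]

print(psi(last_month, last_month, edges))  # 0.0
print(psi(last_month, this_week, edges) > 0)  # True
```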

Key Metrics for Dependency Health

Effective monitoring tracks several critical indicators. Error correlation coefficients measure whether mistakes cluster together, suggesting systematic problems rather than random noise. Prediction stability metrics assess how much outputs vary with minor input perturbations, revealing brittleness in the pipeline.
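For two stages evaluated on the same inputs, an error correlation coefficient can be computed as the Pearson correlation of their 0/1 error indicators (the phi coefficient). The sketch below is one possible definition, not a standard monitoring API; returning 0.0 when a stage makes no errors (or only errors) is a convention chosen here.

```python
import math


def error_correlation(errors_a, errors_b):
    """Pearson correlation of two equal-length lists of 0/1 error flags."""
    n = len(errors_a)
    if n == 0 or n != len(errors_b):
        raise ValueError("inputs must be non-empty and of equal length")
    mean_a = sum(errors_a) / n
    mean_b = sum(errors_b) / n
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(errors_a, errors_b))
    var_a = sum((a - mean_a) ** 2 for a in errors_a)
    var_b = sum((b - mean_b) ** 2 for b in errors_b)
    if var_a == 0 or var_b == 0:
        return 0.0  # correlation is undefined; treated as "no evidence"
    return cov / math.sqrt(var_a * var_b)


stage_1 = [1, 0, 0, 1, 0, 0]  # 1 marks an input the stage got wrong
stage_2 = [1, 0, 0, 1, 0, 1]
print(error_correlation(stage_1, stage_2) > 0)  # True
```

A value near 1 suggests the stages fail on the same inputs, which points to a systematic cause rather than independent noise.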

Confidence calibration curves show whether model certainty estimates reflect actual accuracy. Poorly calibrated models that express high confidence in incorrect predictions cause especially severe compounding effects, as downstream systems trust these flawed inputs without appropriate skepticism.
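A calibration curve is often summarized as a single number, the expected calibration error (ECE): the average gap between stated confidence and observed accuracy, weighted by how many predictions fall in each confidence bin. The sketch below assumes equal-width bins and confidences in [0, 1]; the sample data is invented.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted mean of |average confidence - accuracy| over equal-width bins."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[index].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / total * abs(avg_conf - accuracy)
    return ece


# An overconfident model: it claims 90% certainty but is right half the time.
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
print(round(overconfident, 2))  # 0.4
```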

🔧 Remediation Techniques for Existing Pipelines

Organizations with established model chains need practical strategies for reducing compounding errors without complete architectural overhauls. Ensemble methods introduce redundancy by running multiple parallel models and aggregating their outputs, reducing the impact of individual model failures.
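The simplest aggregation rule is a majority vote over the parallel models' labels, sketched below. The `majority_vote` helper is illustrative; the agreement fraction it returns is a rough signal, not a calibrated probability.

```python
from collections import Counter


def majority_vote(predictions):
    """Return the most common label and the fraction of models that chose it.

    Ties go to the label that appears first in `predictions`.
    """
    if not predictions:
        raise ValueError("at least one prediction is required")
    label, votes = Counter(predictions).most_common(1)[0]
    return label, votes / len(predictions)


label, agreement = majority_vote(["tumor", "benign", "tumor"])
print(label)  # tumor
```

Low agreement can itself be passed downstream as a warning that the ensemble members disagree.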

Feedback Loops and Corrective Mechanisms

Implementing feedback connections allows downstream models to signal disagreement to upstream components. When a late-stage model detects inconsistencies in its inputs, it can trigger reprocessing with adjusted parameters or alternative model versions. This self-correcting capability prevents errors from accumulating unchecked.
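A bare-bones version of this loop is sketched below: the downstream side supplies a consistency check, and upstream variants are tried in order until one passes. All four callables are toy stand-ins for real models, and `run_with_feedback` is a hypothetical helper name.

```python
def run_with_feedback(raw_input, upstream_variants, downstream, looks_consistent):
    """Try upstream variants in order until the downstream check accepts one.

    Returns (result, attempts); result is None if every variant was rejected.
    """
    for attempt, upstream in enumerate(upstream_variants, start=1):
        intermediate = upstream(raw_input)
        if looks_consistent(intermediate):
            return downstream(intermediate), attempt
    return None, len(upstream_variants)


# Toy stand-ins: the primary parser fails on semicolon-separated input.
primary = lambda text: text.split(",")
fallback = lambda text: text.replace(";", ",").split(",")
expects_three_fields = lambda fields: len(fields) == 3
normalize = lambda fields: [field.strip().upper() for field in fields]

result, attempts = run_with_feedback(
    "a; b; c", [primary, fallback], normalize, expects_three_fields
)
print(result, attempts)  # ['A', 'B', 'C'] 2
```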

Attention mechanisms provide another powerful tool. Rather than blindly accepting upstream outputs, models can learn to selectively focus on reliable portions of their inputs while discounting suspect information. This selective trust reduces vulnerability to upstream errors.

📈 Training Strategies for Robust Dependencies

The training process itself offers opportunities to build resilience against compounding errors. Traditional approaches train each model independently on clean, curated datasets. However, this creates fragility when models encounter the messy, error-prone outputs of real production systems.

Adversarial Training for Pipeline Resilience

Training downstream models on intentionally corrupted inputs from upstream components builds tolerance to common error patterns. This adversarial-style approach, closer in practice to noise injection than to worst-case adversarial attacks, exposes models to the types of mistakes they will encounter in production, teaching them to maintain performance despite imperfect inputs.
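One simple way to produce such training inputs is to inject synthetic errors into upstream labels at a chosen rate, as sketched below. The `corrupt_labels` helper and the uniform choice of wrong label are assumptions; a closer match to production would sample errors from the upstream model's observed confusion matrix.

```python
import random


def corrupt_labels(upstream_labels, label_set, error_rate, seed=0):
    """Replace each label with a different one with probability `error_rate`."""
    if len(set(label_set)) < 2:
        raise ValueError("label_set needs at least two distinct labels")
    rng = random.Random(seed)  # seeded so training runs are reproducible
    corrupted = []
    for label in upstream_labels:
        if rng.random() < error_rate:
            alternatives = [other for other in label_set if other != label]
            corrupted.append(rng.choice(alternatives))
        else:
            corrupted.append(label)
    return corrupted


clean = ["car", "pedestrian", "car", "cyclist"]
noisy = corrupt_labels(clean, ["car", "pedestrian", "cyclist"], error_rate=0.1)
print(len(noisy) == len(clean))  # True
```

The downstream model is then trained on `noisy` upstream outputs paired with the true final targets, so it sees the kind of input it will receive in production.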

Joint training of connected models optimizes the entire pipeline simultaneously rather than treating components as independent units. This holistic approach lets individual models learn to compensate for each other's weaknesses, which can make the pipeline as a whole more robust against error propagation.

🌐 Human-in-the-Loop Integration Points

Strategic placement of human oversight provides crucial safeguards against runaway error compounding. Rather than reviewing every prediction, efficient designs identify high-risk cases where model confidence is low or where predictions deviate from expected patterns.
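A minimal triage rule along these lines sends only the least confident cases to reviewers, up to a fixed budget. The dictionary layout, the 0.7 floor, and the `select_for_review` name are assumptions made for this sketch.

```python
def select_for_review(cases, budget, confidence_floor=0.7):
    """Return up to `budget` cases below the floor, least confident first."""
    risky = [case for case in cases if case["confidence"] < confidence_floor]
    risky.sort(key=lambda case: case["confidence"])
    return risky[:budget]


cases = [
    {"id": 1, "confidence": 0.95},
    {"id": 2, "confidence": 0.40},
    {"id": 3, "confidence": 0.65},
    {"id": 4, "confidence": 0.20},
]
queue = select_for_review(cases, budget=2)
print([case["id"] for case in queue])  # [4, 2]
```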

Active learning frameworks use human feedback to continuously improve models in areas where they struggle most. When compounding errors occur, these systems capture the failure cases and incorporate them into retraining datasets, progressively reducing recurring vulnerability patterns.

Designing Effective Review Workflows

Human review interfaces should present predictions alongside confidence scores and explanations of model reasoning. This context enables reviewers to quickly assess whether errors stem from upstream problems or represent genuine ambiguity in the current stage.

Feedback from human reviewers should flow both forward and backward through the pipeline. Correcting a downstream prediction often reveals the need to adjust upstream models, creating improvement opportunities across the entire dependency chain.

🚀 Emerging Technologies and Future Directions

Recent advances in machine learning offer new approaches to the compounding error challenge. Neural architecture search algorithms could, in principle, be used to search for pipeline designs that reduce error propagation, exploring configuration spaces too vast for manual optimization.

Self-Healing Model Ecosystems

Research into autonomous systems that monitor and repair themselves shows promise for reducing compounding errors. These self-healing architectures detect performance degradation and automatically trigger corrective actions, from parameter adjustments to complete model replacement.

Federated learning approaches enable models to adapt to local conditions while sharing insights about error patterns across deployments. This collective intelligence helps identify and mitigate compounding errors that might remain hidden in isolated systems.

💡 Practical Implementation Roadmap

Organizations seeking to address compounding errors should follow a structured approach. Begin with comprehensive auditing of existing model dependencies, mapping data flows and identifying critical paths where errors could cascade most severely.

Implement baseline monitoring across all pipeline stages, establishing metrics that capture both individual model performance and end-to-end system behavior. This visibility provides the foundation for identifying problems and measuring improvement.

Phased Enhancement Strategy

Start with low-hanging fruit by adding confidence propagation to existing pipelines. This relatively simple change provides immediate benefits without requiring architectural redesign. Next, introduce circuit breakers at strategic points to contain identified vulnerability hotspots.

Gradually evolve toward more sophisticated solutions like joint training and adversarial hardening as organizational capabilities mature. This incremental approach balances immediate risk reduction with long-term architectural improvements.

🎓 Building Organizational Competency

Technical solutions alone cannot solve the compounding error problem. Organizations need cultural and procedural changes that prioritize pipeline-level thinking over individual model optimization. Cross-functional teams should include members responsible for different pipeline stages, fostering communication and shared understanding of dependencies.

Regular dependency reviews should become standard practice, examining how models interact and identifying emerging coupling risks. Documentation should explicitly describe expected input characteristics and confidence distributions, creating contracts between pipeline components.
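Such a contract can also be written down as an executable check that runs on each batch passed between stages. The sketch below is one hypothetical shape for it: `StageContract`, its fields, and the (label, confidence) batch format are illustrative choices, not an established standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageContract:
    allowed_labels: frozenset
    min_mean_confidence: float  # documented expectation for a healthy batch

    def violations(self, batch):
        """Return a list of human-readable problems for (label, confidence) pairs."""
        problems = []
        unknown = {label for label, _ in batch} - self.allowed_labels
        if unknown:
            problems.append(f"unexpected labels: {sorted(unknown)}")
        if any(not 0.0 <= conf <= 1.0 for _, conf in batch):
            problems.append("confidence outside [0, 1]")
        if batch:
            mean_conf = sum(conf for _, conf in batch) / len(batch)
            if mean_conf < self.min_mean_confidence:
                problems.append(f"mean confidence {mean_conf:.2f} below contract")
        return problems


contract = StageContract(frozenset({"cat", "dog"}), min_mean_confidence=0.7)
print(contract.violations([("cat", 0.9), ("dog", 0.8)]))  # []
```

Running the check after every upstream retraining is one way to surface dependencies that would otherwise break silently.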

Skills and Knowledge Development

Data science teams need training in systems thinking and error analysis techniques specific to model chains. Understanding how errors propagate requires different mental models than optimizing isolated models, and many practitioners lack exposure to these concepts.

Investing in tools and frameworks that support pipeline development creates lasting capability improvements. Libraries that handle confidence propagation, monitoring, and testing of model chains reduce the implementation burden and encourage best practices.

🔐 Ensuring Long-Term Reliability

Addressing compounding errors is not a one-time fix but an ongoing commitment to system health. As models evolve and business requirements change, new dependencies emerge and existing safeguards may become insufficient. Continuous vigilance and proactive maintenance keep error propagation under control.

Regular stress testing should challenge pipelines with adversarial inputs designed to trigger compounding failures. These exercises reveal weaknesses before they cause production incidents and validate that implemented safeguards function as intended.
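A lightweight starting point is a perturbation test that measures how often the end-to-end output survives small input changes. The sketch below uses random noise rather than truly adversarial inputs, which would require a targeted search; the `stability_rate` helper, the noise scale, and the toy pipeline are assumptions.

```python
import random


def stability_rate(pipeline, inputs, noise=0.01, trials=20, seed=0):
    """Fraction of perturbed runs whose output matches the unperturbed output."""
    rng = random.Random(seed)
    stable = 0
    total = 0
    for features in inputs:
        baseline = pipeline(features)
        for _ in range(trials):
            perturbed = [v + rng.uniform(-noise, noise) for v in features]
            stable += pipeline(perturbed) == baseline
            total += 1
    return stable / total


def toy_pipeline(features):
    """Two chained toy stages: a score, then a thresholded decision."""
    score = sum(features)
    return "high" if score > 1.0 else "low"


print(stability_rate(toy_pipeline, [[5.0, 5.0]]))  # 1.0
```

Inputs near a decision boundary, such as `[0.5, 0.5]` for this toy pipeline, are where the rate drops and where compounding failures are most likely to start.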

The journey toward reliable model-dependent systems requires acknowledging complexity rather than simplifying it away. By understanding how errors compound, implementing strategic safeguards, and maintaining constant vigilance, organizations can harness the power of interconnected models while controlling the risks they introduce. This balanced approach delivers the sophisticated capabilities modern applications demand with the reliability users deserve.

Toni Santos is a data visualization analyst and cognitive systems researcher specializing in the study of interpretation limits, decision support frameworks, and the risks of error amplification in visual data systems. Through an interdisciplinary and analytically-focused lens, Toni investigates how humans decode quantitative information, make decisions under uncertainty, and navigate complexity through manually constructed visual representations.

His work is grounded in a fascination with charts not only as information displays, but as carriers of cognitive burden. From cognitive interpretation limits to error amplification and decision support effectiveness, Toni uncovers the perceptual and cognitive tools through which users extract meaning from manually constructed visualizations.

With a background in visual analytics and cognitive science, Toni blends perceptual analysis with empirical research to reveal how charts influence judgment, transmit insight, and encode decision-critical knowledge.

As the creative mind behind xyvarions, Toni curates illustrated methodologies, interpretive chart studies, and cognitive frameworks that examine the deep analytical ties between visualization, interpretation, and manual construction techniques.

His work is a tribute to:

- The perceptual challenges of Cognitive Interpretation Limits
- The strategic value of Decision Support Effectiveness
- The cascading dangers of Error Amplification Risks
- The deliberate craft of Manual Chart Construction

Whether you're a visualization practitioner, cognitive researcher, or curious explorer of analytical clarity, Toni invites you to explore the hidden mechanics of chart interpretation: one axis, one mark, one decision at a time.